Auction-Based Regulation for Artificial Intelligence

Paper and Code

Oct 02, 2024

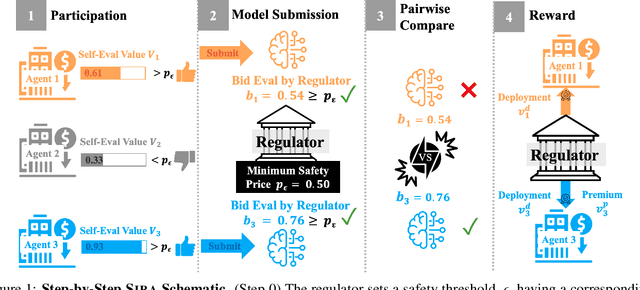

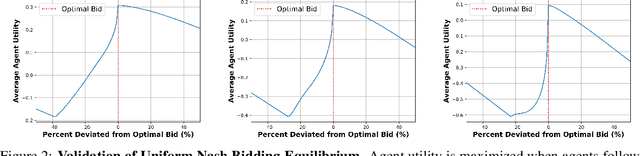

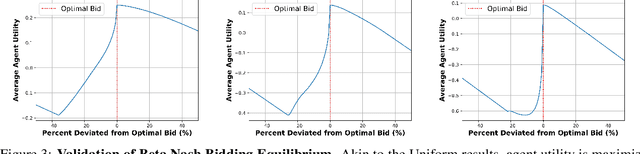

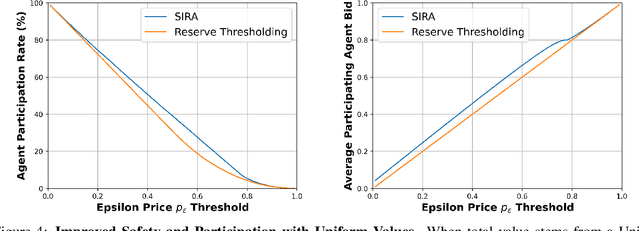

In an era of "moving fast and breaking things", regulators have moved slowly to pick up the safety, bias, and legal pieces left in the wake of broken Artificial Intelligence (AI) deployment. Since AI models, such as large language models, are able to push misinformation and stoke division within our society, it is imperative for regulators to employ a framework that mitigates these dangers and ensures user safety. While there is much-warranted discussion about how to address the safety, bias, and legal woes of state-of-the-art AI models, the number of rigorous and realistic mathematical frameworks to regulate AI safety is lacking. We take on this challenge, proposing an auction-based regulatory mechanism that provably incentivizes model-building agents (i) to deploy safer models and (ii) to participate in the regulation process. We provably guarantee, via derived Nash Equilibria, that each participating agent's best strategy is to submit a model safer than a prescribed minimum-safety threshold. Empirical results show that our regulatory auction boosts safety and participation rates by 20% and 15% respectively, outperforming simple regulatory frameworks that merely enforce minimum safety standards.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge