Attention-based Open RAN Slice Management using Deep Reinforcement Learning

Paper and Code

Jun 15, 2023

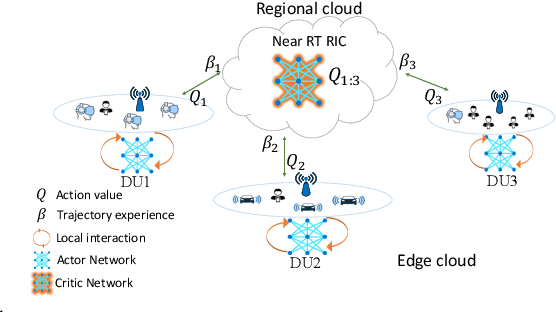

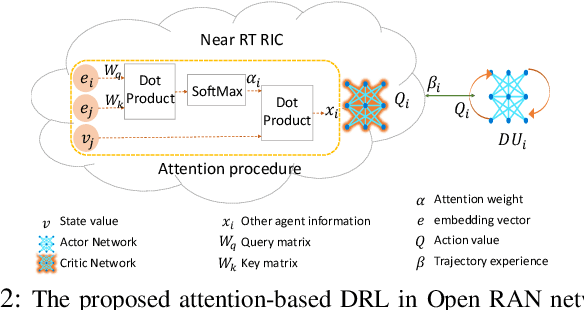

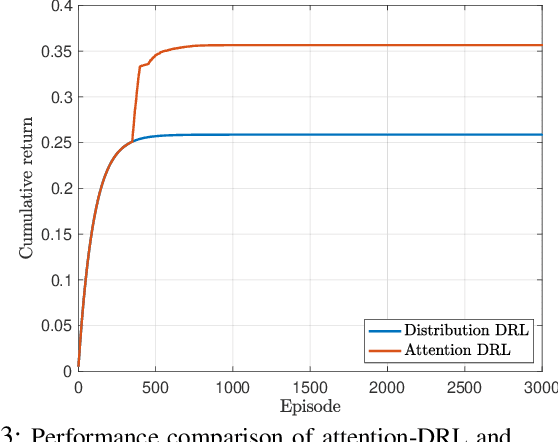

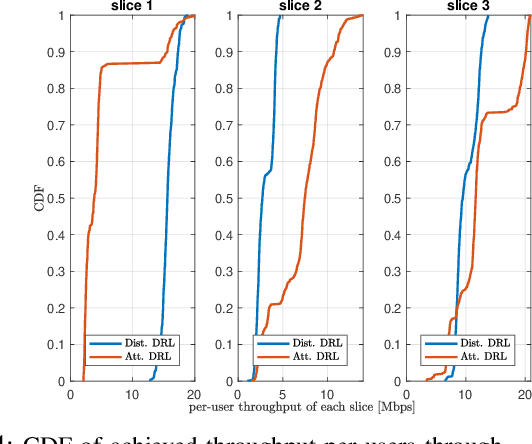

As emerging networks such as Open Radio Access Networks (O-RAN) and 5G continue to grow, the demand for various services with different requirements is increasing. Network slicing has emerged as a potential solution to address the different service requirements. However, managing network slices while maintaining quality of services (QoS) in dynamic environments is a challenging task. Utilizing machine learning (ML) approaches for optimal control of dynamic networks can enhance network performance by preventing Service Level Agreement (SLA) violations. This is critical for dependable decision-making and satisfying the needs of emerging networks. Although RL-based control methods are effective for real-time monitoring and controlling network QoS, generalization is necessary to improve decision-making reliability. This paper introduces an innovative attention-based deep RL (ADRL) technique that leverages the O-RAN disaggregated modules and distributed agent cooperation to achieve better performance through effective information extraction and implementing generalization. The proposed method introduces a value-attention network between distributed agents to enable reliable and optimal decision-making. Simulation results demonstrate significant improvements in network performance compared to other DRL baseline methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge