Attack on Multi-Node Attention for Object Detection


Aug 16, 2020

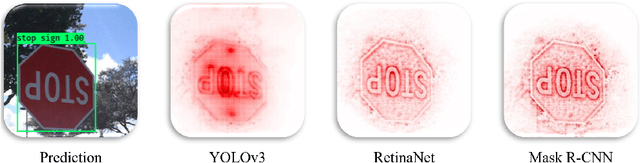

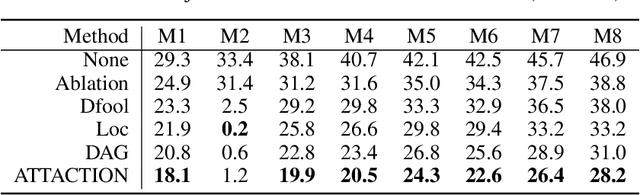

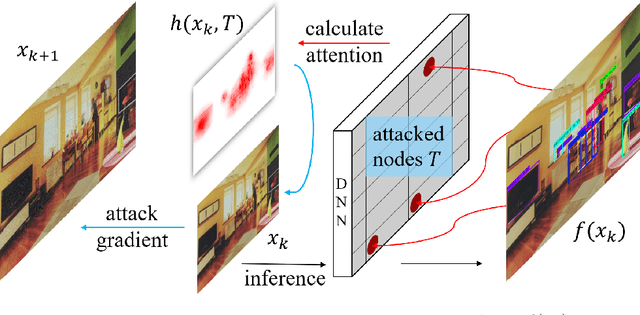

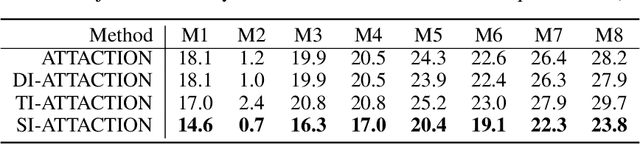

This paper focuses on highly transferable adversarial attacks on detection networks, which are crucial components of safety-critical systems such as autonomous driving and security surveillance. Detection networks are hard to attack in a black-box manner because they produce multiple outputs and differ widely in architecture. To achieve high attack transferability, one needs to find a common property shared by different models. The multi-node attention heat map obtained by our newly proposed method is such a property. Based on it, we design the ATTACk on multi-node attenTION for object detecTION (ATTACTION). ATTACTION achieves state-of-the-art transferability in numerical experiments. On MS COCO, the detection mAP of all 7 tested black-box architectures is halved, and the performance of semantic segmentation is also greatly degraded. Given the strong transferability of ATTACTION, we generate Adversarial Objects in COntext (AOCO), the first adversarial dataset for object detection networks, which can help designers quickly evaluate and improve the robustness of detection networks.
