Architecture Disentanglement for Deep Neural Networks

Mar 30, 2020

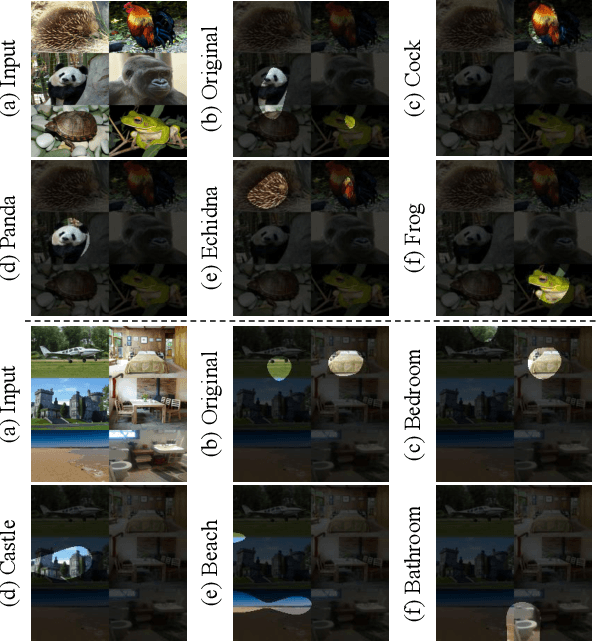

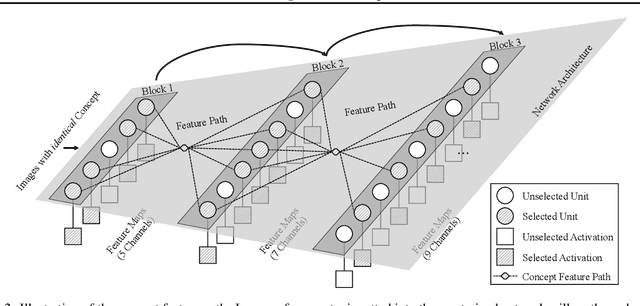

Deep Neural Networks (DNNs) are central to deep learning, and understanding their internal working mechanisms is crucial if they are to be used for emerging applications in medical and industrial AI. To this end, current research typically links semantic concepts to a DNN's units or layers. However, this approach fails to capture the hierarchical inference procedure that runs through the whole network. To address this issue, we introduce the novel concept of Neural Architecture Disentanglement (NAD). Specifically, we disentangle a pre-trained network into hierarchical paths corresponding to specific concepts. These concept feature paths trace how each concept flows from the bottom to the top layers of a DNN. Such paths further enable us to quantify the interpretability of DNNs according to the diversity of human concepts they have learned. We select four types of representative architectures, ranging from handcrafted to AutoML-based, and conduct extensive experiments on object-based and scene-based datasets. NAD sheds light on the information flow of semantic concepts in DNNs and provides a fundamental metric that will facilitate the design of interpretable network architectures. Code will be available at: https://github.com/hujiecpp/NAD.
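To make the idea of a concept feature path concrete, the following is a minimal illustrative sketch and not the NAD algorithm itself, which the abstract does not specify. It assumes that a path can be approximated by keeping, in every layer, the units with the highest mean activation for a given class, and that path diversity can be approximated by a per-layer Jaccard overlap. The function names `concept_paths` and `path_overlap`, the `keep` parameter, and the toy activations are all hypothetical.

```python
import numpy as np


def concept_paths(layer_activations, labels, keep=2):
    """Pick, per class and per layer, the `keep` units with the highest mean activation.

    Assumption for illustration only: a "path" is a list of unit-index sets,
    one per layer. This is a simple stand-in, not the paper's procedure.

    layer_activations: list of arrays, one per layer, each of shape (n_samples, n_units)
    labels: integer class label per sample, shape (n_samples,)
    Returns a dict mapping class -> list of sorted unit indices per layer.
    """
    paths = {}
    for c in np.unique(labels):
        mask = labels == c
        path = []
        for acts in layer_activations:
            mean_act = acts[mask].mean(axis=0)       # mean activation per unit for class c
            top = np.argsort(mean_act)[::-1][:keep]  # indices of the most active units
            path.append(sorted(int(i) for i in top))
        paths[int(c)] = path
    return paths


def path_overlap(path_a, path_b):
    """Mean per-layer Jaccard overlap between two paths (hypothetical diversity proxy)."""
    scores = []
    for a, b in zip(path_a, path_b):
        a, b = set(a), set(b)
        scores.append(len(a & b) / len(a | b))
    return float(np.mean(scores))


# Toy activations for 4 samples (2 per class) in a 2-layer network.
labels = np.array([0, 0, 1, 1])
layer1 = np.array([[5, 4, 0, 0], [6, 3, 0, 1], [0, 0, 7, 2], [1, 0, 5, 3]], dtype=float)
layer2 = np.array([[9, 1, 0], [8, 2, 1], [0, 3, 9], [1, 2, 8]], dtype=float)

paths = concept_paths([layer1, layer2], labels, keep=2)
overlap = path_overlap(paths[0], paths[1])
print(paths)
print(overlap)
```

In this toy setting the two classes use disjoint units in the first layer and share one unit in the second, so a lower overlap corresponds to more distinct paths. Any real analysis would need the disentanglement procedure and metric defined in the paper and its released code.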
