AR: Auto-Repair the Synthetic Data for Neural Machine Translation

Apr 05, 2020

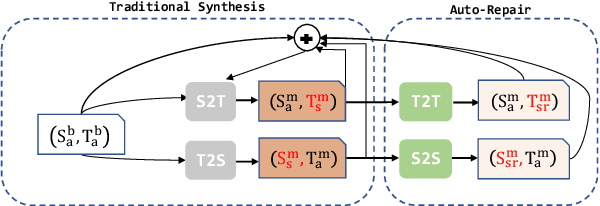

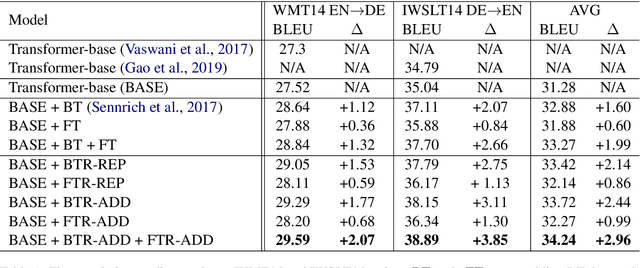

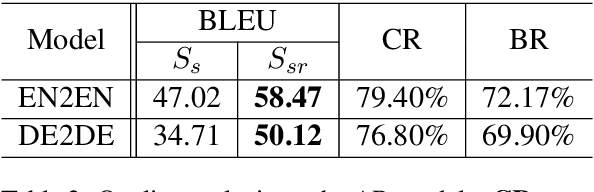

Many studies have shown that, compared with using only limited authentic parallel data as the training corpus, incorporating synthetic parallel data generated by back translation (BT) or forward translation (FT, or self-training) into the NMT training process can significantly improve translation quality. However, a well-known shortcoming is that synthetic parallel data is noisy because it is generated by an imperfect NMT system. As a result, the improvements in translation quality brought by the synthetic parallel data are greatly diminished. In this paper, we propose a novel Auto-Repair (AR) framework to improve the quality of synthetic data. The proposed AR model learns the transformation from a low-quality (noisy) input sentence to a high-quality sentence, based on large-scale monolingual data with BT and FT techniques. The noise in the synthetic parallel data is sufficiently eliminated by the proposed AR model, and the repaired synthetic parallel data then helps NMT models achieve larger improvements. Experimental results show that our approach effectively improves the quality of synthetic parallel data, and that the NMT model trained with the repaired synthetic data achieves consistent improvements on both the WMT14 EN→DE and IWSLT14 DE→EN translation tasks.
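The abstract does not spell out how the noisy-to-clean training pairs for the AR model are built. One plausible reading, sketched below purely as an assumption, is a round trip: each clean monolingual sentence is translated forward and then back, and the degraded result is paired with the original. The names `build_repair_pairs`, `forward`, and `backward` are hypothetical, and the two toy translators are stand-ins for real NMT systems.

```python
from typing import Callable, List, Tuple

# A translator is any function mapping a sentence to a sentence.
# In practice this would wrap an NMT model; here it is a hypothetical stand-in.
Translator = Callable[[str], str]


def build_repair_pairs(
    monolingual: List[str],
    forward: Translator,
    backward: Translator,
) -> List[Tuple[str, str]]:
    """Return (noisy, clean) pairs via a round trip through two translators.

    Assumption: the round-trip output approximates the noise an imperfect
    NMT system introduces, so a repair model can be trained noisy -> clean.
    """
    pairs = []
    for clean in monolingual:
        noisy = backward(forward(clean))  # round-trip translation
        pairs.append((noisy, clean))
    return pairs


# Toy translators that only simulate lossy translation (not real NMT).
def toy_forward(sentence: str) -> str:
    return sentence.upper()


def toy_backward(sentence: str) -> str:
    # Lowercase again, but drop the article to mimic translation noise.
    return sentence.lower().replace("the ", "")


pairs = build_repair_pairs(["the cat sat", "hello"], toy_forward, toy_backward)
```

A sequence-to-sequence model trained on such pairs could then be applied to the source or target side of BT or FT output before NMT training; how the paper actually trains and applies AR is not stated in the abstract.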
