Application of Homomorphic Encryption in Medical Imaging


Oct 12, 2021

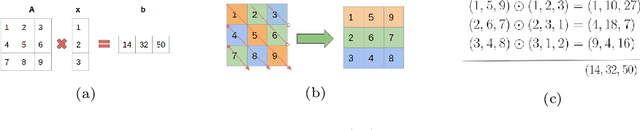

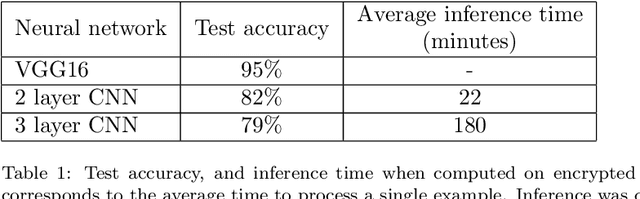

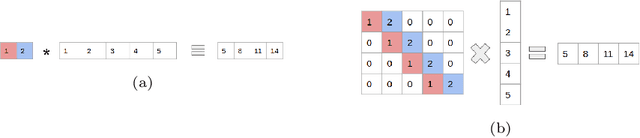

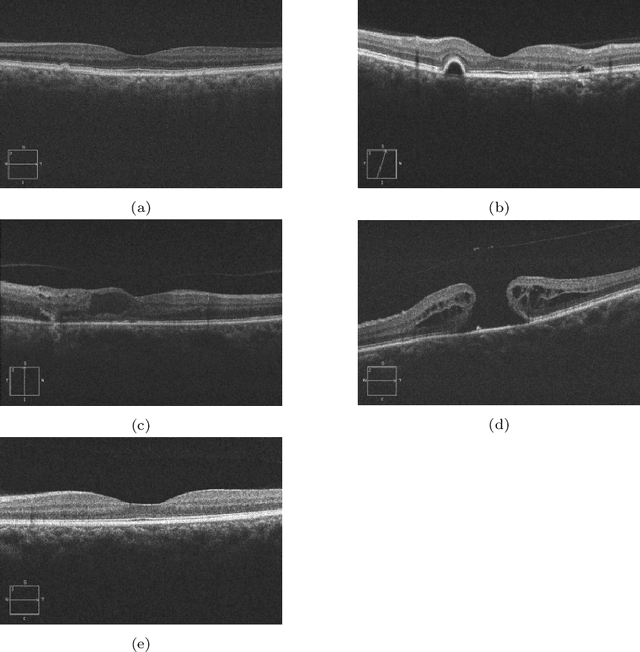

In this technical report, we explore the use of homomorphic encryption (HE) for training deep learning (DL) models and running predictions with them, in order to deliver strict *Privacy by Design* services and to enforce a zero-trust model of data governance. First, we show how HE can be used to make predictions over medical images while preventing unauthorized secondary use of data, and detail our results on a disease classification task with OCT images. Then, we demonstrate that HE can be used to secure the training of DL models through federated learning, and report experiments on a nodule detection task using 3D chest CT scans.
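To make the federated-learning idea concrete, here is a minimal sketch of encrypted aggregation of model updates. The abstract does not say which HE scheme the report uses, so this example swaps in the textbook Paillier cryptosystem, which is additively homomorphic only. It is an illustration, not the authors' implementation. The helper names (`keygen`, `encrypt`, `decrypt`, `aggregate`) are hypothetical, the updates are assumed to be already quantized to integers, and the two small Mersenne primes are far too small to be secure.

```python
import math
import random

def keygen(p=2**31 - 1, q=2**61 - 1):
    """Toy Paillier key pair from two known (insecurely small) primes."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # valid because the generator is g = n + 1
    return n, (lam, mu)

def encrypt(n, m):
    """Encrypt integer m (negative values are stored modulo n)."""
    n2 = n * n
    r = random.randrange(1, n)  # coprime to n with overwhelming probability
    return ((1 + (m % n) * n) % n2) * pow(r, n, n2) % n2

def decrypt(n, priv, c):
    """Decrypt and map the result back to a signed integer."""
    lam, mu = priv
    n2 = n * n
    m = ((pow(c, lam, n2) - 1) // n) * mu % n
    return m - n if m > n // 2 else m

def aggregate(n, encrypted_updates):
    """Server side: multiplying ciphertexts adds the hidden plaintexts."""
    n2 = n * n
    total = list(encrypted_updates[0])
    for update in encrypted_updates[1:]:
        total = [a * b % n2 for a, b in zip(total, update)]
    return total

# Three hypothetical clients, each holding an integer-quantized update.
n, priv = keygen()
client_updates = [[3, -1, 4], [1, 5, -9], [2, 6, 5]]
encrypted = [[encrypt(n, w) for w in update] for update in client_updates]

# The server combines ciphertexts without ever seeing a plaintext update.
encrypted_sum = aggregate(n, encrypted)

# Only the private-key holder can recover the aggregated update.
summed = [decrypt(n, priv, c) for c in encrypted_sum]
print(summed)
```

In this sketch the aggregating server only ever handles ciphertexts, which is the property that supports a zero-trust arrangement. Running predictions over encrypted images also requires multiplications on encrypted data, which Paillier does not support between ciphertexts, so that use case would need a different scheme.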
