Anomaly Detection for Scalable Task Grouping in Reinforcement Learning-based RAN Optimization

Paper and Code

Dec 06, 2023

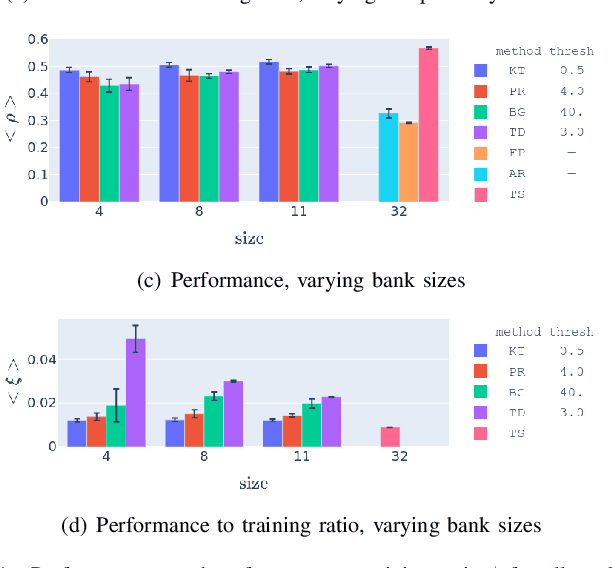

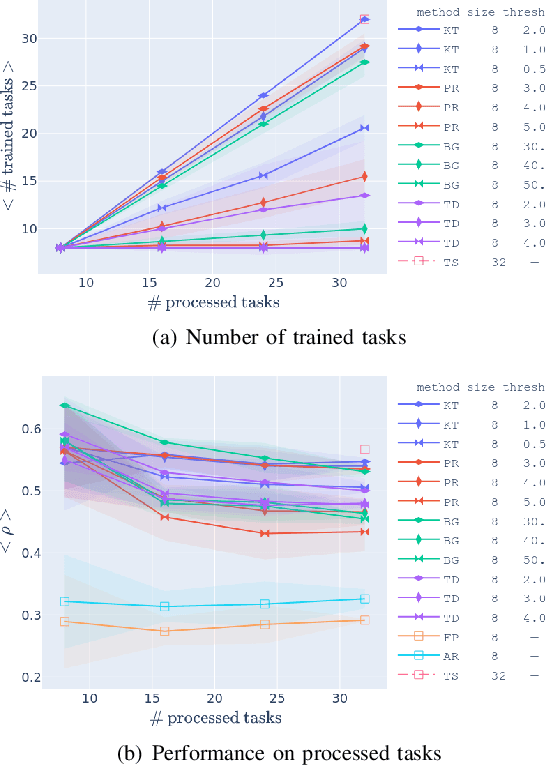

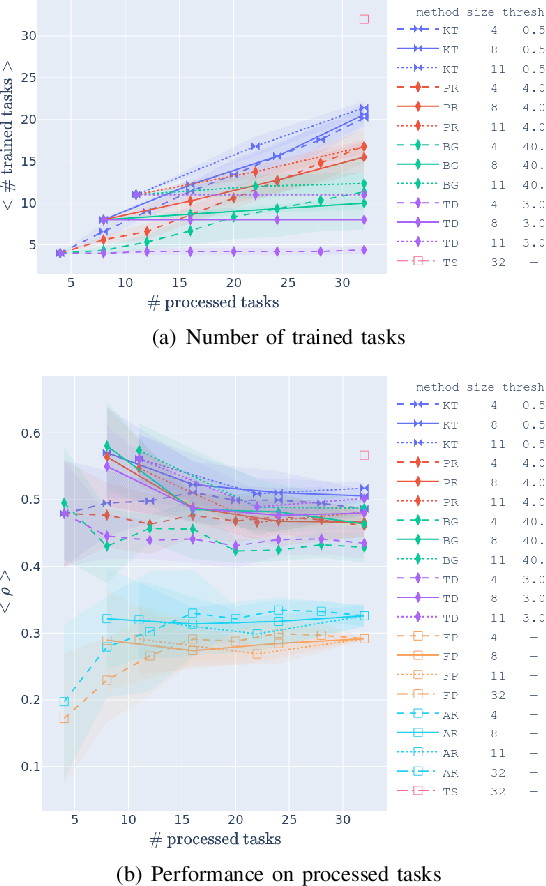

The use of learning-based methods for optimizing cellular radio access networks (RAN) has received increasing attention in recent years. This coincides with a rapid increase in the number of cell sites worldwide, driven largely by dramatic growth in cellular network traffic. Training and maintaining learned models that work well across a large number of cell sites has thus become a pertinent problem. This paper proposes a scalable framework for constructing a reinforcement learning policy bank that can perform RAN optimization across a large number of cell sites with varying traffic patterns. Central to our framework is a novel application of anomaly detection techniques to assess the compatibility between sites (tasks) and the policy bank. This allows our framework to intelligently identify when a policy can be reused for a task, and when a new policy needs to be trained and added to the policy bank. Our results show that our approach to compatibility assessment leads to an efficient use of computational resources, by allowing us to construct a performant policy bank without exhaustively training on all tasks, which makes it applicable under real-world constraints.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge