An Unsupervised Dialogue Topic Segmentation Model Based on Utterance Rewriting


Sep 12, 2024

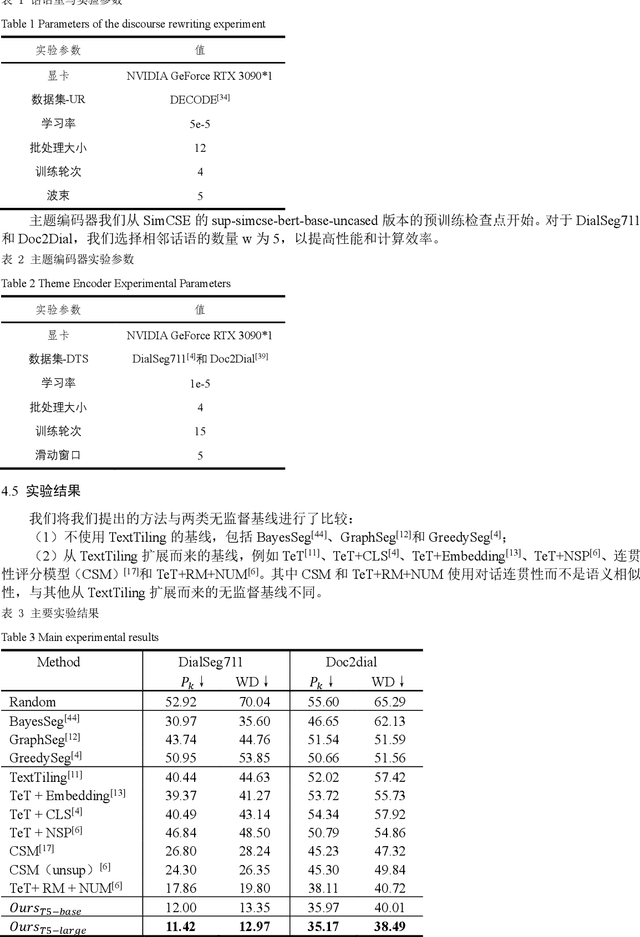

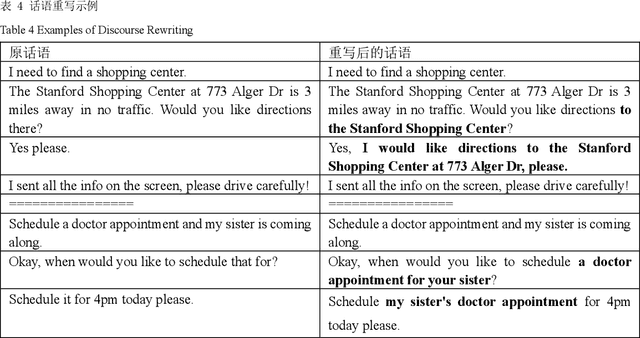

Dialogue topic segmentation (DTS) plays a crucial role in many dialogue modeling tasks. State-of-the-art unsupervised DTS methods learn topic-aware utterance representations from conversation data through adjacent utterance matching and pseudo-segmentation, mining useful clues from unlabeled conversational relations. However, in multi-turn dialogues, utterances often contain co-references or omissions, so using them directly for representation learning can degrade the semantic similarity computation in the adjacent utterance matching task. To make full use of the cues in conversational relations, this study proposes a novel unsupervised dialogue topic segmentation method that combines the Utterance Rewriting (UR) technique with an unsupervised learning algorithm: dialogues are rewritten to recover co-referents and omitted words, so that the useful cues in unlabeled dialogues can be exploited efficiently. Compared with existing unsupervised models, the proposed Utterance Rewriting Topic Segmentation model (UR-DTS) significantly improves the accuracy of topic segmentation. The main finding is that on DialSeg711, performance improves by about 6% in both absolute error score and WD, reaching 11.42% absolute error score and 12.97% WD. On Doc2Dial, the absolute error score and WD improve by about 3% and 2%, respectively, setting a new state of the art of 35.17% absolute error score and 38.49% WD. These results show that the model is effective at capturing the nuances of conversational topics, and they illustrate both the usefulness and the challenges of utilizing unlabeled conversations.
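To make the reported WD figures easier to interpret, the following is a minimal sketch of a window-based segmentation error, assuming that WD refers to the WindowDiff metric commonly used in topic segmentation. The function name `window_diff`, the 0/1 boundary encoding, and the choice of window size `k` are illustrative assumptions, not details taken from the paper, and published implementations differ in normalization.

```python
def window_diff(reference, hypothesis, k):
    """Simplified WindowDiff-style error (illustrative, not the paper's code).

    reference, hypothesis: equal-length lists of 0/1, where 1 marks a topic
    boundary after the utterance at that position (assumed encoding).
    k: window size in utterances (assumed to be chosen by the caller).
    Returns the fraction of windows whose boundary counts disagree;
    lower is better.
    """
    if len(reference) != len(hypothesis):
        raise ValueError("reference and hypothesis must have the same length")
    n = len(reference)
    if not 0 < k < n:
        raise ValueError("k must satisfy 0 < k < number of utterances")

    errors = 0
    for i in range(n - k):
        # Count boundaries inside the window in each segmentation.
        ref_count = sum(reference[i:i + k])
        hyp_count = sum(hypothesis[i:i + k])
        if ref_count != hyp_count:
            errors += 1
    return errors / (n - k)


if __name__ == "__main__":
    ref = [0, 1, 0, 0, 1, 0]
    hyp = [0, 0, 1, 0, 1, 0]  # first boundary predicted one utterance late
    print(window_diff(ref, hyp, k=2))
```

Because the score is an error rate, the improvements quoted in the abstract correspond to lower values, and a near-miss boundary is penalized only in the windows where the boundary counts differ.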
