An End-to-end Food Portion Estimation Framework Based on Shape Reconstruction from Monocular Image

Paper and Code

Aug 03, 2023

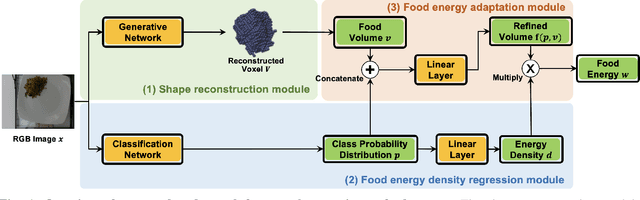

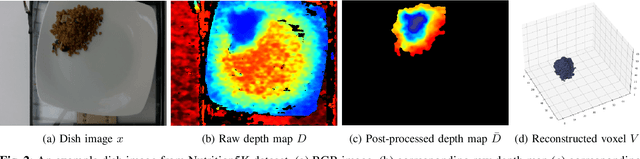

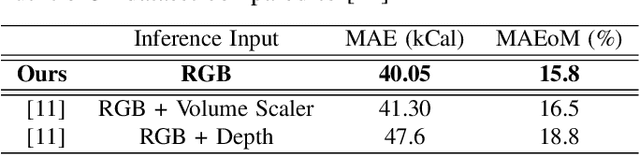

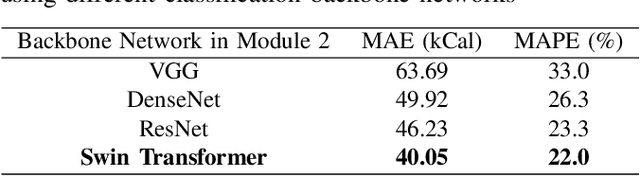

Dietary assessment is a key contributor to monitoring health status. Existing self-report methods are tedious and time-consuming with substantial biases and errors. Image-based food portion estimation aims to estimate food energy values directly from food images, showing great potential for automated dietary assessment solutions. Existing image-based methods either use a single-view image or incorporate multi-view images and depth information to estimate the food energy, which either has limited performance or creates user burdens. In this paper, we propose an end-to-end deep learning framework for food energy estimation from a monocular image through 3D shape reconstruction. We leverage a generative model to reconstruct the voxel representation of the food object from the input image to recover the missing 3D information. Our method is evaluated on a publicly available food image dataset Nutrition5k, resulting a Mean Absolute Error (MAE) of 40.05 kCal and Mean Absolute Percentage Error (MAPE) of 11.47% for food energy estimation. Our method uses RGB image as the only input at the inference stage and achieves competitive results compared to the existing method requiring both RGB and depth information.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge