An Anatomy of Graph Neural Networks Going Deep via the Lens of Mutual Information: Exponential Decay vs. Full Preservation

Oct 22, 2019

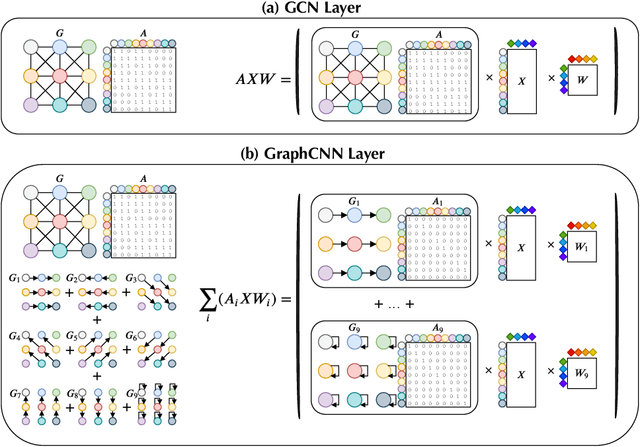

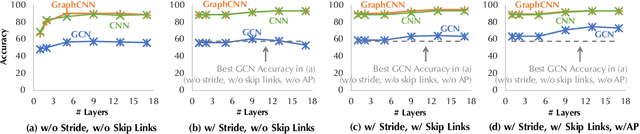

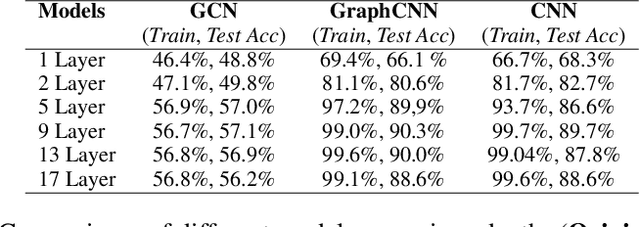

Graph Convolutional Networks (GCNs) have attracted intense interest recently. One major limitation of GCNs is that they often cannot benefit from a deep architecture, while traditional CNNs and an alternative graph neural network architecture, namely GraphCNN, often achieve better quality with deeper architectures. How can we explain this phenomenon? In this paper, we take the first step towards answering this question. We first conduct a systematic empirical study of the accuracy of GCN, GraphCNN, and ResNet-18 on 2D images and identify the relative importance of different factors in architectural design. This inspires a novel theoretical analysis of the mutual information between the input and the output after $l$ GCN and GraphCNN layers. We identify regimes in which GCN suffers exponentially fast information loss and show that GraphCNN requires a much weaker condition for similar behavior to happen.
