An Alert-Generation Framework for Improving Resiliency in Human-Supervised, Multi-Agent Teams

Paper and Code

Sep 13, 2019

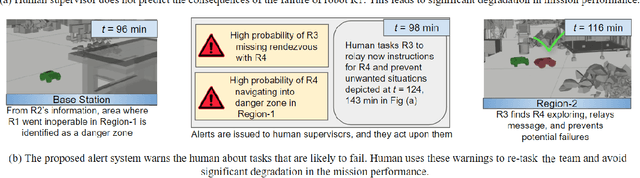

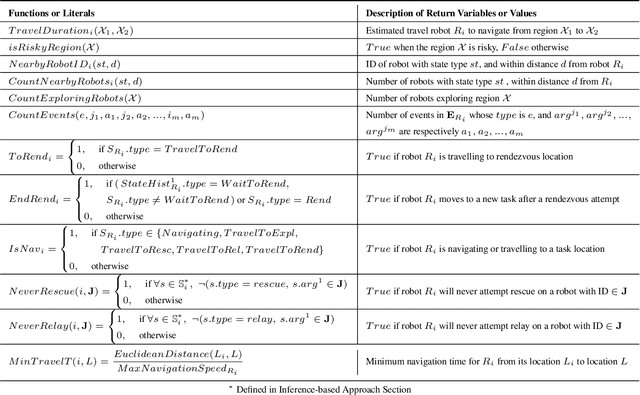

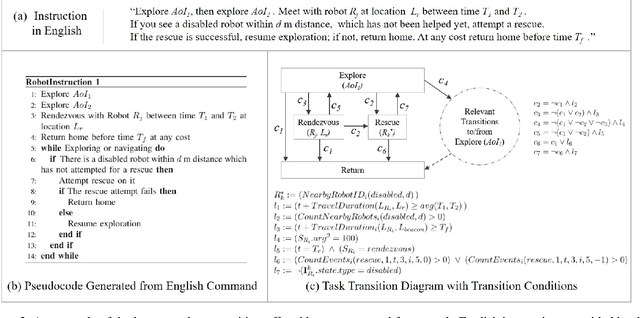

Human supervision in multi-agent teams is critical to ensuring that the decision-maker's risk preferences are used when assigning tasks to robots. In stressful, complex missions that pose a risk to human health and life, such as humanitarian-assistance and disaster-relief missions, human mistakes or delays in tasking robots can adversely affect the mission. To assist human decision making in such missions, we present an alert-generation framework capable of detecting various modes of potential failure or performance degradation. We demonstrate that our framework, based on state-machine simulation and formal methods, offers probabilistic modeling to estimate the likelihood of unfavorable events. We introduce smart simulation, which offers a computationally efficient way of detecting low-probability situations compared to standard Monte Carlo simulation. Moreover, for a certain class of problems, our inference-based method can provide guarantees on correctly detecting task failures.
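To make the baseline concrete, the following is a minimal sketch of estimating a failure likelihood for a state-machine task model in two ways: by standard Monte Carlo simulation and by exact inference on the same model. It is not the paper's smart-simulation method or its framework. The state names, transition probabilities, and function names are hypothetical and chosen only for illustration.

```python
import random

# Hypothetical per-step transition probabilities for one robot's task.
# State names and numbers are illustrative assumptions, not from the paper.
TRANSITIONS = {
    "en_route": [("en_route", 0.70), ("working", 0.29), ("failed", 0.01)],
    "working": [("working", 0.80), ("done", 0.19), ("failed", 0.01)],
}
ABSORBING = {"done", "failed"}


def simulate_once(rng, start="en_route"):
    """Run the state machine until it reaches an absorbing state."""
    state = start
    while state not in ABSORBING:
        next_states, weights = zip(*TRANSITIONS[state])
        state = rng.choices(next_states, weights=weights)[0]
    return state


def monte_carlo_failure_probability(num_runs, seed=0):
    """Estimate P(task ends in 'failed') as the fraction of failed runs."""
    rng = random.Random(seed)
    failures = sum(simulate_once(rng) == "failed" for _ in range(num_runs))
    return failures / num_runs


def exact_failure_probability(start="en_route", sweeps=2000):
    """Solve p(s) = sum_t P(s, t) * p(t) by repeated in-place updates."""
    p = {state: 0.0 for state in TRANSITIONS}
    p["failed"], p["done"] = 1.0, 0.0
    for _ in range(sweeps):
        for state, edges in TRANSITIONS.items():
            p[state] = sum(prob * p[nxt] for nxt, prob in edges)
    return p[start]


if __name__ == "__main__":
    print("Monte Carlo estimate:", monte_carlo_failure_probability(20_000))
    print("Exact value:         ", exact_failure_probability())
```

The sampling error of the Monte Carlo estimate shrinks only with the square root of the number of runs, so the rarer the event, the more runs are needed to observe it at all. That cost is what motivates more efficient alternatives for low-probability situations.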
