AgentMixer: Multi-Agent Correlated Policy Factorization


Jan 16, 2024

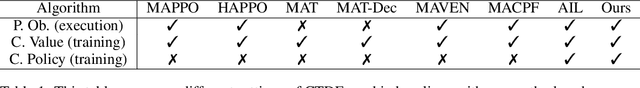

Centralized training with decentralized execution (CTDE) is widely employed to stabilize partially observable multi-agent reinforcement learning (MARL) by utilizing a centralized value function during training. However, existing methods typically assume that agents make decisions based on their local observations independently, which may not lead to a correlated joint policy with sufficient coordination. Inspired by the concept of correlated equilibrium, we propose to introduce a *strategy modification* to provide a mechanism for agents to correlate their policies. Specifically, we present a novel framework, AgentMixer, which constructs the joint fully observable policy as a non-linear combination of individual partially observable policies. To enable decentralized execution, one can derive individual policies by imitating the joint policy. Unfortunately, such imitation learning can lead to *asymmetric learning failure* caused by the mismatch between joint policy and individual policy information. To mitigate this issue, we jointly train the joint policy and individual policies and introduce *Individual-Global-Consistency* to guarantee mode consistency between the centralized and decentralized policies. We then theoretically prove that AgentMixer converges to an $\epsilon$-approximate Correlated Equilibrium. The strong experimental performance on three MARL benchmarks demonstrates the effectiveness of our method.
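The abstract does not spell out how Individual-Global-Consistency is defined, so the following is only a minimal sketch of one plausible reading of "mode consistency": the most likely joint action under the centralized policy should coincide with the tuple of each agent's most likely action under its own decentralized policy. The function names, the dictionary representation of the joint policy, and the toy probabilities are all assumptions made for illustration, not the paper's implementation.

```python
from itertools import product


def individual_modes(individual_policies):
    """Greedy action of each agent under its own (decentralized) policy.

    Each policy is a list of action probabilities for one agent.
    """
    return tuple(max(range(len(p)), key=p.__getitem__) for p in individual_policies)


def joint_mode(joint_policy):
    """Greedy joint action under the centralized policy.

    The joint policy is a dict mapping a tuple of actions to its probability.
    """
    return max(joint_policy, key=joint_policy.get)


def is_mode_consistent(joint_policy, individual_policies):
    """Assumed consistency check: joint mode equals the tuple of individual modes."""
    return joint_mode(joint_policy) == individual_modes(individual_policies)


# Toy setting (hypothetical numbers): two agents, two actions each.
pi_1 = [0.7, 0.3]
pi_2 = [0.4, 0.6]

# A joint policy built as the product of the individual policies.
independent_joint = {
    (a1, a2): pi_1[a1] * pi_2[a2] for a1, a2 in product(range(2), range(2))
}

# A correlated joint policy that concentrates mass on matching actions.
# It is not derived from pi_1 and pi_2; it only exercises the check.
correlated_joint = {(0, 0): 0.5, (0, 1): 0.05, (1, 0): 0.05, (1, 1): 0.4}

print(is_mode_consistent(independent_joint, [pi_1, pi_2]))
print(is_mode_consistent(correlated_joint, [pi_1, pi_2]))
```

In this toy case the product policy passes the check, while the correlated policy prefers the joint action (0, 0) even though agent 2 alone prefers action 1. A mismatch of this kind is what a consistency constraint between the centralized and decentralized policies would be meant to rule out.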
