AFD-Net: Adaptive Fully-Dual Network for Few-Shot Object Detection


Nov 30, 2020

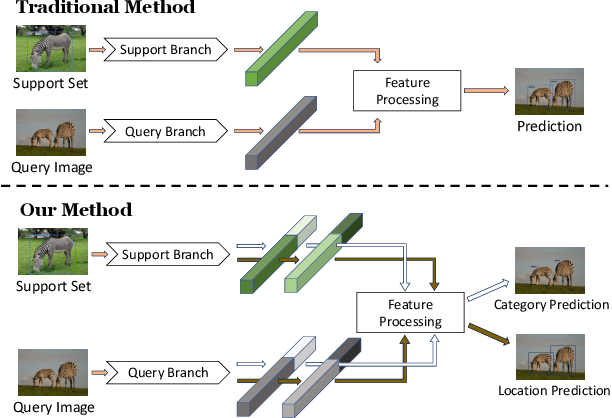

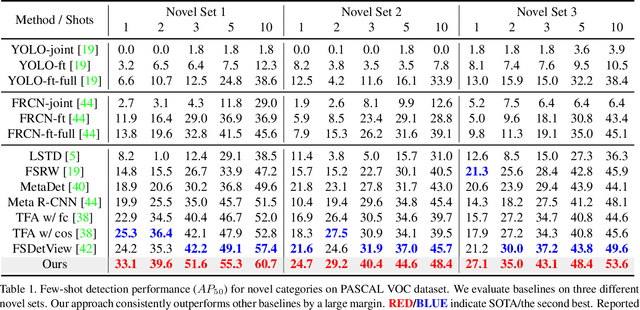

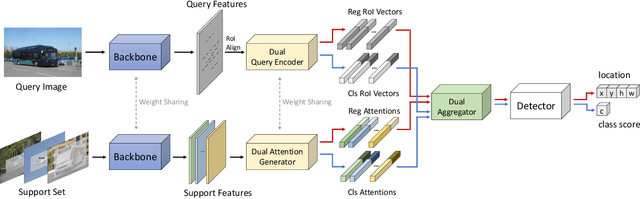

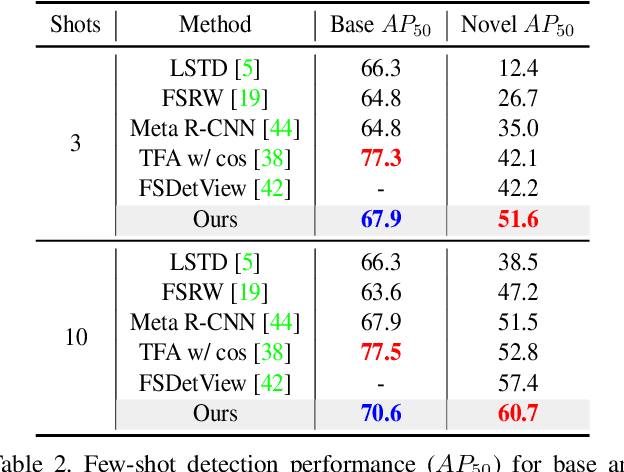

Few-shot object detection (FSOD) aims to learn a detector that can quickly adapt to previously unseen objects given only a few annotated examples, which is a challenging and demanding task. Existing methods address this problem by performing the classification and localization subtasks with a shared component (e.g., the RoI head) in a detector, yet few of them take into account that the two subtasks prefer different embedding spaces. In this paper, we carefully analyze the characteristics of FSOD and argue that a general few-shot detector should explicitly decompose the two subtasks and leverage information from both to enhance feature representations. To this end, we propose a simple yet effective Adaptive Fully-Dual Network (AFD-Net). Specifically, we extend Faster R-CNN by introducing a Dual Query Encoder and a Dual Attention Generator for separate feature extraction, and a Dual Aggregator for separate model reweighting. Separate decision making then follows naturally in the R-CNN detector. In addition, to obtain enhanced feature representations, we introduce an Adaptive Fusion Mechanism that adaptively performs feature fusion suited to each specific subtask. Extensive experiments on PASCAL VOC and MS COCO in various settings show that our method achieves new state-of-the-art performance by a large margin, demonstrating its effectiveness and generalization ability.
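The dual-branch idea in the abstract can be illustrated with a small toy sketch. It is not the authors' implementation: the abstract does not specify layer types, the fusion rule, or the aggregation operator, so the code assumes bias-free linear encoders, a sigmoid-gated blend of two candidate embeddings as the "adaptive fusion", and a channel-wise product as the "reweighting". All names (`dual_forward`, `make_params`, `gate_logit`, and so on) are hypothetical, and random weights stand in for learned ones.

```python
import math
import random


def linear(weights, x):
    """Dense layer without bias: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def reweight(query, attention):
    """Channel-wise product of a query feature and a class attention vector."""
    return [q * a for q, a in zip(query, attention)]


def adaptive_fuse(feat_a, feat_b, gate_logit):
    """Blend two features with a sigmoid gate (assumed fusion rule)."""
    gate = 1.0 / (1.0 + math.exp(-gate_logit))
    return [gate * a + (1.0 - gate) * b for a, b in zip(feat_a, feat_b)]


def random_matrix(rows, cols, rng):
    """Random stand-in for a learned weight matrix."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def make_params(in_dim, out_dim, rng):
    """Build one independent parameter set per subtask (the 'dual' part)."""
    return {
        task: {
            "query_enc_a": random_matrix(out_dim, in_dim, rng),
            "query_enc_b": random_matrix(out_dim, in_dim, rng),
            "attention_gen": random_matrix(out_dim, in_dim, rng),
            "gate_logit": rng.uniform(-1.0, 1.0),
        }
        for task in ("cls", "loc")
    }


def dual_forward(roi_feature, support_feature, params):
    """Compute separate classification and localization features for one RoI."""
    outputs = {}
    for task in ("cls", "loc"):
        p = params[task]
        # Separate query encoding: two candidate embeddings of the same RoI.
        query_a = linear(p["query_enc_a"], roi_feature)
        query_b = linear(p["query_enc_b"], roi_feature)
        # Adaptive fusion: each subtask mixes the candidates with its own gate.
        fused = adaptive_fuse(query_a, query_b, p["gate_logit"])
        # Separate attention generation from the support (few-shot) feature.
        attention = linear(p["attention_gen"], support_feature)
        # Separate aggregation: reweight the fused query by the attention.
        outputs[task] = reweight(fused, attention)
    return outputs


# Toy usage with made-up 4-dimensional inputs and 3-dimensional embeddings.
rng = random.Random(0)
params = make_params(in_dim=4, out_dim=3, rng=rng)
roi_feature = [0.5, -1.0, 2.0, 0.1]
support_feature = [1.0, 0.3, -0.7, 0.9]
features = dual_forward(roi_feature, support_feature, params)
```

In this sketch the two subtasks never share parameters after the common RoI feature, so `features["cls"]` and `features["loc"]` can settle into different embedding spaces, which is the decomposition the abstract argues for. A real detector would feed the two vectors to a classifier and a box regressor respectively.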
