Adversary-resilient Inference and Machine Learning: From Distributed to Decentralized


Aug 23, 2019

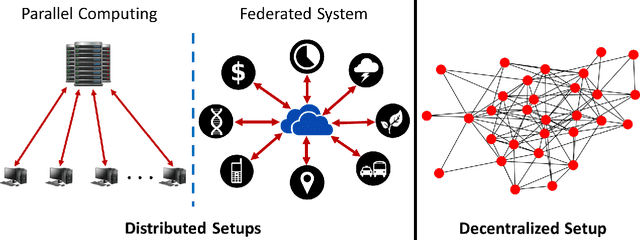

While the last few decades have witnessed a huge body of work devoted to inference and learning in distributed and decentralized setups, much of this work assumes a non-adversarial setting in which individual nodes (apart from occasional statistical failures) operate as intended within the algorithmic framework. In recent years, however, cybersecurity threats from malicious non-state actors and rogue nations have forced practitioners and researchers to rethink the robustness of distributed and decentralized algorithms against adversarial attacks. As a result, we now have a plethora of algorithmic approaches that guarantee robustness of distributed and/or decentralized inference and learning under different adversarial threat models. Driven in part by the world's growing appetite for data-driven decision making, however, securing distributed and decentralized frameworks for inference and learning against adversarial threats remains a rapidly evolving research area. In this article, we provide an overview of some of the most recent developments in this area under the threat model of Byzantine attacks.
