Adversarial Task-Specific Privacy Preservation under Attribute Attack

Jun 19, 2019

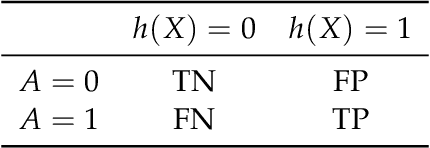

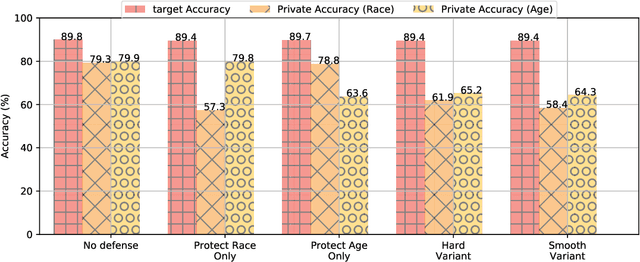

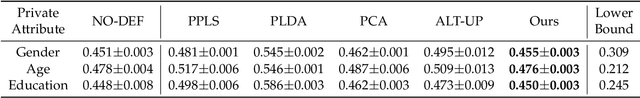

With the prevalence of machine learning services, crowdsourced data containing sensitive information poses substantial privacy challenges. Existing work that protects against membership inference attacks under the rigorous notion of differential privacy remains susceptible to attribute inference attacks. In this paper, we develop a theoretical framework for task-specific privacy under attribute inference attacks. Under this framework, we propose a minimax optimization formulation, together with a practical algorithm, that protects a given attribute while preserving utility. We also extend the formulation so that multiple attributes can be protected simultaneously. Theoretically, we prove an information-theoretic lower bound that characterizes the inherent tradeoff between utility and privacy when they are correlated. Empirically, we conduct experiments on real-world tasks that demonstrate the effectiveness of our method compared with state-of-the-art baseline approaches.
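
As a rough illustration of what a minimax formulation for attribute protection can look like, the sketch below writes a generic adversarial objective. All symbols are assumptions introduced here for illustration and do not come from the abstract: a feature encoder $f$, a task predictor $h$, an adversary $a$, input $X$, task label $Y$, sensitive attribute $A$, a trade-off weight $\lambda \ge 0$, and the two loss functions. The paper's exact objective may differ.

```latex
% Hypothetical generic form of an adversarial privacy objective (not quoted from the paper).
% The encoder f and predictor h minimize the task loss; the adversary a tries to
% infer the sensitive attribute A from the representation f(X).
\min_{f,\,h}\ \max_{a}\quad
  \mathbb{E}\big[\ell_{\mathrm{task}}\big(h(f(X)),\, Y\big)\big]
  \;-\; \lambda\, \mathbb{E}\big[\ell_{\mathrm{adv}}\big(a(f(X)),\, A\big)\big]
```

In this sketch the inner maximization over $a$ is equivalent to the adversary minimizing its own loss on $A$, while the outer minimization keeps the task loss low and pushes the adversary's loss up. Larger $\lambda$ weights privacy more heavily relative to utility. One hypothetical way to cover several protected attributes would be to add one such adversarial term per attribute.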
