Adversarial Subword Regularization for Robust Neural Machine Translation

Apr 29, 2020

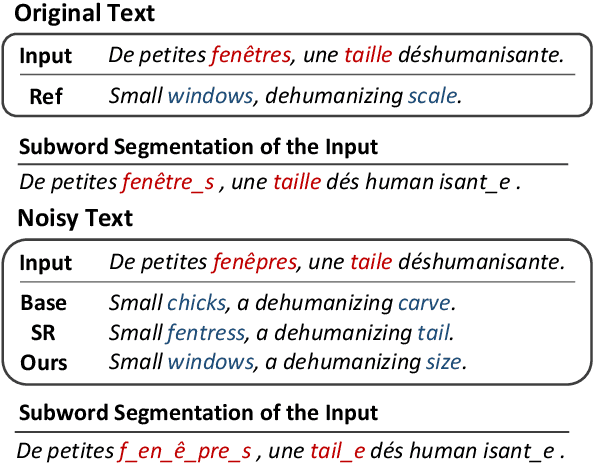

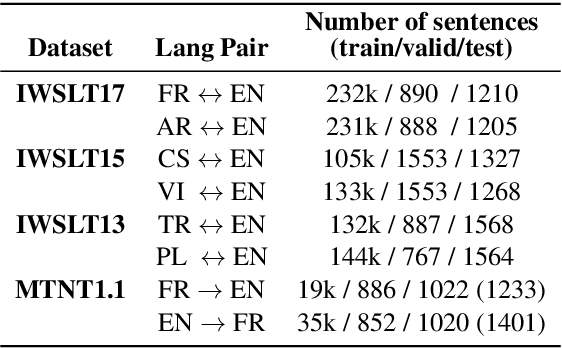

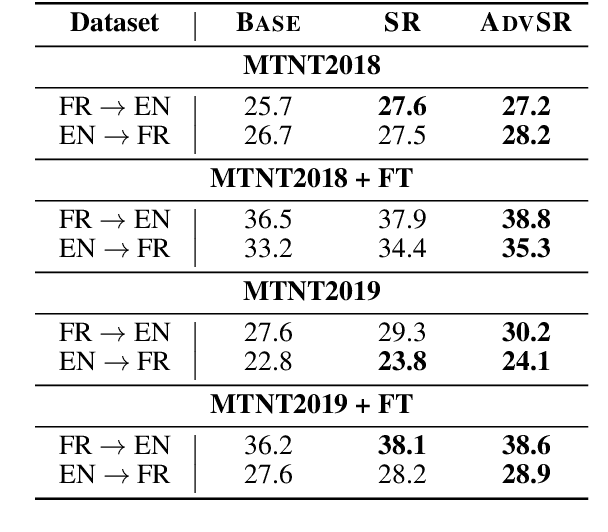

Exposing neural machine translation (NMT) models to diverse subword segmentations often improves translation robustness: as the models see varied subword candidates during training, they become more robust to segmentation errors. However, the segmentations are sampled from a subword language model, which is unlikely to produce the erroneous segmentations that arise for unseen words. In this paper, we present adversarial subword regularization (ADVSR) to study whether gradient signals during training can serve as an alternative criterion for choosing a segmentation among candidates. We show experimentally that our model-based adversarial samples make NMT models less sensitive to segmentation errors and improve their robustness on low-resource datasets.
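
As a rough illustration of what "choosing a segmentation among candidates" by a model-based criterion can look like, the sketch below enumerates the segmentations of a word under a toy vocabulary and picks the one a scoring function rates as hardest. It is not the authors' algorithm: the names `candidate_segmentations` and `pick_adversarial`, the vocabulary, and the piece-count score standing in for a model's loss are all assumptions made for this example. In particular, it does not reproduce the gradient-based selection the abstract refers to.

```python
from typing import Callable, List, Set


def candidate_segmentations(word: str, vocab: Set[str]) -> List[List[str]]:
    """Enumerate every split of `word` whose pieces all belong to `vocab`."""
    if not word:
        return [[]]  # one way to segment the empty string: no pieces
    results: List[List[str]] = []
    for end in range(1, len(word) + 1):
        piece = word[:end]
        if piece in vocab:
            for rest in candidate_segmentations(word[end:], vocab):
                results.append([piece] + rest)
    return results


def pick_adversarial(
    candidates: List[List[str]],
    loss_fn: Callable[[List[str]], float],
) -> List[str]:
    """Return the candidate with the highest loss (the 'hardest' segmentation)."""
    return max(candidates, key=loss_fn)


# Hypothetical toy vocabulary; a real system would use a learned subword vocabulary.
vocab = {"unseen", "un", "seen", "u", "n", "s", "e"}
candidates = candidate_segmentations("unseen", vocab)

# Stand-in for a model loss: more fragmented segmentations count as "harder".
# A real setup would score candidates with the NMT model itself.
worst = pick_adversarial(candidates, loss_fn=len)
print(worst)
```

A sampling-based regularizer would instead draw a candidate according to the subword language model's probabilities. Swapping the sampler for a selection rule like `pick_adversarial` is the kind of substitution the abstract describes, with the model's training signal taking the place of the toy score used here.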
