Additive Tree Ensembles: Reasoning About Potential Instances

Paper and Code

Jan 31, 2020

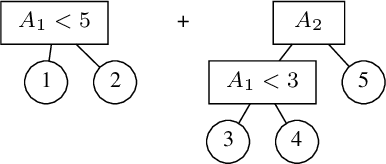

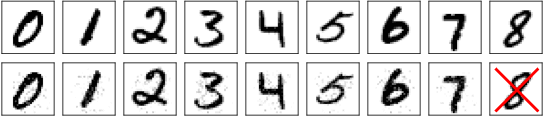

Imagine being able to ask a black-box model questions such as "Which adversarial examples exist?", "Does a specific attribute have a disproportionate effect on the model's prediction?", or "What kind of predictions are possible for a partially described example?" This last question is particularly important if the partial description does not correspond to any observed example in the data, as it provides insight into how the model will extrapolate to unseen data. These capabilities would be extremely helpful, as they would allow a user to better understand the model's behavior, particularly as it relates to issues such as robustness, fairness, and bias. In this paper, we propose such an approach for an ensemble of trees. Since this task is intractable in general, we present a strategy that (1) can prune part of the input space given the question asked, to simplify the problem; and (2) follows a divide-and-conquer approach that is incremental, can always return some answers, and indicates which parts of the input domain are still uncertain. The usefulness of our approach is shown on a diverse set of use cases.
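To make the "partially described example" question concrete, here is a minimal Python sketch. It is not the paper's algorithm: it is a simple interval-bounding illustration that assumes a hypothetical dict-based tree format and half-open feature intervals. All names (`reachable_range`, `ensemble_bounds`, `refine`, the toy features `age` and `income`) are invented for this example. It prunes branches that a partial description rules out, sums per-tree bounds to get a loose outer bound on the ensemble output, and then splits the input box to tighten that bound.

```python
from math import inf

# Hypothetical tree format (an assumption for illustration):
#   leaf:  {"value": v}
#   split: {"feature": name, "threshold": t, "left": ..., "right": ...}
# The left branch is taken when x[feature] < t.
# A "box" maps feature names to half-open intervals [lo, hi);
# features missing from the box are unconstrained.

def reachable_range(tree, box):
    """Return (min, max) leaf value reachable by any input inside box."""
    if "value" in tree:
        return tree["value"], tree["value"]
    f, t = tree["feature"], tree["threshold"]
    lo, hi = box.get(f, (-inf, inf))
    ranges = []
    if lo < t:  # some input in the box goes left; otherwise prune
        ranges.append(reachable_range(tree["left"], {**box, f: (lo, min(hi, t))}))
    if hi > t:  # some input in the box goes right; otherwise prune
        ranges.append(reachable_range(tree["right"], {**box, f: (max(lo, t), hi)}))
    return min(r[0] for r in ranges), max(r[1] for r in ranges)

def ensemble_bounds(trees, box):
    """Outer bound on the additive ensemble output: sum of per-tree ranges."""
    ranges = [reachable_range(tree, box) for tree in trees]
    return sum(r[0] for r in ranges), sum(r[1] for r in ranges)

def refine(trees, box, feature, threshold):
    """Divide step: split the box on one feature and bound each part."""
    lo, hi = box.get(feature, (-inf, inf))
    parts = []
    if lo < threshold:
        parts.append({**box, feature: (lo, min(hi, threshold))})
    if hi > threshold:
        parts.append({**box, feature: (max(lo, threshold), hi)})
    return [(part, ensemble_bounds(trees, part)) for part in parts]

# Toy ensemble of two trees.
trees = [
    {"feature": "age", "threshold": 30,
     "left": {"value": 1.0},
     "right": {"feature": "income", "threshold": 50,
               "left": {"value": 2.0}, "right": {"value": 5.0}}},
    {"feature": "age", "threshold": 30,
     "left": {"value": 3.0}, "right": {"value": -1.0}},
]

print(ensemble_bounds(trees, {}))                   # loose bound, no constraints
print(ensemble_bounds(trees, {"income": (0, 50)}))  # partial description
for part, bounds in refine(trees, {}, "age", 30):   # tighter bounds per sub-box
    print(part, bounds)
```

On this toy ensemble the unconstrained bound is loose because the two trees cannot reach their extreme leaves at the same time. Splitting on `age` removes that slack, which is the intuition behind refining the input domain incrementally and reporting which regions remain uncertain.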
