Adaptive Online Incremental Learning for Evolving Data Streams

Paper and Code

Jan 05, 2022

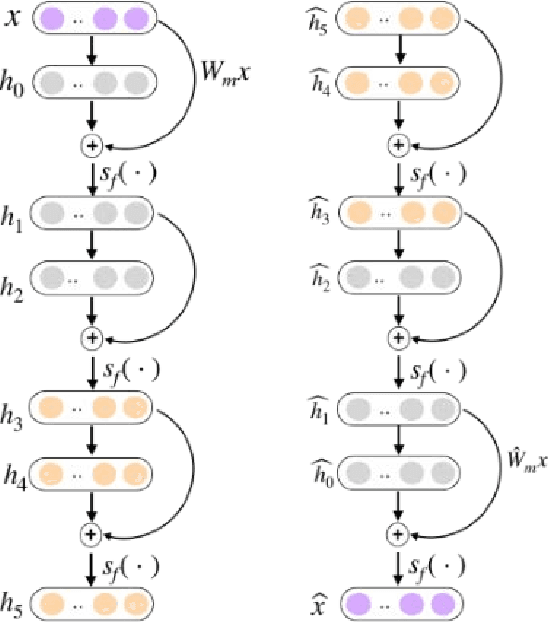

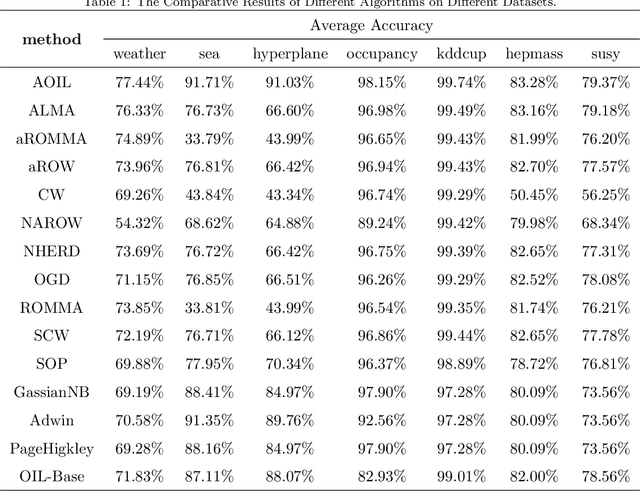

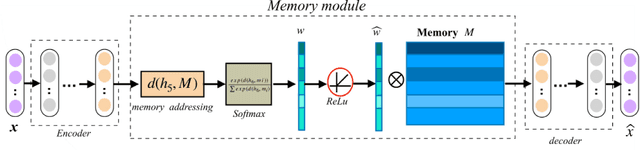

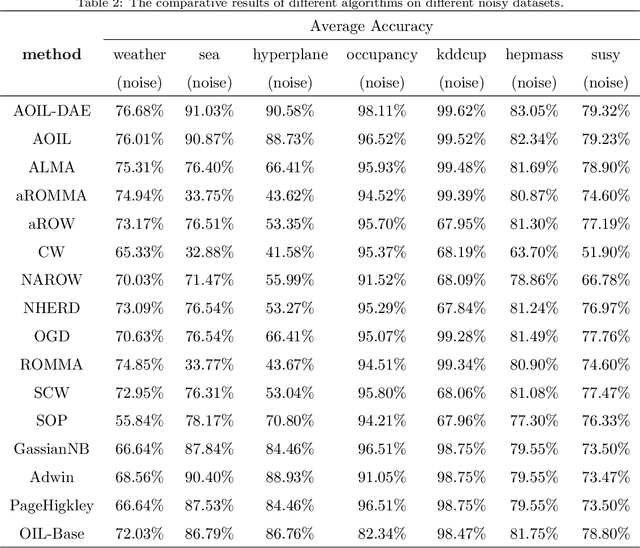

Recent years have witnessed growing interests in online incremental learning. However, there are three major challenges in this area. The first major difficulty is concept drift, that is, the probability distribution in the streaming data would change as the data arrives. The second major difficulty is catastrophic forgetting, that is, forgetting what we have learned before when learning new knowledge. The last one we often ignore is the learning of the latent representation. Only good latent representation can improve the prediction accuracy of the model. Our research builds on this observation and attempts to overcome these difficulties. To this end, we propose an Adaptive Online Incremental Learning for evolving data streams (AOIL). We use auto-encoder with the memory module, on the one hand, we obtained the latent features of the input, on the other hand, according to the reconstruction loss of the auto-encoder with memory module, we could successfully detect the existence of concept drift and trigger the update mechanism, adjust the model parameters in time. In addition, we divide features, which are derived from the activation of the hidden layers, into two parts, which are used to extract the common and private features respectively. By means of this approach, the model could learn the private features of the new coming instances, but do not forget what we have learned in the past (shared features), which reduces the occurrence of catastrophic forgetting. At the same time, to get the fusion feature vector we use the self-attention mechanism to effectively fuse the extracted features, which further improved the latent representation learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge