ACEnet: Anatomical Context-Encoding Network for Neuroanatomy Segmentation


Feb 13, 2020

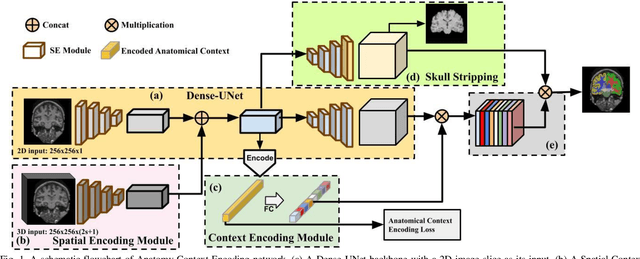

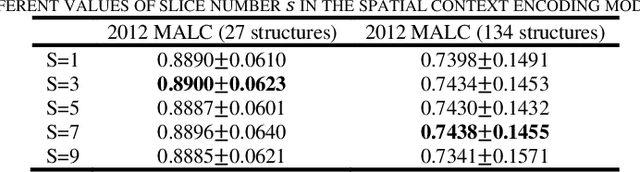

Segmentation of brain structures from magnetic resonance (MR) scans plays an important role in quantifying brain morphology. Because 3D deep learning models incur high computational cost, 2D deep learning methods are often favored for their computational efficiency. However, existing 2D methods are not equipped to effectively capture the 3D spatial context needed for accurate brain structure segmentation. To overcome this limitation, we develop an Anatomical Context-Encoding Network (ACEnet) that incorporates 3D spatial and anatomical context into 2D convolutional neural networks (CNNs) for efficient and accurate segmentation of brain structures from MR scans. ACEnet consists of 1) an anatomical context encoding module that incorporates anatomical information into 2D CNNs, 2) a spatial context encoding module that integrates 3D image information into 2D CNNs, and 3) a skull stripping module that guides 2D CNNs to attend to the brain. Extensive experiments on three benchmark datasets demonstrate that our method outperforms state-of-the-art alternatives for brain structure segmentation in terms of both computational efficiency and segmentation accuracy.
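The abstract does not say how each module is implemented, so the following is only a rough sketch under stated assumptions, not ACEnet's actual code. It illustrates two ingredients that are common in 2D-with-3D-context designs: stacking neighbouring slices as input channels (one plausible reading of "spatial context encoding"), and deriving a per-slice vector recording which structures are present (one plausible training target for "anatomical context encoding"). The function names, the neighbourhood size `k`, and the edge-clamping rule are all hypothetical.

```python
import numpy as np


def stack_adjacent_slices(volume: np.ndarray, index: int, k: int = 1) -> np.ndarray:
    """Return slices index-k .. index+k of a (D, H, W) volume as a (2k+1, H, W) array.

    Hypothetical helper: the 2D CNN would see neighbouring slices as extra
    input channels. Out-of-range neighbours are clamped to the nearest valid
    slice (edge padding), which is an assumed choice.
    """
    depth = volume.shape[0]
    idx = np.clip(np.arange(index - k, index + k + 1), 0, depth - 1)
    return volume[idx]


def structure_presence(label_slice: np.ndarray, num_classes: int) -> np.ndarray:
    """Binary vector whose entry c is 1 if label c occurs in the 2D label slice.

    Hypothetical target for an auxiliary "which structures are in this slice"
    prediction. Labels must be integers in [0, num_classes).
    """
    present = np.zeros(num_classes, dtype=np.float32)
    present[np.unique(label_slice.astype(np.int64))] = 1.0
    return present


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    volume = rng.random((10, 64, 64))                # hypothetical (D, H, W) MR volume
    labels = rng.integers(0, 5, size=(10, 64, 64))   # hypothetical label volume, 5 classes
    x = stack_adjacent_slices(volume, index=0, k=2)
    print(x.shape)  # (5, 64, 64)
    print(structure_presence(labels[0], num_classes=5))
```

Clamping at the volume boundary keeps the number of input channels fixed for every slice, so a single 2D network can process the whole stack; zero-padding would be an equally reasonable alternative.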
