A Question-answering Based Framework for Relation Extraction Validation


Apr 07, 2021

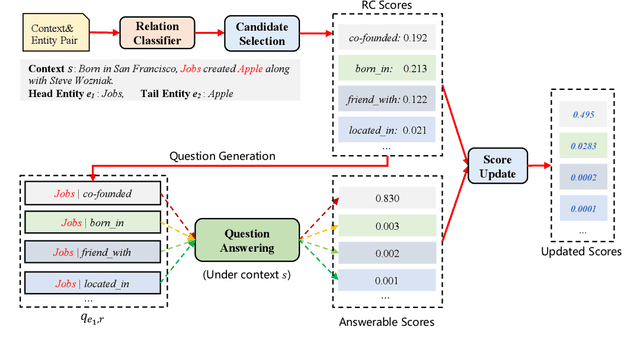

Relation extraction is an important task in knowledge acquisition and text understanding. Existing work mainly focuses on improving relation extraction by extracting effective features or designing reasonable model structures. However, few studies have examined how to validate and correct the results produced by existing relation extraction models. We argue that validation is an important and promising direction for further improving relation extraction performance. In this paper, we explore the possibility of using question answering as validation. Specifically, we propose a novel question-answering based framework to validate the results of relation extraction models. The proposed framework can be easily applied to existing relation classifiers without any additional information. We conduct extensive experiments on the popular NYT dataset to evaluate the framework and observe consistent improvements over five strong baselines.
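To make the idea of question answering as validation concrete, here is a minimal sketch. It is not the authors' implementation: the abstract does not describe how questions are built or how answers are compared, so the relation labels, the question templates in `TEMPLATES`, the `validate` function, and the exact-match rule are all hypothetical. The `answer_fn` argument stands in for any extractive question-answering model.

```python
from typing import Callable, NamedTuple


class Triple(NamedTuple):
    """A relation predicted by some existing relation classifier."""
    head: str
    relation: str
    tail: str


# Hypothetical question templates, one per relation label (an assumption;
# the abstract does not specify how questions are generated).
TEMPLATES = {
    "place_of_birth": "Where was {head} born?",
    "company_founder": "Who founded {head}?",
}


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so string comparison is less brittle."""
    return " ".join(text.lower().split())


def validate(
    triple: Triple,
    sentence: str,
    answer_fn: Callable[[str, str], str],
) -> bool:
    """Return True if the QA answer agrees with the predicted tail entity.

    answer_fn(question, context) is a stand-in for a real QA model.
    """
    template = TEMPLATES.get(triple.relation)
    if template is None:
        # No template for this relation: keep the classifier's prediction.
        return True
    question = template.format(head=triple.head)
    answer = answer_fn(question, sentence)
    # Assumed agreement rule: exact match after normalization.
    return normalize(answer) == normalize(triple.tail)


def stub_qa(question: str, context: str) -> str:
    """Toy QA model that always answers 'Honolulu' (for illustration only)."""
    return "Honolulu"


sentence = "Barack Obama was born in Honolulu, Hawaii."
kept = validate(Triple("Barack Obama", "place_of_birth", "Honolulu"), sentence, stub_qa)
rejected = validate(Triple("Barack Obama", "place_of_birth", "Chicago"), sentence, stub_qa)
```

In this sketch, a prediction whose tail entity disagrees with the QA answer is flagged for rejection or correction, while relations without a template pass through unchanged. Because the check only needs the sentence and the predicted triple, it can sit behind any relation classifier.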
