A Near-Optimal Change-Detection Based Algorithm for Piecewise-Stationary Combinatorial Semi-Bandits

Paper and Code

Aug 29, 2019

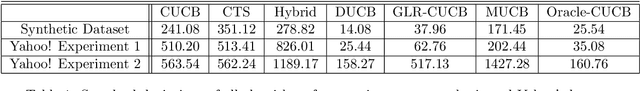

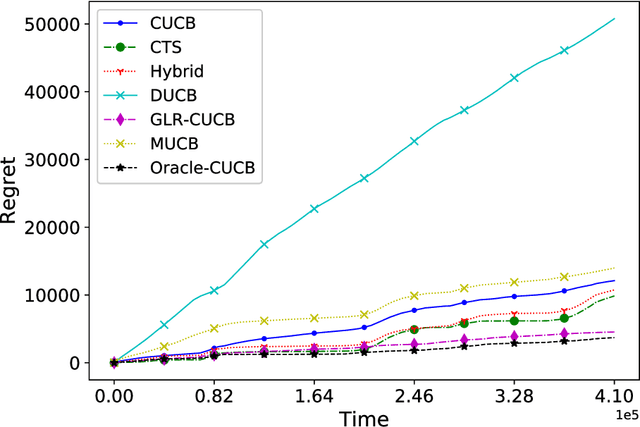

We investigate the piecewise-stationary combinatorial semi-bandit problem. Unlike the original combinatorial semi-bandit problem, our setting allows the reward distributions of the base arms to change in a piecewise-stationary manner at unknown time steps. We propose an algorithm, \texttt{GLR-CUCB}, which combines an efficient combinatorial semi-bandit algorithm, \texttt{CUCB}, with an almost parameter-free change-point detector, the \emph{Generalized Likelihood Ratio Test} (GLRT). Our analysis shows that the regret of \texttt{GLR-CUCB} is upper bounded by $\mathcal{O}(\sqrt{NKT\log{T}})$, where $N$ is the number of piecewise-stationary segments, $K$ is the number of base arms, and $T$ is the number of time steps. As a complement, we also derive a nearly matching regret lower bound of order $\Omega(\sqrt{NKT})$ for both piecewise-stationary multi-armed bandits and combinatorial semi-bandits, using information-theoretic techniques and judiciously constructed piecewise-stationary bandit instances. Our lower bound is tighter than the best previously available regret lower bound, which is $\Omega(\sqrt{T})$. Numerical experiments on both synthetic and real-world datasets show that \texttt{GLR-CUCB} outperforms other state-of-the-art algorithms.
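To make the change-detection component more concrete, the following is a minimal sketch of a generalized likelihood ratio test on a stream of Bernoulli rewards from a single base arm. It illustrates the general technique only and is not the paper's implementation: the function names, the clipping constant `eps`, the confidence level `delta`, and in particular the threshold formula are assumptions chosen for illustration. How the detector is coupled with \texttt{CUCB} is not shown.

```python
import math


def bernoulli_kl(p, q, eps=1e-12):
    """KL divergence between Bernoulli(p) and Bernoulli(q), clipped away from 0 and 1."""
    p = min(max(p, eps), 1 - eps)
    q = min(max(q, eps), 1 - eps)
    return p * math.log(p / q) + (1 - p) * math.log((1 - p) / (1 - q))


def glr_statistic(samples):
    """Maximum over split points s of the Bernoulli GLR statistic.

    For each split, compare the empirical means before and after the split
    with the overall empirical mean, weighted by the segment lengths.
    """
    n = len(samples)
    total = sum(samples)
    mean_all = total / n
    best = 0.0
    prefix = 0.0
    for s in range(1, n):
        prefix += samples[s - 1]
        mean_left = prefix / s
        mean_right = (total - prefix) / (n - s)
        stat = (s * bernoulli_kl(mean_left, mean_all)
                + (n - s) * bernoulli_kl(mean_right, mean_all))
        best = max(best, stat)
    return best


def change_detected(samples, delta=0.05):
    """Flag a change when the GLR statistic exceeds a threshold.

    The threshold below is an assumed, illustrative choice that grows
    logarithmically with the sample count; it is not taken from the paper.
    """
    n = len(samples)
    if n < 2:
        return False
    threshold = math.log(3 * n ** 1.5 / delta)
    return glr_statistic(samples) > threshold


stationary = [0, 1] * 50          # mean stays at 0.5 throughout
shifted = [0] * 50 + [1] * 50     # mean jumps from 0 to 1 halfway
print(change_detected(stationary), change_detected(shifted))
```

In this sketch the only tunable quantity is `delta`, which is in keeping with the abstract's description of the detector as almost parameter-free. The scan over all split points costs time linear in the number of samples per call.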
