A Multimodal Object-level Contrast Learning Method for Cancer Survival Risk Prediction


Sep 03, 2024

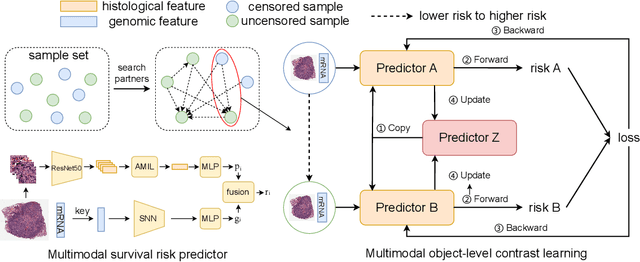

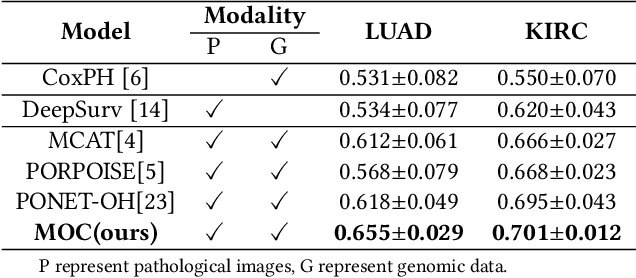

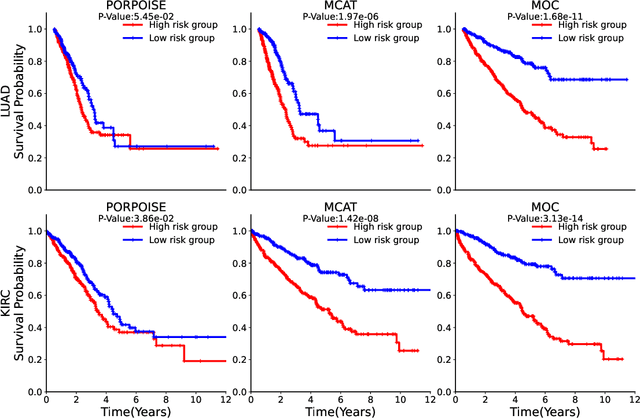

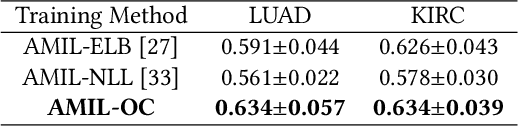

Computer-aided cancer survival risk prediction plays an important role in the timely treatment of patients. It is a challenging weakly supervised ordinal regression task that involves multiple clinical factors, such as pathological images and genomic data. In this paper, we propose a new training method, multimodal object-level contrast learning, for cancer survival risk prediction. First, we construct contrast learning pairs based on the survival risk relationships among the samples in the training set. Then we introduce the object-level contrast learning method to train the survival risk predictor. We further extend it to the multimodal scenario by applying cross-modal contrast. Considering the heterogeneity of pathological images and genomic data, we construct a multimodal survival risk predictor that employs an attention-based neural network for the images and a self-normalizing neural network for the genomic data. Finally, the survival risk predictor trained by our proposed method outperforms state-of-the-art methods on two public multimodal cancer datasets for survival risk prediction.
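The abstract does not spell out how the contrast learning pairs are built or which loss is used, so the following is only an illustrative sketch under stated assumptions, not the paper's implementation. It assumes the common comparable-pair rule for censored survival data (sample `i` is treated as higher risk than sample `j` when the event for `i` was observed before the last follow-up time of `j`) and swaps in a generic pairwise logistic ranking loss in place of the object-level contrast objective. The names `comparable_pairs` and `pairwise_ranking_loss` are hypothetical.

```python
import math


def comparable_pairs(times, events):
    """Return (i, j) index pairs where sample i is assumed higher risk than j.

    Assumption (not from the paper): i outranks j when i's event was observed
    and happened strictly before j's last recorded time.
    """
    pairs = []
    for i, (t_i, e_i) in enumerate(zip(times, events)):
        if not e_i:
            continue  # a censored sample cannot anchor a pair
        for j, t_j in enumerate(times):
            if t_i < t_j:
                pairs.append((i, j))
    return pairs


def pairwise_ranking_loss(risk, pairs):
    """Mean logistic loss that rewards risk[i] > risk[j] for each pair (i, j).

    This is a generic stand-in for a contrast objective over risk scores.
    """
    if not pairs:
        return 0.0
    total = 0.0
    for i, j in pairs:
        diff = risk[i] - risk[j]
        # log(1 + exp(-diff)), written in a numerically stable form
        total += max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))
    return total / len(pairs)


# Toy data: survival times and event indicators (1 = event observed, 0 = censored)
times = [2.0, 5.0, 3.0, 8.0]
events = [1, 0, 1, 1]
pairs = comparable_pairs(times, events)

# Predicted risk scores that agree with the pair ordering give a lower loss
# than scores that reverse it.
loss_good = pairwise_ranking_loss([3.0, 0.5, 2.0, 0.0], pairs)
loss_bad = pairwise_ranking_loss([-3.0, -0.5, -2.0, 0.0], pairs)
```

In a multimodal setting, one plausible reading of "cross-modal contrast" is that the same pairs are also scored with `risk[i]` taken from one modality and `risk[j]` from the other; that reading is an assumption here, since the abstract gives no further detail.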
