A Minibatch-SGD-Based Learning Meta-Policy for Inventory Systems with Myopic Optimal Policy

Aug 29, 2024

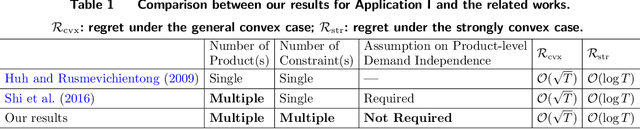

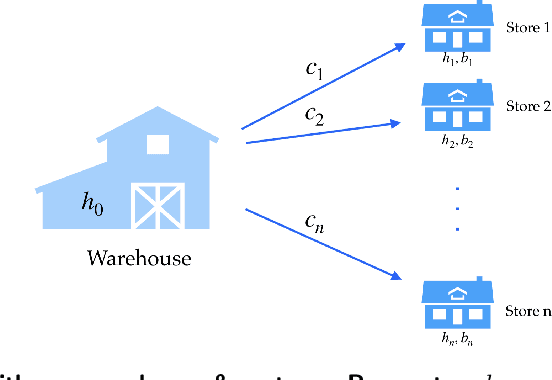

Stochastic gradient descent (SGD) has proven effective in solving many inventory control problems with demand learning. However, it often runs into the pitfall of an infeasible target inventory level, i.e., a target that is lower than the current inventory level. Several recent works (e.g., Huh and Rusmevichientong (2009), Shi et al. (2016)) successfully resolve this issue in various inventory systems. However, their techniques are rather sophisticated and difficult to apply to more complicated scenarios such as multi-product and multi-constraint inventory systems. In this paper, we address the infeasible-target-inventory-level issue from a new technical perspective: we propose a novel minibatch-SGD-based meta-policy. Our meta-policy is flexible enough to be applied to a general inventory-system framework covering a wide range of inventory management problems with a myopic clairvoyant optimal policy. By devising the optimal minibatch scheme, our meta-policy achieves a regret bound of $\mathcal{O}(\sqrt{T})$ for the general convex case and $\mathcal{O}(\log T)$ for the strongly convex case. To demonstrate the power and flexibility of our meta-policy, we apply it to three important inventory control problems, namely multi-product and multi-constraint systems, multi-echelon serial systems, and one-warehouse and multi-store systems, by carefully designing application-specific subroutines. We also conduct extensive numerical experiments demonstrating that our meta-policy enjoys competitive regret performance, high computational efficiency, and low variance across a wide range of applications.
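To make the infeasible-target issue concrete, here is a minimal sketch of generic minibatch projected SGD on a single-product, backlogged newsvendor. It is not the authors' meta-policy or their optimal minibatch scheme. Everything in it is an illustrative assumption: linear holding and backorder costs `h` and `b`, fully observed (backlogged) demand, epoch `k` lasting `k` periods, step size `eta0 / sqrt(k)`, projection onto `[0, y_max]`, and the hypothetical helper names `newsvendor_subgradient` and `minibatch_sgd_inventory`. The target is held fixed within each batch, so when on-hand inventory sits above it (an infeasible target) the policy simply orders nothing and lets demand draw the level down.

```python
import math


def newsvendor_subgradient(y, d, h, b):
    """Subgradient of h*(y - d)^+ + b*(d - y)^+ with respect to the level y."""
    return h if y >= d else -b


def minibatch_sgd_inventory(demands, h, b, y0, y_max, eta0):
    """Hypothetical minibatch projected SGD for a single-product backlog system.

    Epoch k lasts k periods (assumed schedule). The target level is frozen
    within an epoch, and the averaged subgradient updates it once per epoch.
    """
    y_target = y0
    inventory = y0  # assumed starting inventory position
    t, k, T = 0, 1, len(demands)
    levels, total_cost = [], 0.0
    while t < T:
        grads = []
        for _ in range(k):  # batch size k in epoch k (assumption)
            if t >= T:
                break
            # Order up to the target if feasible; if inventory already exceeds
            # the target (infeasible target), order nothing.
            level = max(inventory, y_target)
            d = demands[t]
            total_cost += h * max(level - d, 0.0) + b * max(d - level, 0.0)
            # Backlogged demand is fully observed, so the gradient can be
            # evaluated at the target even when the actual level differs.
            grads.append(newsvendor_subgradient(y_target, d, h, b))
            inventory = level - d  # negative inventory means backlog
            levels.append(level)
            t += 1
        g = sum(grads) / len(grads)
        eta = eta0 / math.sqrt(k)  # assumed diminishing step size
        # Projected update onto the bounded feasible interval [0, y_max].
        y_target = min(max(y_target - eta * g, 0.0), y_max)
        k += 1
    return y_target, levels, total_cost
```

With a constant demand of 10 and `h = 1`, `b = 3`, the optimal level is 10, and the final target oscillates around it with an amplitude that shrinks as the step size decays. Freezing the target within a batch is one plausible way to read the intuition that minibatching gives inventory time to drain toward an overly low target. The batch-size schedule that yields the stated regret bounds is specified in the paper, not here.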