A Converting Autoencoder Toward Low-latency and Energy-efficient DNN Inference at the Edge

Paper and Code

Mar 11, 2024

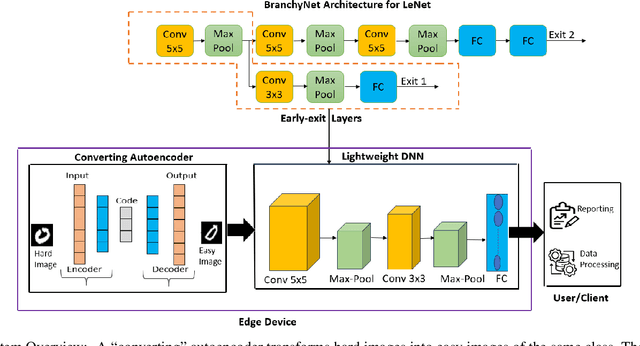

Reducing inference time and energy usage while maintaining prediction accuracy has become a significant concern for deep neural networks (DNN) inference on resource-constrained edge devices. To address this problem, we propose a novel approach based on "converting" autoencoder and lightweight DNNs. This improves upon recent work such as early-exiting framework and DNN partitioning. Early-exiting frameworks spend different amounts of computation power for different input data depending upon their complexity. However, they can be inefficient in real-world scenarios that deal with many hard image samples. On the other hand, DNN partitioning algorithms that utilize the computation power of both the cloud and edge devices can be affected by network delays and intermittent connections between the cloud and the edge. We present CBNet, a low-latency and energy-efficient DNN inference framework tailored for edge devices. It utilizes a "converting" autoencoder to efficiently transform hard images into easy ones, which are subsequently processed by a lightweight DNN for inference. To the best of our knowledge, such autoencoder has not been proposed earlier. Our experimental results using three popular image-classification datasets on a Raspberry Pi 4, a Google Cloud instance, and an instance with Nvidia Tesla K80 GPU show that CBNet achieves up to 4.8x speedup in inference latency and 79% reduction in energy usage compared to competing techniques while maintaining similar or higher accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge