A Continuous-Time View of Early Stopping for Least Squares Regression

Oct 23, 2018

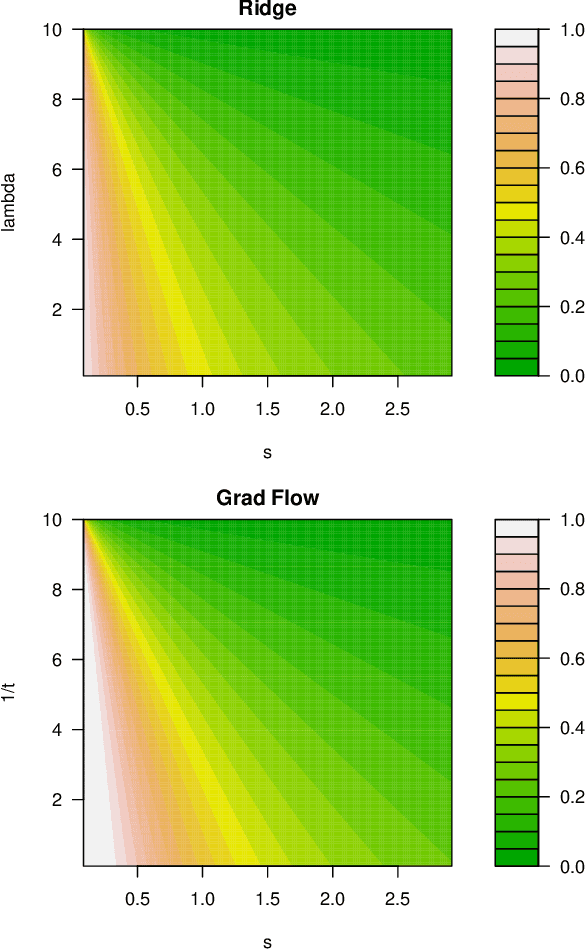

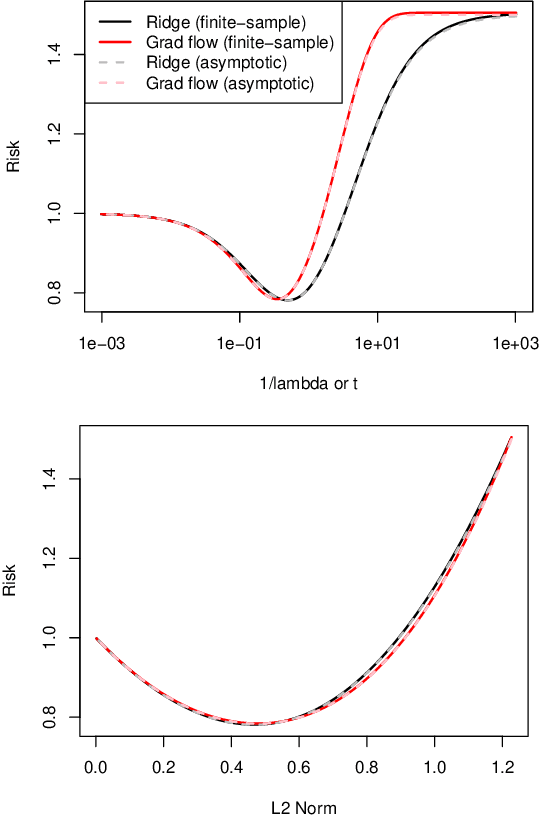

We study the statistical properties of the iterates generated by gradient descent, applied to the fundamental problem of least squares regression. We take a continuous-time view, i.e., we consider infinitesimal step sizes in gradient descent, in which case the iterates form a trajectory called gradient flow. In a random matrix theory setup, which allows the number of samples $n$ and features $p$ to diverge in such a way that $p/n \to \gamma \in (0,\infty)$, we derive and analyze an asymptotic risk expression for gradient flow. In particular, we compare the asymptotic risk profile of gradient flow to that of ridge regression. When the feature covariance is spherical, we show that the optimal asymptotic gradient flow risk is between 1 and 1.25 times the optimal asymptotic ridge risk. Further, we derive a calibration between the two risk curves under which the asymptotic gradient flow risk is no more than 2.25 times the asymptotic ridge risk, at all points along the path. We present a number of other results illustrating the connections between gradient flow and $\ell_2$ regularization, as well as numerical experiments that support our theory.
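To make the objects in the abstract concrete, here is a minimal numerical sketch, not the authors' code. It assumes the loss $\frac{1}{2n}\|y - X\beta\|_2^2$, initialization at zero, and the ridge objective $\frac{1}{n}\|y - X\beta\|_2^2 + \lambda\|\beta\|_2^2$; under those assumptions gradient flow has the closed form $\hat\beta(t) = (X^\top X)^{+}\,(I - e^{-tX^\top X/n})\,X^\top y$. The function names, the simulated data, and the pairing $\lambda = 1/t$ are illustrative choices; the abstract does not state which calibration it uses.

```python
import numpy as np

def gradient_flow(X, y, t):
    """Closed-form gradient flow at time t for the loss (1/(2n))||y - X b||^2, b(0) = 0."""
    n = X.shape[0]
    s, V = np.linalg.eigh(X.T @ X / n)       # eigenvalues s, eigenvectors V
    s = np.clip(s, 0.0, None)                # guard against tiny negative round-off
    safe = np.where(s > 1e-12, s, 1.0)
    # Spectral filter (1 - exp(-t s)) / s, with its limit t as s -> 0.
    filt = np.where(s > 1e-12, -np.expm1(-t * s) / safe, t)
    return V @ (filt * (V.T @ (X.T @ y / n)))

def ridge(X, y, lam):
    """Ridge solution for the objective (1/n)||y - X b||^2 + lam * ||b||^2."""
    n, p = X.shape
    return np.linalg.solve(X.T @ X / n + lam * np.eye(p), X.T @ y / n)

def gradient_descent(X, y, t, step=1e-3):
    """Discrete gradient descent run for t / step iterations, for comparison."""
    n, p = X.shape
    beta = np.zeros(p)
    for _ in range(int(round(t / step))):
        beta += step * (X.T @ (y - X @ beta)) / n
    return beta

# Simulated data with spherical feature covariance (an assumption of this sketch).
rng = np.random.default_rng(0)
n, p = 200, 50
X = rng.standard_normal((n, p))
beta0 = rng.standard_normal(p) / np.sqrt(p)
y = X @ beta0 + rng.standard_normal(n)

t = 2.0
b_flow = gradient_flow(X, y, t)
b_gd = gradient_descent(X, y, t)
b_ridge = ridge(X, y, 1.0 / t)               # hypothetical pairing lam = 1/t

# Single-draw estimation errors; the paper's statements concern (asymptotic) risk.
err_flow = np.sum((b_flow - beta0) ** 2)
err_ridge = np.sum((b_ridge - beta0) ** 2)
print(f"flow error: {err_flow:.4f}, ridge error: {err_ridge:.4f}")
```

With a small step size, the gradient descent iterate at iteration $t/\text{step}$ should closely track the closed-form flow at time $t$, and for large $t$ the flow approaches the least squares solution. The printed errors come from a single simulated draw, so they only illustrate the comparison and do not verify the asymptotic risk bounds.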
