A Channel-ensemble Approach: Unbiased and Low-variance Pseudo-labels is Critical for Semi-supervised Classification

Paper and Code

Mar 27, 2024

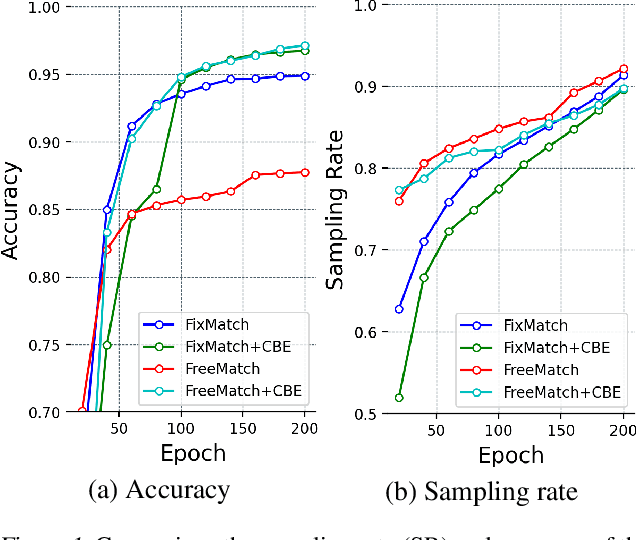

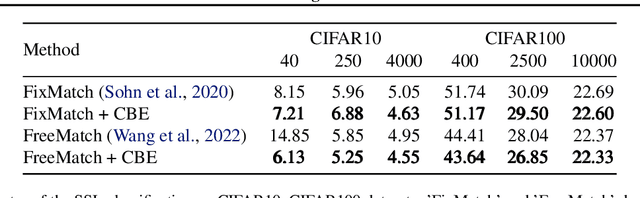

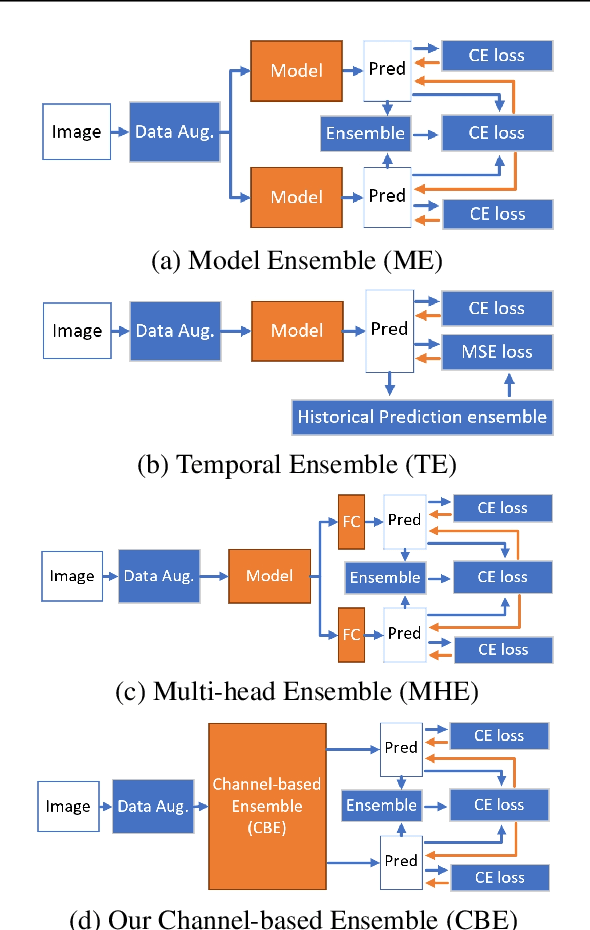

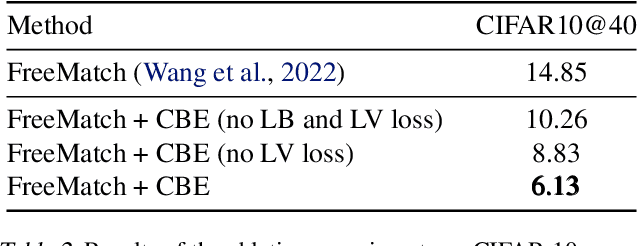

Semi-supervised learning (SSL) is a practical challenge in computer vision. Pseudo-label (PL) methods, e.g., FixMatch and FreeMatch, obtain the State Of The Art (SOTA) performances in SSL. These approaches employ a threshold-to-pseudo-label (T2L) process to generate PLs by truncating the confidence scores of unlabeled data predicted by the self-training method. However, self-trained models typically yield biased and high-variance predictions, especially in the scenarios when a little labeled data are supplied. To address this issue, we propose a lightweight channel-based ensemble method to effectively consolidate multiple inferior PLs into the theoretically guaranteed unbiased and low-variance one. Importantly, our approach can be readily extended to any SSL framework, such as FixMatch or FreeMatch. Experimental results demonstrate that our method significantly outperforms state-of-the-art techniques on CIFAR10/100 in terms of effectiveness and efficiency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge