Liangjian Wen

AFINet: Attentive Feature Integration Networks for Image Classification

May 10, 2021

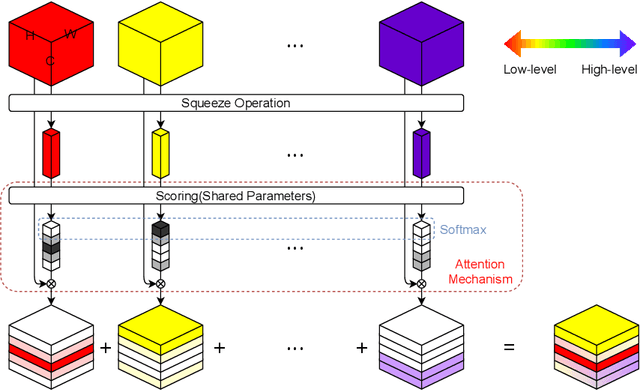

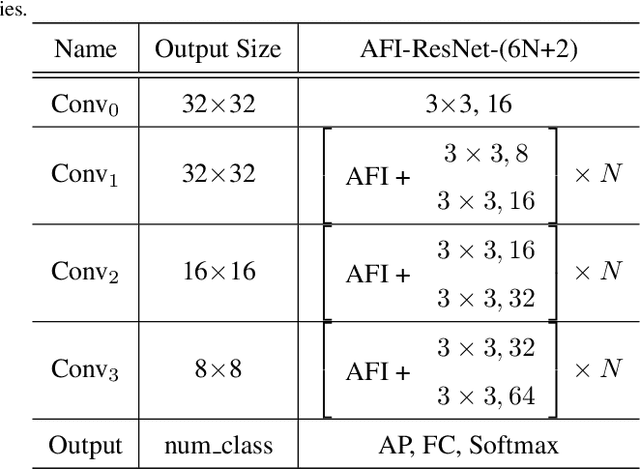

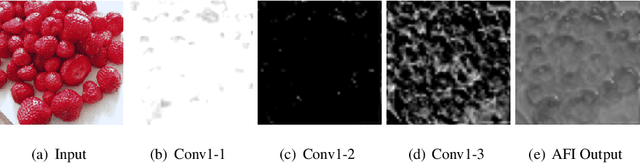

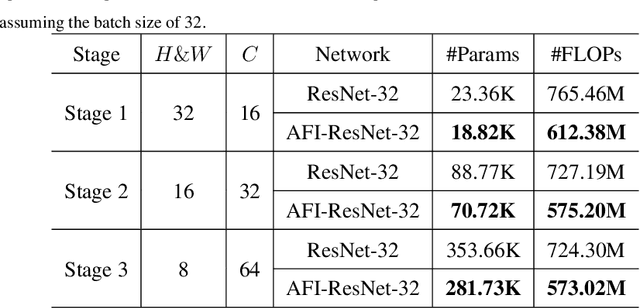

Abstract: Convolutional Neural Networks (CNNs) have achieved tremendous success in a number of learning tasks, including image classification. Recent advanced CNN models, such as ResNets, rely mainly on skip connections to avoid vanishing gradients. DenseNet designs suggest an alternative strategy: creating additional bypasses to transfer features. In this paper, we design Attentive Feature Integration (AFI) modules, which are widely applicable to most recent network architectures, leading to new architectures named AFI-Nets. AFI-Nets explicitly model the correlations among different levels of features and selectively transfer features with little overhead. AFI-ResNet-152 obtains a 1.24% relative improvement on the ImageNet dataset while decreasing the FLOPs by about 10% and the number of parameters by about 9.2% compared to ResNet-152.
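The abstract does not spell out how an AFI module is built, so the following is only a minimal sketch of the general idea of attention-weighted integration across feature levels, not the paper's actual module. It assumes each level has already been reduced to a fixed-length vector, and the names `integrate_features` and `query` are hypothetical, with `query` standing in for a learned parameter.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def integrate_features(level_features, query):
    """Fuse per-level feature vectors with attention weights.

    level_features: list of equal-length vectors, one per network level
                    (assumed already pooled to a common size).
    query:          vector of the same length; a stand-in for a learned
                    parameter (hypothetical, not taken from the paper).
    Returns the fused vector and the per-level attention weights.
    """
    # Score each level by its dot product with the query.
    scores = [sum(f * q for f, q in zip(feat, query)) for feat in level_features]
    # Normalize the scores so the weights over levels sum to 1.
    weights = softmax(scores)
    # Weighted sum across levels, computed one dimension at a time.
    dim = len(query)
    fused = [
        sum(w * feat[d] for w, feat in zip(weights, level_features))
        for d in range(dim)
    ]
    return fused, weights


if __name__ == "__main__":
    levels = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    fused, weights = integrate_features(levels, query=[1.0, 1.0])
    print("weights:", weights)
    print("fused:", fused)
```

In this sketch, levels whose features align more strongly with the query receive larger weights, which is one simple way to "selectively transfer" features; the actual AFI design may differ substantially.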
