Zoe Porter

The case for delegated AI autonomy for Human AI teaming in healthcare

Mar 24, 2025

Abstract: In this paper we propose an advanced approach to integrating artificial intelligence (AI) into healthcare: autonomous decision support. This approach allows the AI algorithm to act autonomously for a subset of patient cases whilst serving a supportive role in other subsets of patient cases, based on defined delegation criteria. By leveraging the complementary strengths of both humans and AI, it aims to deliver greater overall performance than existing human-AI teaming models. It ensures safe handling of patient cases and potentially reduces clinician review time, whilst being mindful of AI tool limitations. After setting the approach within the context of current human-AI teaming models, we outline the delegation criteria and apply them to a specific AI-based tool used in histopathology. We then discuss the potential impact of the approach and the regulatory requirements for its successful implementation.

Unravelling Responsibility for AI

Aug 04, 2023

Abstract: To reason about where responsibility does and should lie in complex situations involving AI-enabled systems, we first need a sufficiently clear and detailed cross-disciplinary vocabulary for talking about responsibility. Responsibility is a triadic relation involving an actor, an occurrence, and a way of being responsible. As part of a conscious effort towards 'unravelling' the concept of responsibility to support practical reasoning about responsibility for AI, this paper takes the three-part formulation 'Actor A is responsible for Occurrence O' and identifies valid combinations of subcategories of A, is responsible for, and O. These valid combinations, which we term "responsibility strings", are grouped into four senses of responsibility: role-responsibility; causal responsibility; legal liability-responsibility; and moral responsibility. They are illustrated with two running examples: one involving a healthcare AI-based system, and the other the fatal collision of an autonomous vehicle (AV) with a pedestrian in Tempe, Arizona, in 2018. The output of the paper is 81 responsibility strings. The aim is that these strings provide the vocabulary for people across disciplines to be clear and specific about the different ways that different actors are responsible for different occurrences within a complex event for which responsibility is sought, allowing for precise and targeted interdisciplinary normative deliberations.

A Principle-based Ethical Assurance Argument for AI and Autonomous Systems

Mar 29, 2022

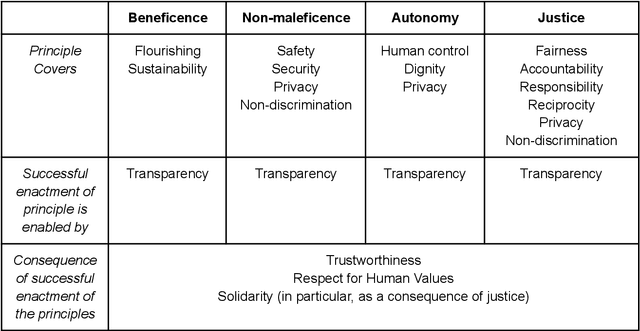

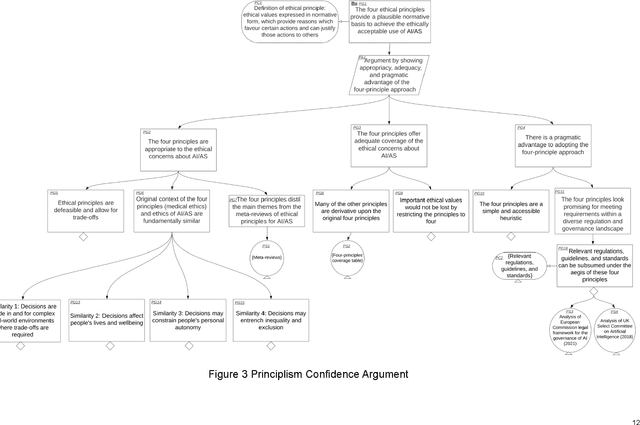

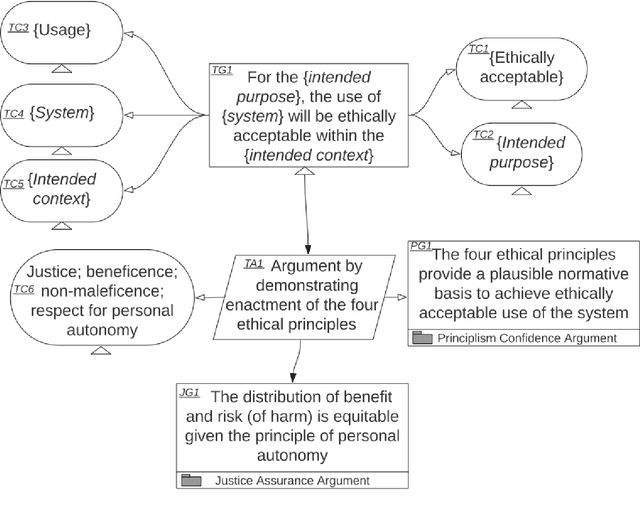

Abstract: An assurance case presents a clear and defensible argument, supported by evidence, that a system will operate as intended in a particular context. Assurance cases often inform third-party certification of a system. One emerging proposal within the trustworthy AI and Autonomous Systems (AS) research community is to extend and apply the assurance case methodology to achieve justified confidence that a system will be ethically acceptable when used in a particular context. In this paper, we develop and further advance this proposal in order to bring the idea of ethical assurance cases to life. First, we discuss the assurance case methodology and the Goal Structuring Notation (GSN), a graphical notation that is widely used to record and present assurance cases. Second, we describe four core ethical principles to guide the design and deployment of AI/AS: justice; beneficence; non-maleficence; and respect for personal autonomy. Third, we bring these two components together and structure an ethical assurance argument pattern (a reusable template for ethical assurance cases) on the basis of the four ethical principles. We call this a Principle-based Ethical Assurance Argument pattern. Throughout, we connect stages of the argument to examples of AI/AS applications and contexts, which helps to show the initial plausibility of the proposed methodology.