Ziv Bar-Joseph

Recovering Time-Varying Networks From Single-Cell Data

Oct 01, 2024

Abstract: Gene regulation is a dynamic process that underlies all aspects of human development, disease response, and other key biological processes. The reconstruction of temporal gene regulatory networks has conventionally relied on regression analysis, graphical models, or other types of relevance networks. With the large increase in time series single-cell data, new approaches are needed to address the unique scale and nature of this data for reconstructing such networks. Here, we develop a deep neural network, Marlene, to infer dynamic graphs from time series single-cell gene expression data. Marlene constructs directed gene networks using a self-attention mechanism where the weights evolve over time using recurrent units. By employing meta learning, the model is able to recover accurate temporal networks even for rare cell types. In addition, Marlene can identify gene interactions relevant to specific biological responses, including COVID-19 immune response, fibrosis, and aging.
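To illustrate how self-attention can yield a directed gene-gene graph, here is a minimal sketch in plain Python. It is not Marlene's architecture: the function names, the `features` layout (genes by feature dimension), and the projection matrices `w_query` and `w_key` are assumptions made for illustration, and the recurrent update of the weights over time is omitted. The point it shows is that separate query and key projections make the score from gene i to gene j differ from the score from j to i, so the resulting matrix can be read as directed edge weights.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_adjacency(features, w_query, w_key):
    """Return a genes x genes matrix of attention weights.

    features: one row per gene (hypothetical per-gene feature vectors).
    w_query, w_key: projection matrices (hypothetical, normally learned).
    Row i holds the normalized weights gene i places on every gene.
    """
    q = matmul(features, w_query)
    k = matmul(features, w_key)
    scale = math.sqrt(len(w_query[0]))
    scores = [
        [sum(qi * kj for qi, kj in zip(q_row, k_row)) / scale for k_row in k]
        for q_row in q
    ]
    return [softmax(row) for row in scores]


if __name__ == "__main__":
    feats = [[1.0, 0.0], [0.0, 1.0]]
    adj = attention_adjacency(feats, [[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [0.0, 0.0]])
    for row in adj:
        print([round(v, 3) for v in row])
```

In a time-varying setting, one such matrix would be produced per time point, with the projection weights changing between time points.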

Many-Shot In-Context Learning for Molecular Inverse Design

Jul 26, 2024

Abstract: Large Language Models (LLMs) have demonstrated great performance in few-shot In-Context Learning (ICL) for a variety of generative and discriminative chemical design tasks. The newly expanded context windows of LLMs can further improve ICL capabilities for molecular inverse design and lead optimization. To take full advantage of these capabilities we developed a new semi-supervised learning method that overcomes the lack of experimental data available for many-shot ICL. Our approach involves iterative inclusion of LLM generated molecules with high predicted performance, along with experimental data. We further integrated our method in a multi-modal LLM which allows for the interactive modification of generated molecular structures using text instructions. As we show, the new method greatly improves upon existing ICL methods for molecular design while being accessible and easy to use for scientists.
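The iterative inclusion step described above can be sketched roughly as follows. This is an illustrative loop under stated assumptions, not the authors' implementation: `generate` stands in for an LLM call that proposes molecules from the current in-context examples, `predict` stands in for a property predictor, and `rounds`, `per_round`, and `threshold` are hypothetical parameters.

```python
def grow_context(experimental, generate, predict, rounds=3, per_round=2, threshold=0.5):
    """Grow a many-shot context from experimental data plus generated molecules.

    experimental: list of (molecule, score) pairs from real measurements.
    generate: hypothetical callable, context -> list of candidate molecules.
    predict: hypothetical callable, molecule -> predicted score.
    """
    context = list(experimental)
    for _ in range(rounds):
        seen = {mol for mol, _ in context}
        # Score only candidates that are not already in the context.
        scored = [(mol, predict(mol)) for mol in generate(context) if mol not in seen]
        # Keep the highest-scoring candidates that clear the threshold.
        keep = sorted(
            (pair for pair in scored if pair[1] >= threshold),
            key=lambda pair: pair[1],
            reverse=True,
        )[:per_round]
        if not keep:
            break  # nothing new is good enough, so stop early
        context.extend(keep)
    return context


if __name__ == "__main__":
    # Stub generator and predictor, used only to exercise the loop.
    demo = grow_context(
        [("CCO", 0.4)],
        generate=lambda ctx: ["C" * (len(ctx) + 1), "N"],
        predict=lambda mol: 0.9 if mol.startswith("C") else 0.1,
        rounds=2,
        per_round=1,
    )
    print(demo)
```

Passing the generator and predictor in as callables keeps the sketch independent of any particular LLM or property model.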

Causal inference using deep neural networks

Nov 25, 2020

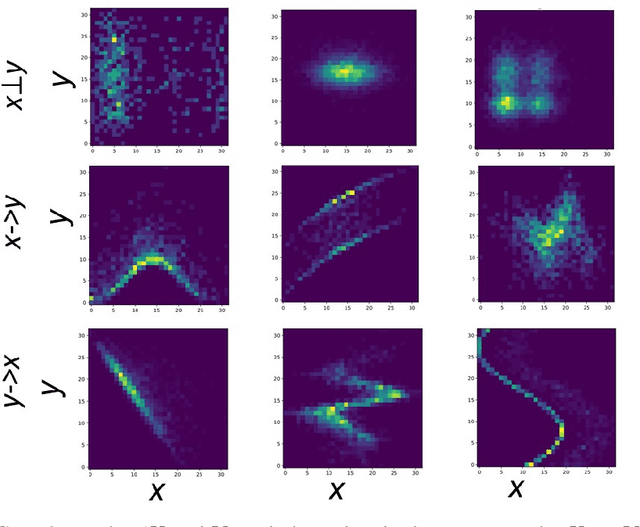

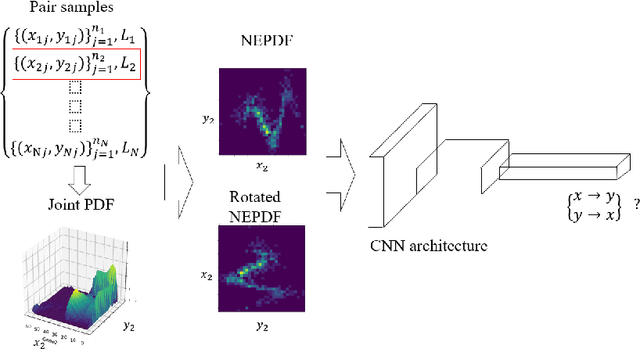

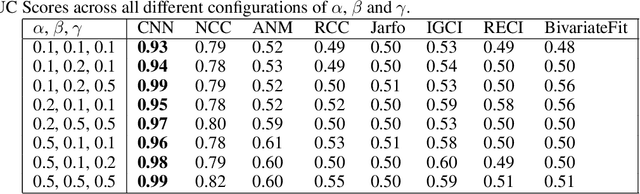

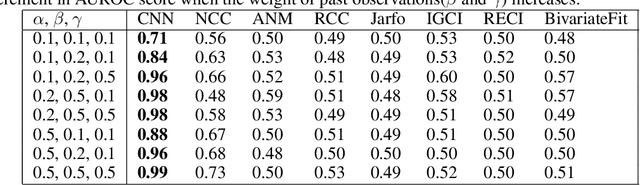

Abstract: Causal inference from observational data is a core problem in many scientific fields. Here we present a general supervised deep learning framework that infers causal interactions by transforming the input vectors to an image-like representation for every pair of inputs. Given a training dataset, we first construct a normalized empirical probability density distribution (NEPDF) matrix for each pair. We then train a convolutional neural network (CNN) on the NEPDFs to predict causality. We tested the method on several simulated and real-world datasets and compared it to prior methods for causal inference. As we show, the method is general, can efficiently handle very large datasets, and improves upon prior methods.
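As a rough illustration of the image-like representation, the sketch below builds a normalized two-dimensional histogram from paired samples of two variables. The abstract does not specify binning or normalization details, so the function name `nepdf`, the equal-width bins, the `bins` parameter, and normalization by sample count are all assumptions. The resulting matrix is the kind of input a CNN could consume.

```python
def nepdf(x, y, bins=8):
    """Return a bins x bins matrix of joint frequencies for paired samples.

    x, y: equal-length sequences of numbers, one pair per observation.
    Entry [i][j] is the fraction of pairs whose x falls in bin i and
    whose y falls in bin j; all entries sum to 1.
    """
    if len(x) != len(y) or len(x) == 0:
        raise ValueError("x and y must be non-empty and of equal length")

    def bin_index(value, lo, hi):
        if hi == lo:
            return 0  # constant input: everything lands in the first bin
        idx = int((value - lo) / (hi - lo) * bins)
        return min(idx, bins - 1)  # the maximum value goes in the last bin

    x_lo, x_hi = min(x), max(x)
    y_lo, y_hi = min(y), max(y)
    counts = [[0] * bins for _ in range(bins)]
    for xv, yv in zip(x, y):
        counts[bin_index(xv, x_lo, x_hi)][bin_index(yv, y_lo, y_hi)] += 1

    total = len(x)
    return [[c / total for c in row] for row in counts]


if __name__ == "__main__":
    matrix = nepdf([0, 1, 2, 3], [0, 1, 2, 3], bins=2)
    print(matrix)  # [[0.5, 0.0], [0.0, 0.5]]
```

One such matrix would be computed for every pair of inputs, labeled with the known relationship for training pairs, and stacked into a batch for the classifier.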
