Zihou Xia

Organization and Understanding of a Tactile Information Dataset TacAct During Physical Human-Robot Interactions

Aug 11, 2021

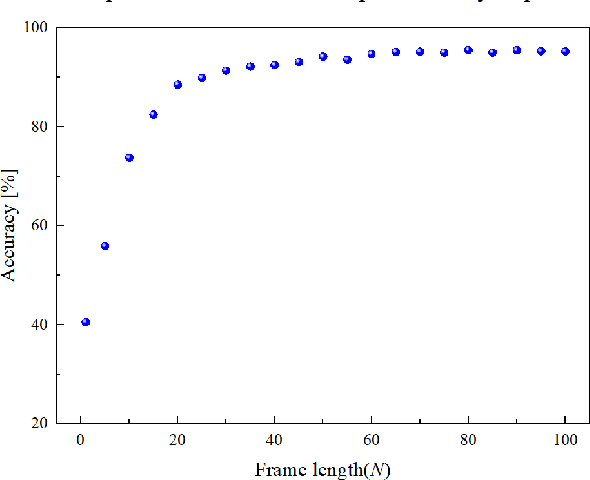

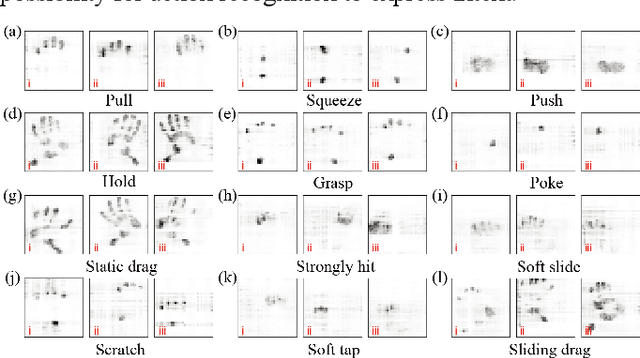

Abstract: Advanced service robots require superior tactile intelligence to guarantee safety during human contact and to supplement visual and auditory information in human-robot interaction, especially when a robot is in physical contact with a human. Tactile intelligence is the capability to perceive and recognize tactile information. It is built on learning from large amounts of tactile data and on understanding the physical meaning behind those data. This report introduces a recently collected and organized dataset, "TacAct", which records the real-time pressure distribution when a human subject touches the arms of a nursing-care robot. The dataset contains data from 50 subjects who performed a total of 24,000 touch actions. The report describes the dataset in detail and presents a preliminary analysis of the data. It also tests the validity of the collected information by using a LeNet-5 convolutional neural network to classify different types of touch actions. We believe the TacAct dataset will be highly beneficial to the human-interactive robotics community for understanding tactile profiles under various circumstances.
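The abstract does not state the resolution of the TacAct pressure maps, the number of touch-action classes, or how LeNet-5 was adapted. The sketch below therefore assumes a single-channel pressure map and the textbook LeNet-5 layer sizes (6 and 16 filters of 5x5, 2x2 subsampling with stride 2, fully connected layers of 120 and 84 units). It only traces how tensor shapes shrink through the network. The helper `lenet5_shapes`, the 32x32 input, and `num_classes=10` are hypothetical placeholders, not values from the TacAct report.

```python
def conv_out(n: int, k: int, stride: int = 1, pad: int = 0) -> int:
    """Output size along one spatial axis for a conv or pooling window."""
    return (n + 2 * pad - k) // stride + 1


def lenet5_shapes(height: int, width: int, num_classes: int) -> list[tuple[str, tuple[int, ...]]]:
    """Trace tensor shapes through a textbook LeNet-5 stack (hypothetical helper).

    Assumes a single-channel input (one pressure value per sensor cell),
    unpadded 5x5 convolutions, and 2x2 subsampling with stride 2.
    """
    # Below 16x16 the second pooling stage would have nothing to pool.
    if height < 16 or width < 16:
        raise ValueError("input too small for two conv+pool stages")
    shapes = []
    h, w = height, width
    # C1: 6 feature maps from 5x5 kernels
    c, h, w = 6, conv_out(h, 5), conv_out(w, 5)
    shapes.append(("C1", (c, h, w)))
    # S2: 2x2 subsampling with stride 2 roughly halves each spatial axis
    h, w = conv_out(h, 2, stride=2), conv_out(w, 2, stride=2)
    shapes.append(("S2", (c, h, w)))
    # C3: 16 feature maps from 5x5 kernels
    c, h, w = 16, conv_out(h, 5), conv_out(w, 5)
    shapes.append(("C3", (c, h, w)))
    # S4: second 2x2 subsampling
    h, w = conv_out(h, 2, stride=2), conv_out(w, 2, stride=2)
    shapes.append(("S4", (c, h, w)))
    # Flatten, then the 120- and 84-unit fully connected layers
    shapes.append(("flatten", (c * h * w,)))
    shapes.append(("F5", (120,)))
    shapes.append(("F6", (84,)))
    # One output score per touch-action class (class count is a placeholder)
    shapes.append(("output", (num_classes,)))
    return shapes


for name, shape in lenet5_shapes(32, 32, num_classes=10):
    print(name, shape)
```

For a 32x32 input the feature maps shrink 32 → 28 → 14 → 10 → 5, so 16 × 5 × 5 = 400 values feed the first fully connected layer. Many modern re-implementations treat the original C5 convolution as a fully connected layer, as done here, so that inputs other than 32x32 can be handled without changing the kernel size.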
