Yung-Hong Sun

C-DOG: Training-Free Multi-View Multi-Object Association in Dense Scenes Without Visual Feature via Connected δ-Overlap Graphs

Jul 18, 2025

Abstract: Multi-view multi-object association is a fundamental step in 3D reconstruction pipelines, enabling consistent grouping of object instances across multiple camera views. Existing methods often rely on appearance features or geometric constraints such as epipolar consistency. However, these approaches can fail when objects are visually indistinguishable or observations are corrupted by noise. We propose C-DOG, a training-free framework that serves as an intermediate module bridging object detection (or pose estimation) and 3D reconstruction, without relying on visual features. It combines connected δ-overlap graph modeling with epipolar geometry to robustly associate detections across views. Each 2D observation is represented as a graph node, with edges weighted by epipolar consistency. A δ-neighbor-overlap clustering step identifies strongly consistent groups while tolerating noise and partial connectivity. To further improve robustness, we incorporate Interquartile Range (IQR)-based filtering and a 3D back-projection error criterion to eliminate inconsistent observations. Extensive experiments on synthetic benchmarks demonstrate that C-DOG outperforms geometry-based baselines and remains robust under challenging conditions, including high object density, the absence of visual features, and limited camera overlap, making it well-suited for scalable 3D reconstruction in real-world scenarios.
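To make the graph idea concrete, the following is a minimal sketch, not the C-DOG algorithm itself. It treats each 2D detection as a node, links two detections in different views when the point-to-epipolar-line distance falls below a threshold, and then groups nodes with plain connected components (union-find). The δ-neighbor-overlap clustering, IQR filtering, and back-projection check from the abstract are not reproduced here. The function names, the `threshold` parameter, and the dictionary layout are all assumptions made for illustration.

```python
from math import hypot


def epipolar_distance(F, x1, x2):
    """Pixel distance from x2 (view B) to the epipolar line F @ x1 of x1 (view A).

    F is a 3x3 fundamental matrix given as nested lists (assumed known).
    """
    u, v = x1
    # Epipolar line l = F @ [u, v, 1], written as a*x + b*y + c = 0.
    a = F[0][0] * u + F[0][1] * v + F[0][2]
    b = F[1][0] * u + F[1][1] * v + F[1][2]
    c = F[2][0] * u + F[2][1] * v + F[2][2]
    norm = hypot(a, b)
    if norm == 0.0:
        return float("inf")  # degenerate line: treat as inconsistent
    return abs(a * x2[0] + b * x2[1] + c) / norm


def associate(detections, fundamentals, threshold):
    """Group detections across views by connected components.

    detections:   {view_name: [(u, v), ...]}
    fundamentals: {(view_a, view_b): F} mapping points in view_a to lines in view_b
    threshold:    maximum epipolar distance (pixels) for an edge (hypothetical value)
    """
    nodes = [(view, i) for view, pts in detections.items() for i in range(len(pts))]
    parent = {n: n for n in nodes}

    def find(n):
        # Union-find lookup with path halving.
        while parent[n] != n:
            parent[n] = parent[parent[n]]
            n = parent[n]
        return n

    for (va, vb), F in fundamentals.items():
        for i, p in enumerate(detections[va]):
            for j, q in enumerate(detections[vb]):
                if epipolar_distance(F, p, q) < threshold:
                    parent[find((va, i))] = find((vb, j))

    groups = {}
    for n in nodes:
        groups.setdefault(find(n), []).append(n)
    return sorted(sorted(g) for g in groups.values())
```

Plain connected components will merge two objects as soon as a single spurious edge joins them, which is presumably the kind of failure the stricter overlap-based clustering described in the abstract is meant to avoid in dense scenes.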

Sampling Strategies for Efficient Training of Deep Learning Object Detection Algorithms

May 23, 2025

Abstract: Two sampling strategies are investigated to enhance efficiency in training a deep learning object detection model. These sampling strategies are employed under the assumption of Lipschitz continuity of deep learning models. The first strategy is uniform sampling, which seeks to obtain samples evenly yet randomly throughout the state space of the object dynamics. The second strategy, frame difference sampling, is developed to exploit the temporal redundancy among successive frames in a video. Experimental results indicate that the proposed sampling strategies provide a dataset that yields good training performance while requiring relatively few manually labelled samples.
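One plausible reading of frame difference sampling can be sketched as follows: keep a frame for labelling only when it differs enough from the last frame that was kept. The abstract does not give the actual selection rule, so the mean-absolute-difference metric, the `threshold` parameter, and the function names below are assumptions chosen for illustration. Frames are represented as flat lists of pixel intensities to keep the example dependency-free.

```python
def mean_abs_diff(frame_a, frame_b):
    """Mean absolute per-pixel difference between two equally sized frames."""
    total = sum(abs(a - b) for a, b in zip(frame_a, frame_b))
    return total / len(frame_a)


def frame_difference_sample(frames, threshold):
    """Return indices of frames that differ from the last kept frame by more than threshold.

    The first frame is always kept. Near-duplicate successive frames are skipped,
    which reduces the number of frames that need manual labels.
    """
    if not frames:
        return []
    kept = [0]
    for idx in range(1, len(frames)):
        if mean_abs_diff(frames[idx], frames[kept[-1]]) > threshold:
            kept.append(idx)
    return kept
```

Comparing against the last kept frame, rather than the immediately preceding one, lets slow gradual motion eventually trigger a new sample instead of being skipped indefinitely.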

EasyVis2: A Real Time Multi-view 3D Visualization for Laparoscopic Surgery Training Enhanced by a Deep Neural Network YOLOv8-Pose

Dec 21, 2024

Abstract: EasyVis2 is a system designed for hands-free, real-time 3D visualization during laparoscopic surgery. It incorporates a surgical trocar equipped with a set of micro-cameras, which are inserted into the body cavity to provide an expanded field of view and a 3D perspective of the surgical procedure. A sophisticated deep neural network algorithm, YOLOv8-Pose, is tailored to estimate the position and orientation of surgical instruments in each individual camera view. Subsequently, 3D surgical tool pose estimation is performed using associated 2D key points across multiple views. This enables the rendering of a 3D surface model of the surgical tools overlaid on the observed background scene for real-time visualization. In this study, we explain the process of developing a training dataset for new surgical tools to customize YOLOv8-Pose while minimizing labeling efforts. Extensive experiments were conducted to compare EasyVis2 with the original EasyVis, revealing that, with the same number of cameras, the new system improves 3D reconstruction accuracy and reduces computation time. Additionally, experiments with 3D rendering on real animal tissue visually demonstrated the distance between surgical tools and tissues by displaying virtual side views, indicating potential applications in real surgeries in the future.
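The step from associated 2D key points to a 3D point can be illustrated with a generic least-squares ray intersection. This is a sketch under stated assumptions, not the EasyVis2 reconstruction code: each key point is assumed to have already been back-projected into a viewing ray (camera center plus direction) using known calibration, and the functions `det3`, `solve3`, and `triangulate_rays` are hypothetical helpers.

```python
from math import sqrt


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(A, b):
    """Solve the 3x3 system A x = b with Cramer's rule."""
    d = det3(A)
    if abs(d) < 1e-12:
        raise ValueError("rays are (nearly) parallel; the point is not determined")
    solution = []
    for col in range(3):
        M = [row[:] for row in A]
        for r in range(3):
            M[r][col] = b[r]
        solution.append(det3(M) / d)
    return solution


def triangulate_rays(centers, directions):
    """Point minimizing the summed squared distance to a set of 3D rays.

    centers:    camera centers, one (x, y, z) per view
    directions: ray directions through the observed key point, one per view
    Solves sum_i (I - d_i d_i^T) X = sum_i (I - d_i d_i^T) c_i.
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for c, d in zip(centers, directions):
        n = sqrt(sum(x * x for x in d))
        d = [x / n for x in d]  # normalize so (I - d d^T) is a projector
        for r in range(3):
            for k in range(3):
                m = (1.0 if r == k else 0.0) - d[r] * d[k]
                A[r][k] += m
                b[r] += m * c[k]
    return solve3(A, b)
```

With noise-free rays that all pass through the same point, the solution is that point. With noisy detections it returns the point closest to all rays in the least-squares sense, so adding views generally tightens the estimate.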

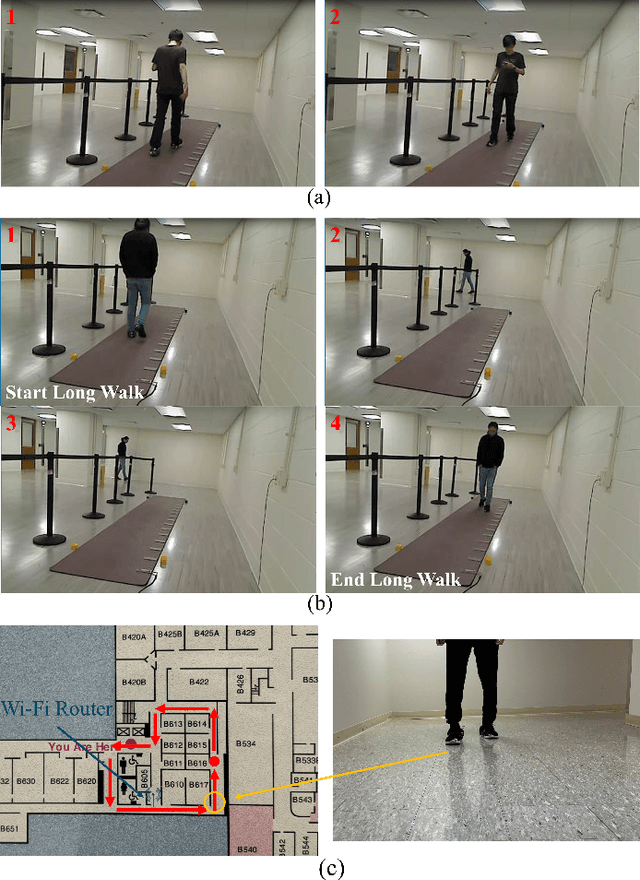

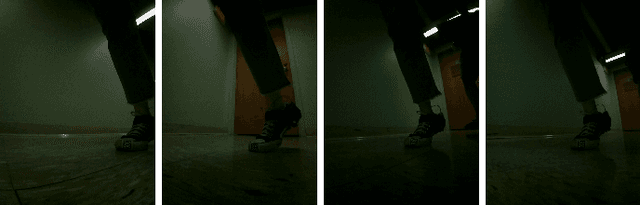

A Wearable Gait Monitoring System for 17 Gait Parameters Based on Computer Vision

Nov 16, 2024

Abstract: We developed a shoe-mounted gait monitoring system capable of tracking up to 17 gait parameters, including gait length, step time, stride velocity, and others. The system employs a stereo camera mounted on one shoe to track a marker placed on the opposite shoe, enabling the estimation of spatial gait parameters. Additionally, a Force Sensitive Resistor (FSR) affixed to the heel of the shoe, combined with a custom-designed algorithm, is used to measure temporal gait parameters. In tests on multiple participants, with a gait mat as the reference, the accuracy of all measured gait parameters exceeded 93.61%. The system also demonstrated a low drift of 4.89% during long-distance walking. A gait identification task conducted on participants using a trained Transformer model achieved 95.7% accuracy on the dataset collected by the proposed system, demonstrating that our hardware has the potential to collect long-sequence gait data suitable for integration with current Large Language Models (LLMs). The system is cost-effective, user-friendly, and well-suited for real-life measurements.
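The abstract does not describe the custom algorithm used with the FSR, so the following is only a generic sketch of how temporal parameters could be derived from a heel pressure signal. It marks a heel strike wherever the signal rises through a threshold and takes the time between consecutive strikes of the same foot as the stride time. The function names, the `threshold` value, and the sampling rate are assumptions.

```python
def heel_strike_times(samples, sample_rate_hz, threshold):
    """Times (seconds) at which the FSR signal rises through the threshold."""
    strikes = []
    for i in range(1, len(samples)):
        # Rising edge: previous sample below the threshold, current at or above it.
        if samples[i - 1] < threshold <= samples[i]:
            strikes.append(i / sample_rate_hz)
    return strikes


def stride_times(strikes):
    """Durations between consecutive heel strikes of the same foot."""
    return [later - earlier for earlier, later in zip(strikes, strikes[1:])]
```

A real signal would likely need debouncing or low-pass filtering before thresholding, since a single noisy sample near the threshold would otherwise register as an extra heel strike.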
