Yuki Nagai

Equivariant Transformer is all you need

Oct 20, 2023

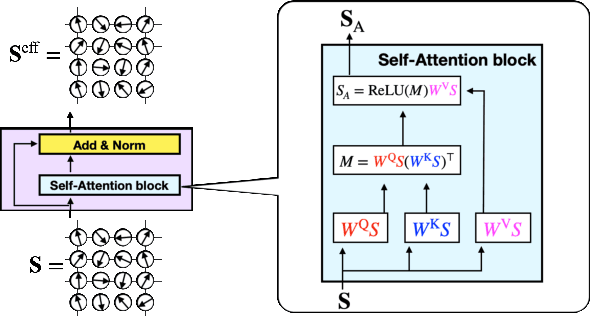

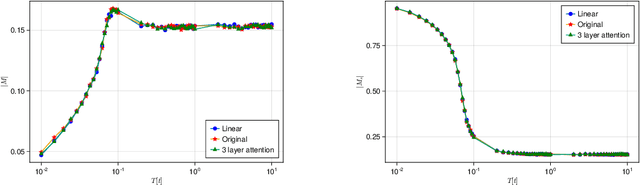

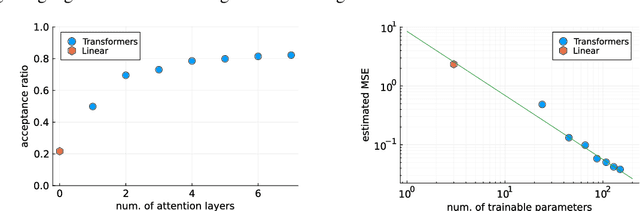

Abstract: Machine learning, and deep learning in particular, has been accelerating computational physics, including the simulation of systems on a lattice. Equivariance is essential for simulating a physical system because it imposes a strong inductive bias on the probability distribution described by a machine learning model. This reduces the risk of erroneous extrapolation that deviates from data symmetries and physical laws. However, imposing symmetry on the model sometimes results in a poor acceptance rate in self-learning Monte Carlo (SLMC). On the other hand, attention, as used in Transformers such as GPT, provides a large model capacity. We introduce symmetry-equivariant attention to SLMC. To evaluate our architecture, we apply it to a spin-fermion model on a two-dimensional lattice. We find that it overcomes the poor acceptance rates of linear models, and we observe a scaling law for the acceptance rate similar to that of large language models built on Transformers.
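
To make "symmetry-equivariant attention on a lattice" concrete, here is a minimal NumPy sketch of single-head self-attention over the sites of a periodic L1-by-L2 lattice. It is not the architecture from the paper: the function name `lattice_self_attention`, the shared projections `w_q`, `w_k`, `w_v`, and the relative-position bias table `rel_bias` are illustrative assumptions. Because every site uses the same projections and the bias depends only on the displacement between two sites modulo the lattice size, translating the input configuration translates the output in exactly the same way.

```python
import numpy as np


def lattice_self_attention(x, w_q, w_k, w_v, rel_bias):
    """Single-head self-attention over all sites of a periodic 2D lattice.

    Hypothetical illustration, not the paper's layer.
    x:        (L1, L2, d) array with one d-dimensional feature vector per site.
    w_q, w_k: (d, d_k) query/key projections shared by every site.
    w_v:      (d, d_v) value projection shared by every site.
    rel_bias: (L1, L2) additive attention biases indexed by the
              displacement (j - i) mod (L1, L2) between sites i and j.
    Returns an (L1, L2, d_v) array.
    """
    L1, L2, d = x.shape
    n = L1 * L2
    sites = x.reshape(n, d)  # row-major: site index = row * L2 + col
    q, k, v = sites @ w_q, sites @ w_k, sites @ w_v
    scores = q @ k.T / np.sqrt(q.shape[1])  # (n, n) scaled dot products

    # Periodic displacement from site i to site j for every pair (i, j).
    row, col = np.divmod(np.arange(n), L2)
    d_row = (row[None, :] - row[:, None]) % L1
    d_col = (col[None, :] - col[:, None]) % L2
    scores = scores + rel_bias[d_row, d_col]

    # Softmax over the attended sites j, shifted for numerical stability.
    scores -= scores.max(axis=1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)
    return (weights @ v).reshape(L1, L2, -1)


# Check translation equivariance on a random configuration.
rng = np.random.default_rng(0)
L, d, d_k = 6, 3, 4
x = rng.normal(size=(L, L, d))
w_q, w_k, w_v = (rng.normal(size=(d, d_k)) for _ in range(3))
rel_bias = rng.normal(size=(L, L))

out = lattice_self_attention(x, w_q, w_k, w_v, rel_bias)
shift = (1, 2)
out_of_shifted = lattice_self_attention(np.roll(x, shift, axis=(0, 1)), w_q, w_k, w_v, rel_bias)
print(np.allclose(out_of_shifted, np.roll(out, shift, axis=(0, 1))))  # True
```

The relative-position bias lets attention distinguish near and far neighbours without breaking translation symmetry. Dropping it leaves a layer that is equivariant under every permutation of sites, which discards all information about the lattice geometry.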

Digital Watermarking for Deep Neural Networks

Feb 06, 2018

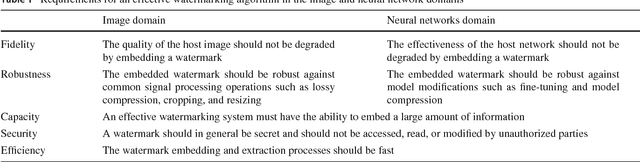

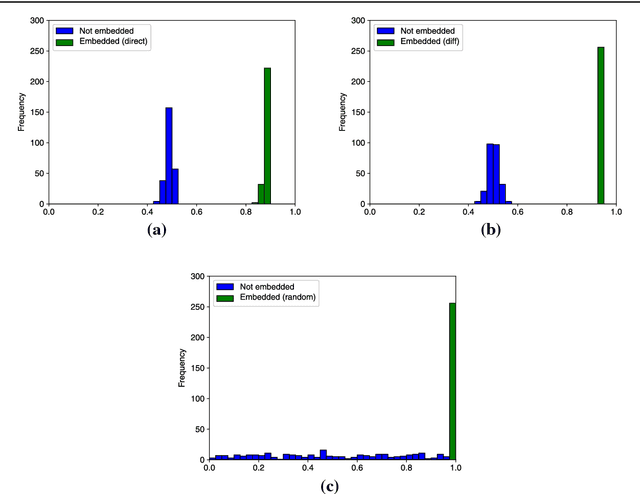

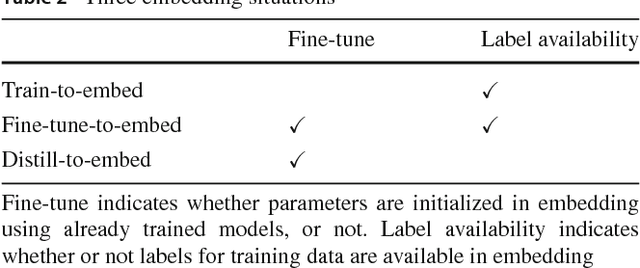

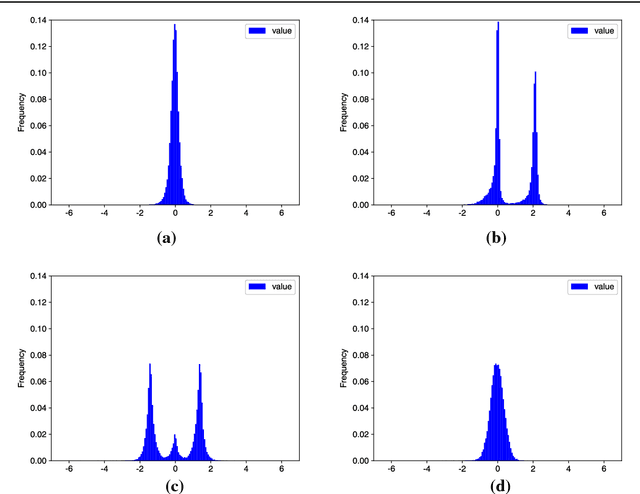

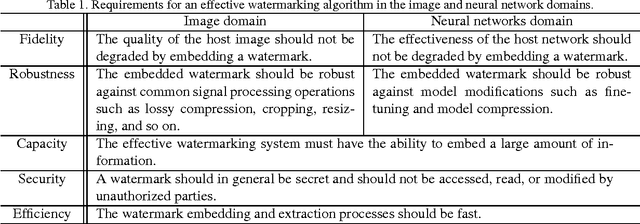

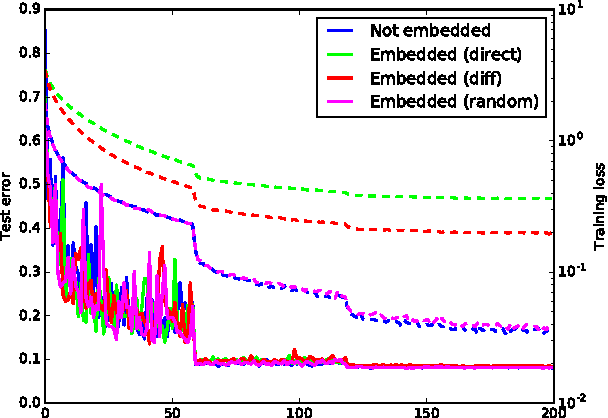

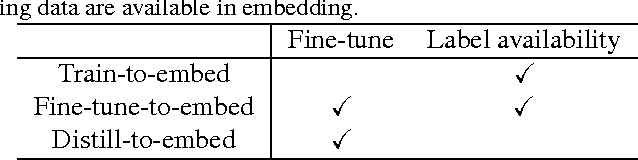

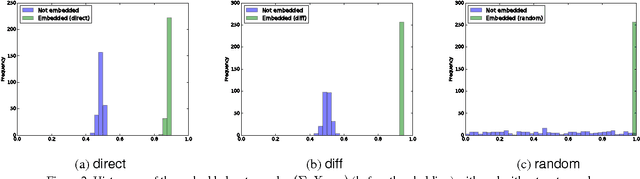

Abstract: Although deep neural networks have made tremendous progress in the area of multimedia representation, training neural models requires a large amount of data and time. It is well known that using trained models as initial weights often achieves lower training error than training networks without pre-training. A fine-tuning step helps both to reduce the computational cost and to improve performance. Therefore, sharing trained models has been very important for the rapid progress of research and development. In addition, trained models could be important assets for the owner(s) who trained them; hence we regard trained models as intellectual property. In this paper, we propose a digital watermarking technology for ownership authorization of deep neural networks. First, we formulate a new problem: embedding watermarks into deep neural networks. We also define requirements, embedding situations, and attack types for watermarking in deep neural networks. Second, we propose a general framework for embedding a watermark in model parameters using a parameter regularizer. Our approach does not impair the performance of networks into which a watermark is placed because the watermark is embedded while training the host network. Finally, we perform comprehensive experiments to reveal the potential of watermarking deep neural networks as the basis of this new research effort. We show that our framework can embed a watermark during the training of a deep neural network from scratch, as well as during fine-tuning and distilling, without impairing its performance. The embedded watermark does not disappear even after fine-tuning or parameter pruning; the watermark remains complete even after 65% of parameters are pruned.
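
The abstract describes embedding a watermark through a parameter regularizer. The NumPy sketch below shows one formulation of such a regularizer, written under our own assumptions rather than taken from the paper. A T-bit string `bits` is embedded into the filter-averaged weights `w` of a convolution kernel by minimizing the binary cross-entropy between `bits` and `sigmoid(key @ w)`, where `key` is a secret random projection matrix held by the owner. All function names and sizes are hypothetical. In real training this term would be added, with some weight, to the task loss; here only the regularizer is optimized so the example stays small.

```python
import numpy as np


def flatten_mean_kernel(kernel):
    """Average an (S, S, D, L) conv kernel over its L filters; flatten to length S*S*D."""
    return kernel.mean(axis=3).ravel()


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def watermark_loss_and_grad(w, key, bits):
    """Mean binary cross-entropy between target bits and sigmoid(key @ w).

    w:    (M,) flattened, filter-averaged weights.
    key:  (T, M) secret projection matrix (hypothetical).
    bits: (T,) target watermark bits in {0, 1}.
    Returns (loss, d loss / d w).
    """
    y = sigmoid(key @ w)
    eps = 1e-12  # guards the logarithms against y == 0 or y == 1
    loss = -np.mean(bits * np.log(y + eps) + (1 - bits) * np.log(1 - y + eps))
    grad = key.T @ (y - bits) / len(bits)
    return loss, grad


def extract_watermark(w, key):
    """Read each bit as the sign of one projection of the weights."""
    return (key @ w >= 0).astype(int)


rng = np.random.default_rng(0)
S, D, L_filters, T = 3, 8, 16, 16
kernel = rng.normal(scale=0.1, size=(S, S, D, L_filters))
key = rng.normal(size=(T, S * S * D))
bits = rng.integers(0, 2, size=T)

lr = 0.25
for _ in range(3000):
    _, g = watermark_loss_and_grad(flatten_mean_kernel(kernel), key, bits)
    # The exact gradient for each filter is g / L_filters. Moving every filter
    # by lr * g instead (step size scaled by L_filters) makes the averaged
    # vector w move by exactly lr * g.
    kernel -= lr * g.reshape(S, S, D, 1)

w = flatten_mean_kernel(kernel)
print(watermark_loss_and_grad(w, key, bits)[0])
print((extract_watermark(w, key) == bits).all())
```

Extraction in this formulation needs only the key and the trained weights, not the training data: each bit is the sign of one projection of `w`.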

Embedding Watermarks into Deep Neural Networks

Apr 20, 2017

Abstract: Deep neural networks have recently achieved significant progress. Sharing trained models of these deep neural networks is very important for rapid progress in researching and developing deep neural network systems. At the same time, it is necessary to protect the rights of shared trained models. To this end, we propose to use digital watermarking technology to protect intellectual property or detect intellectual property infringement of trained models. First, we formulate a new problem: embedding watermarks into deep neural networks. We also define requirements, embedding situations, and attack types for watermarking deep neural networks. Second, we propose a general framework to embed a watermark into model parameters using a parameter regularizer. Our approach does not hurt the performance of networks into which a watermark is embedded. Finally, we perform comprehensive experiments to reveal the potential of watermarking deep neural networks as a basis for this new problem. We show that our framework can embed a watermark when training a network from scratch, fine-tuning, and distilling, without hurting the performance of the deep neural network. The embedded watermark does not disappear even after fine-tuning or parameter pruning; the watermark remains completely intact even after 65% of parameters are pruned. The implementation of this research is available at https://github.com/yu4u/dnn-watermark
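
The pruning-robustness claim suggests a simple experiment: prune the watermarked weights and measure how many extracted bits flip. The sketch below assumes magnitude pruning (zeroing the entries with the smallest absolute values), which is one common choice and not necessarily the procedure used in the paper. `magnitude_prune` and `bit_error_rate` are hypothetical helpers. The "watermarked" vector in the demo is a random stand-in whose bits are simply defined as whatever the key currently extracts, so the printed post-pruning error rate says nothing about the paper's reported result.

```python
import numpy as np


def magnitude_prune(w, fraction):
    """Return a copy of w with the `fraction` of entries of smallest |w| set to zero."""
    pruned = np.array(w, dtype=float)  # copy so the caller's array is untouched
    k = int(round(fraction * pruned.size))
    if k > 0:
        smallest = np.argsort(np.abs(pruned), axis=None)[:k]
        pruned.flat[smallest] = 0.0
    return pruned


def bit_error_rate(w, key, bits):
    """Fraction of watermark bits that differ after thresholding key @ w at zero."""
    extracted = (key @ w >= 0).astype(int)
    return float(np.mean(extracted != bits))


rng = np.random.default_rng(1)
w = rng.normal(size=100)            # stand-in for watermarked, flattened weights
key = rng.normal(size=(16, 100))    # hypothetical secret key
bits = (key @ w >= 0).astype(int)   # simulate bits that are already embedded in w

pruned = magnitude_prune(w, 0.65)
print(np.count_nonzero(pruned == 0))   # 65
print(bit_error_rate(w, key, bits))    # 0.0
print(bit_error_rate(pruned, key, bits))
```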
