Yuchen Mu

SUR-FeatNet: Predicting the Satisfied User Ratio Curve for Image Compression with Deep Feature Learning

Jan 07, 2020

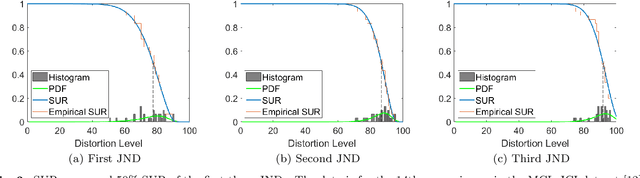

Abstract: The Satisfied User Ratio (SUR) curve for a lossy image compression scheme, e.g., JPEG, gives the distribution function of the Just Noticeable Difference (JND), the smallest distortion level that can be perceived by a subject when a reference image is compared to a distorted one. A sequence of JNDs can be defined with a suitable successive choice of reference images. We propose the first deep learning approach to predict SUR curves. We show how to exploit maximum likelihood estimation and the Kolmogorov-Smirnov test to select a suitable parametric model for the distribution function. We then use deep feature learning to predict samples of the SUR curve and apply the method of least squares to fit the parametric model to the predicted samples. Our deep learning approach relies on a Siamese Convolutional Neural Network (CNN), transfer learning, and deep feature learning, using pairs consisting of a reference image and a compressed image for training. Experiments on the MCL-JCI dataset showed state-of-the-art performance. For example, the mean Bhattacharyya distances between the predicted and ground truth first, second, and third JND distributions were 0.0810, 0.0702, and 0.0522, respectively, and the corresponding average absolute differences of the peak signal-to-noise ratio at the median of the distributions were 0.56, 0.65, and 0.53 dB.
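The fitting and evaluation steps named in the abstract can be illustrated with a minimal Python sketch. It rests on several assumptions that the abstract does not confirm: the SUR at a distortion level is taken to be the fraction of subjects whose JND lies above that level, the parametric family is assumed to be Gaussian (the abstract does not name the model selected by the Kolmogorov-Smirnov test), the least-squares fit is a brute-force search over a hypothetical parameter grid, and the Bhattacharyya distance is computed in its discrete form. All function names are illustrative, and the deep feature learning stage that predicts the SUR samples is not shown.

```python
import math

def empirical_sur(jnd_samples, levels):
    """Fraction of subjects whose JND lies above each distortion level (assumed SUR definition)."""
    n = len(jnd_samples)
    return [sum(1 for j in jnd_samples if j > x) / n for x in levels]

def gaussian_sur(x, mu, sigma):
    """Model SUR as 1 minus a Gaussian CDF (an assumed parametric family)."""
    return 0.5 * (1.0 - math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def fit_least_squares(levels, sur_values, mus, sigmas):
    """Return the (mu, sigma) on a hypothetical grid that minimizes the squared error."""
    best = None
    for mu in mus:
        for sigma in sigmas:
            sse = sum((gaussian_sur(x, mu, sigma) - s) ** 2
                      for x, s in zip(levels, sur_values))
            if best is None or sse < best[0]:
                best = (sse, mu, sigma)
    return best[1], best[2]

def bhattacharyya_distance(p, q):
    """Discrete Bhattacharyya distance between two distributions on the same support."""
    bc = sum(math.sqrt(a * b) for a, b in zip(p, q))
    return math.inf if bc == 0 else -math.log(bc)

# Usage: stand-in "predicted" SUR samples generated from a known curve,
# then recovered by the grid-based least-squares fit.
levels = list(range(1, 101))                      # hypothetical distortion levels
predicted = [gaussian_sur(x, 40, 10) for x in levels]
mu_hat, sigma_hat = fit_least_squares(levels, predicted, range(1, 101), range(1, 31))
```

A gradient-based solver would normally replace the grid search; the grid keeps the sketch dependency-free and makes the objective explicit.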
