Youshaa Murhij

OFMPNet: Deep End-to-End Model for Occupancy and Flow Prediction in Urban Environment

Apr 02, 2024

Abstract: Motion prediction is pivotal for autonomous driving systems, because it provides the data a vehicle needs to choose a behavior strategy within its surroundings. Existing motion prediction techniques primarily predict the future trajectory of each agent in the scene individually, using its past trajectory data. In this paper, we introduce an end-to-end neural network methodology designed to predict the future behaviors of all dynamic objects in the environment. This approach leverages the occupancy map and the scene's motion flow. We investigate various alternatives for constructing a deep encoder-decoder model called OFMPNet. This model takes a sequence of bird's-eye-view road images, an occupancy grid, and prior motion flow as input. The encoder can incorporate transformer, attention-based, or convolutional units. The decoder can use both convolutional modules and recurrent blocks. Additionally, we propose a novel time-weighted motion flow loss, whose application substantially decreases end-point error. Our approach achieves state-of-the-art results on the Waymo Occupancy and Flow Prediction benchmark, with a Soft IoU of 52.1% and an AUC of 76.75% on Flow-Grounded Occupancy.
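The abstract does not spell out the form of the time-weighted motion flow loss, so the following is only an illustrative sketch of the general idea: an end-point error averaged per future time step and then combined with per-step weights. The function name `time_weighted_epe`, the data layout, and the default linear ramp of weights are all assumptions made for this example, not the formulation used in OFMPNet.

```python
import math


def time_weighted_epe(pred, target, weights=None):
    """Weighted mean end-point error over future time steps.

    pred, target: lists over time steps; each step is a list of (dx, dy)
    flow vectors, one per grid cell. This layout is hypothetical.
    weights: one weight per time step. The default linear ramp is an
    assumption for illustration, not the weighting from the paper.
    """
    num_steps = len(pred)
    if weights is None:
        # Hypothetical choice: later time steps contribute more.
        weights = [(t + 1) / num_steps for t in range(num_steps)]

    total = 0.0
    for w, step_pred, step_true in zip(weights, pred, target):
        # End-point error: Euclidean distance between flow vectors.
        errors = [
            math.hypot(px - tx, py - ty)
            for (px, py), (tx, ty) in zip(step_pred, step_true)
        ]
        total += w * sum(errors) / len(errors)

    # Normalize so the result stays on the scale of a single-step error.
    return total / sum(weights)
```

With uniform weights this reduces to the plain mean end-point error, which makes the effect of any chosen weighting easy to compare against an unweighted baseline.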

Rethinking Voxelization and Classification for 3D Object Detection

Jan 10, 2023

Abstract: The main challenge in 3D object detection from LiDAR point clouds is achieving real-time performance without compromising the reliability of the network. In other words, the detection network must be sufficiently confident in its predictions. In this paper, we present a solution that improves inference speed and precision at the same time by implementing a fast dynamic voxelizer that works on fast pillar-based models in the same way a voxelizer works on slow voxel-based models. In addition, we propose a lightweight detection sub-head model that classifies predicted objects and filters out false detections, significantly improving model precision at negligible time and computing cost. The developed code is publicly available at: https://github.com/YoushaaMurhij/RVCDet.
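As a rough illustration of what dynamic voxelization means for pillar-based models, the sketch below assigns every point to a pillar by its grid index and keeps all points, with no fixed per-pillar capacity and no padding. It is a minimal pure-Python example written for this listing; the names `dynamic_pillarize` and `pillar_means`, the pillar size, and the grid origin are assumptions and are not taken from the RVCDet code.

```python
import math
from collections import defaultdict


def dynamic_pillarize(points, pillar_size=0.5, x_min=0.0, y_min=0.0):
    """Group (x, y, z) points by pillar index, keeping every point.

    pillar_size, x_min and y_min are hypothetical grid parameters.
    """
    pillars = defaultdict(list)
    for x, y, z in points:
        # A pillar spans the full height, so only x and y pick the cell.
        key = (
            math.floor((x - x_min) / pillar_size),
            math.floor((y - y_min) / pillar_size),
        )
        pillars[key].append((x, y, z))
    return dict(pillars)


def pillar_means(pillars):
    """Reduce each pillar to the mean of its points (a simple feature)."""
    return {
        key: tuple(sum(coord) / len(pts) for coord in zip(*pts))
        for key, pts in pillars.items()
    }
```

A practical implementation would run this grouping on the GPU with scatter operations; the dictionary version only shows the point-to-pillar mapping.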

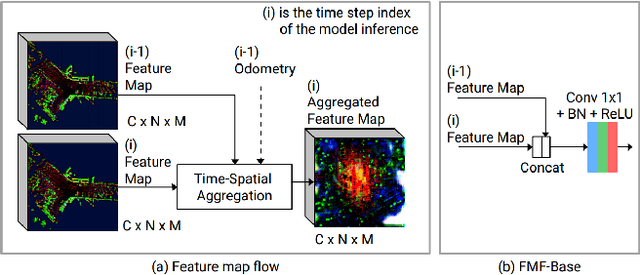

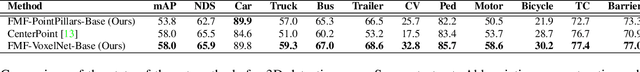

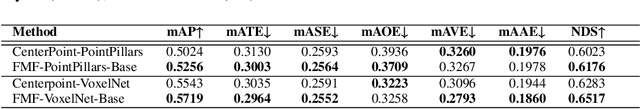

Real-time 3D Object Detection using Feature Map Flow

Jun 26, 2021

Abstract: In this paper, we present a real-time 3D detection approach based on spatio-temporal aggregation of feature maps from different time steps of deep neural model inference (named feature map flow, FMF). The proposed approach improves the detection quality of a center-based 3D detection baseline and provides real-time performance on the nuScenes and Waymo benchmarks. Code is available at https://github.com/YoushaaMurhij/FMFNet
