Yoav Ram

Near-perfect photo-ID of the Hula painted frog with zero-shot deep local-feature matching

Jan 13, 2026

Abstract: Accurate individual identification is essential for monitoring rare amphibians, yet invasive marking is often unsuitable for critically endangered species. We evaluate state-of-the-art computer-vision methods for photographic re-identification of the Hula painted frog (Latonia nigriventer) using 1,233 ventral images from 191 individuals collected during 2013-2020 capture-recapture surveys. We compare deep local-feature matching in a zero-shot setting with deep global-feature embedding models. The local-feature pipeline achieves 98% top-1 closed-set identification accuracy, outperforming all global-feature models; fine-tuning improves the best global-feature model to 60% top-1 (91% top-10) but remains below local matching. To combine scalability with accuracy, we implement a two-stage workflow in which a fine-tuned global-feature model retrieves a short candidate list that is re-ranked by local-feature matching, reducing end-to-end runtime from 6.5-7.8 hours to ~38 minutes while maintaining ~96% top-1 closed-set accuracy on the labeled dataset. Separation of match scores between same- and different-individual pairs supports thresholding for open-set identification, enabling practical handling of novel individuals. We deploy this pipeline as a web application for routine field use, providing rapid, standardized, non-invasive identification to support conservation monitoring and capture-recapture analyses. Overall, in this species, zero-shot deep local-feature matching outperformed global-feature embedding and provides a strong default for photo-identification.
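The two-stage workflow and open-set thresholding described in this abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the function names (`two_stage_identify`, `cosine`, `jaccard`), the toy gallery, the shortlist size `k`, and the threshold value are all hypothetical, and a simple Jaccard overlap of keypoint IDs stands in for deep local-feature matching.

```python
import math

def cosine(a, b):
    """Cosine similarity between two global embeddings (plain lists of floats)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def jaccard(kp_a, kp_b):
    """Toy stand-in for a local-feature match score: overlap of keypoint ID sets."""
    union = kp_a | kp_b
    return len(kp_a & kp_b) / len(union) if union else 0.0

def two_stage_identify(query, gallery, local_score, k=10, threshold=0.5):
    """Return (individual_id or None, best local score).

    query:   (embedding, local_features)
    gallery: list of (individual_id, embedding, local_features)
    """
    q_emb, q_local = query
    # Stage 1: cheap global retrieval keeps only the k most similar candidates.
    shortlist = sorted(gallery, key=lambda g: cosine(q_emb, g[1]), reverse=True)[:k]
    if not shortlist:
        return None, 0.0
    # Stage 2: the (more expensive) local score re-ranks the shortlist only.
    scored = [(local_score(q_local, g[2]), g[0]) for g in shortlist]
    best_score, best_id = max(scored, key=lambda s: s[0])
    # Open-set rule: a best score below the threshold is treated as a novel individual.
    if best_score < threshold:
        return None, best_score
    return best_id, best_score

# Hypothetical toy data: (id, global embedding, set of local keypoint IDs).
gallery = [
    ("frog_a", [1.0, 0.0], {1, 2, 3, 4}),
    ("frog_b", [0.9, 0.1], {5, 6, 7, 8}),
    ("frog_c", [0.0, 1.0], {9, 10}),
]
known_query = ([1.0, 0.05], {5, 6, 7, 9})   # globally closest to frog_a, locally matches frog_b
novel_query = ([0.0, 1.0], {20, 21})        # shares no keypoints with any gallery image

known_result = two_stage_identify(known_query, gallery, jaccard, k=2, threshold=0.5)
novel_result = two_stage_identify(novel_query, gallery, jaccard, k=2, threshold=0.5)
print(known_result, novel_result)
```

In this toy case the global stage ranks `frog_a` first, but the local re-ranking selects `frog_b`, which mirrors the abstract's point that local matching corrects the weaker global ranking. The runtime saving comes from running the local score on `k` candidates rather than on the whole gallery.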

What makes a language easy to deep-learn?

Feb 23, 2023

Abstract: Neural networks drive the success of natural language processing. A fundamental property of natural languages is their compositional structure, allowing us to describe new meanings systematically. However, neural networks notoriously struggle with systematic generalization and do not necessarily benefit from a compositional structure in emergent communication simulations. Here, we test how neural networks compare to humans in learning and generalizing a new language. We do this by closely replicating an artificial language learning study (conducted originally with human participants) and evaluating the memorization and generalization capabilities of deep neural networks with respect to the degree of structure in the input language. Our results show striking similarities between humans and deep neural networks: More structured linguistic input leads to more systematic generalization and better convergence between humans and neural network agents and between different neural agents. We then replicate this structure bias found in humans and our recurrent neural networks with a Transformer-based large language model (GPT-3), showing a similar benefit for structured linguistic input regarding generalization systematicity and memorization errors. These findings show that the underlying structure of languages is crucial for systematic generalization. Due to the correlation between community size and linguistic structure in natural languages, our findings underscore the challenge of automated processing of low-resource languages. Nevertheless, the similarity between humans and machines opens new avenues for language evolution research.

Emergent Communication for Understanding Human Language Evolution: What's Missing?

Apr 22, 2022

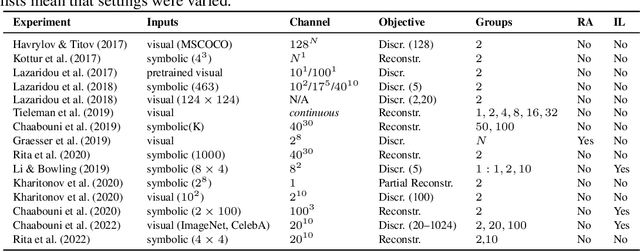

Abstract: Emergent communication protocols among humans and artificial neural network agents do not yet share the same properties and show some critical mismatches in results. We describe three important phenomena with respect to the emergence and benefits of compositionality: ease-of-learning, generalization, and group size effects (i.e., larger groups create more systematic languages). The latter two are not fully replicated with neural agents, which hinders the use of neural emergent communication for language evolution research. We argue that one possible reason for these mismatches is that key cognitive and communicative constraints of humans are not yet integrated. Specifically, in humans, memory constraints and the alternation between the roles of speaker and listener underlie the emergence of linguistic structure, yet these constraints are typically absent in neural simulations. We suggest that introducing such communicative and cognitive constraints would promote more linguistically plausible behaviors with neural agents.