Ying-Hong Chan

Improving Controllability of Educational Question Generation by Keyword Provision

Dec 02, 2021

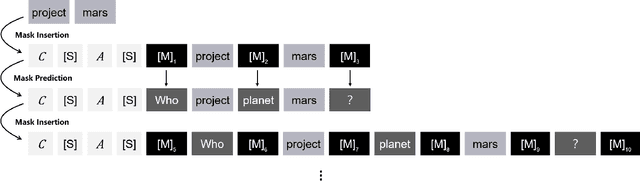

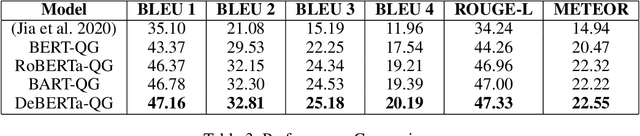

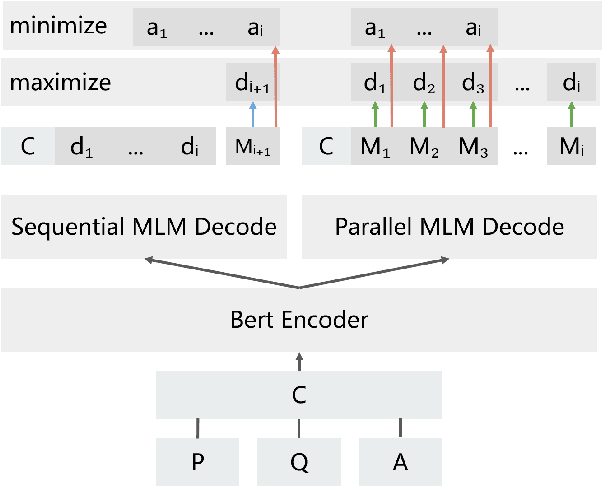

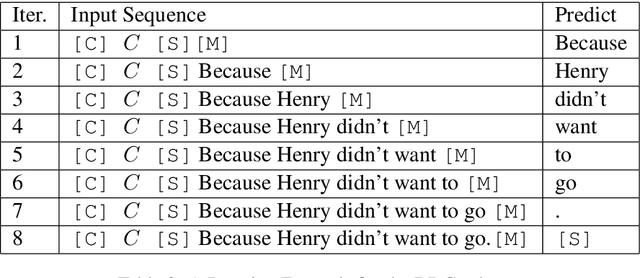

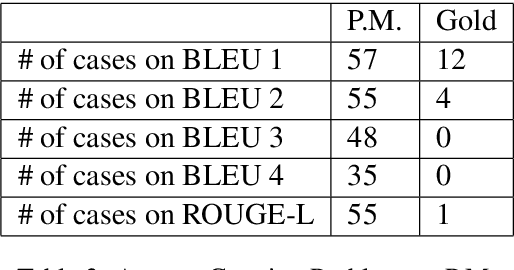

Abstract: Question Generation (QG) is receiving increasing research attention in the NLP community. One motivation for QG is that it significantly facilitates the preparation of educational reading practice and assessments. Although significant advances in QG techniques have been reported, current QG results are not ideal for educational reading practice and assessment in terms of *controllability* and *question difficulty*. This paper reports our results addressing these two issues. First, we report a state-of-the-art exam-like QG model that improves on the current best model's BLEU-4 score from 11.96 to 20.19. Second, we propose to investigate a variant of the QG setting that allows users to provide keywords to guide the direction of QG. We also present a simple but effective model for this QG controllability task. Experimental results demonstrate the feasibility and potential of the proposed keyword-provision QG model for improving QG diversity and controllability.

A BERT-based Distractor Generation Scheme with Multi-tasking and Negative Answer Training Strategies

Oct 12, 2020

Abstract: In this paper, we investigate two limitations of existing distractor generation (DG) methods. First, the quality of existing DG methods is still far from practical use, and there is still room for improvement. Second, existing DG designs mainly generate a single distractor, whereas practical multiple-choice question (MCQ) preparation calls for multiple distractors. To address these limitations, we present a new distractor generation scheme with multi-tasking and negative answer training strategies for effectively generating *multiple* distractors. The experimental results show that (1) our model advances the state-of-the-art BLEU-1 score from 28.65 to 39.81, and (2) the generated distractors are diverse and show strong distracting power for multiple-choice questions.
