Yifu Yang

A Cross-Domain Few-Shot Learning Method Based on Domain Knowledge Mapping

Apr 09, 2025

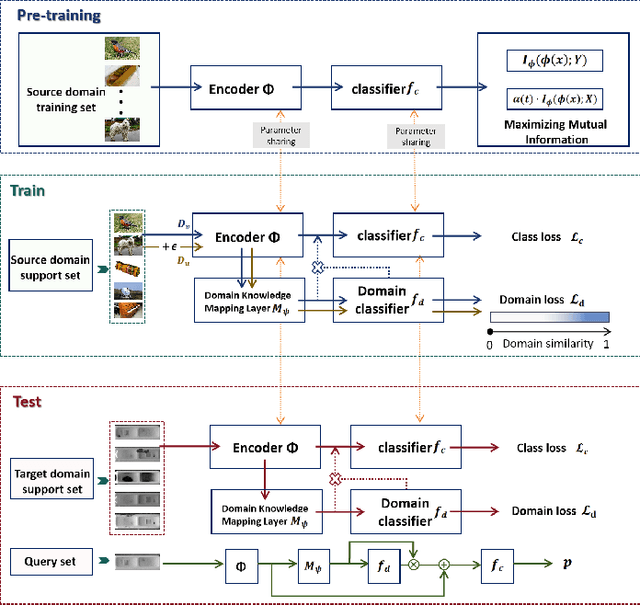

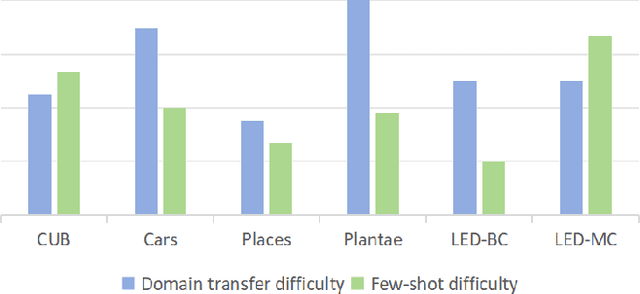

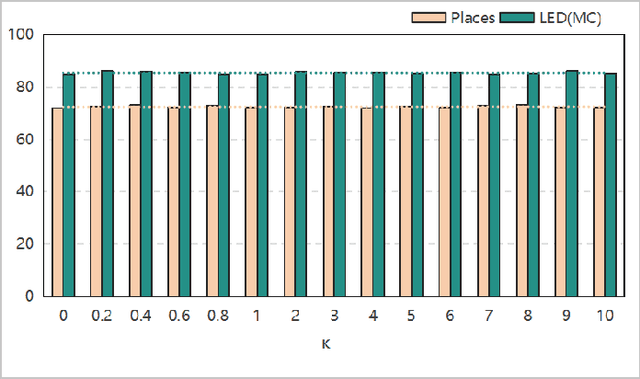

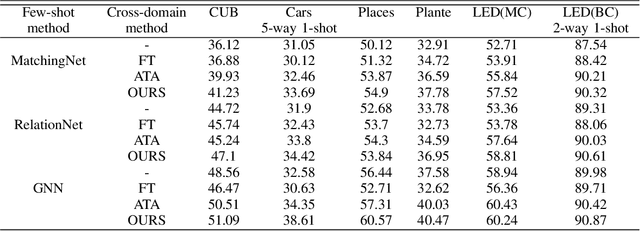

Abstract:In task-based few-shot learning paradigms, it is commonly assumed that different tasks are independently and identically distributed (i.i.d.). However, in real-world scenarios, the distribution encountered in few-shot learning can significantly differ from the distribution of existing data. Thus, how to effectively leverage existing data knowledge to enable models to quickly adapt to class variations under non-i.i.d. assumptions has emerged as a key research challenge. To address this challenge, this paper proposes a new cross-domain few-shot learning approach based on domain knowledge mapping, applied consistently throughout the pre-training, training, and testing phases. In the pre-training phase, our method integrates self-supervised and supervised losses by maximizing mutual information, thereby mitigating mode collapse. During the training phase, the domain knowledge mapping layer collaborates with a domain classifier to learn both domain mapping capabilities and the ability to assess domain adaptation difficulty. Finally, this approach is applied during the testing phase, rapidly adapting to domain variations through meta-training tasks on support sets, consequently enhancing the model's capability to transfer domain knowledge effectively. Experimental validation conducted across six datasets from diverse domains demonstrates the effectiveness of the proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge