Yehudit Aperstein

IRC-Bench: Recognizing Entities from Contextual Cues in First-Person Reminiscences

May 07, 2026

Abstract: When people recount personal memories, they often refer to people, places, and events indirectly, relying on contextual cues rather than explicit names. Such implicit references are central to reminiscence narratives: first-person accounts of lived experience used in therapeutic, archival, and social settings. They pose a difficult computational problem because the intended entity must be inferred from dispersed narrative evidence rather than from a local mention. We introduce IRC-Bench, the Implicit Reminiscence Context Benchmark, for evaluating implicit entity recognition in reminiscence transcripts. The benchmark targets non-locality: entity-identifying cues are distributed across multiple, non-contiguous clauses, unlike named entity recognition, entity linking, or coreference resolution. IRC-Bench comprises 25,136 samples constructed from 12,337 Wikidata-linked entities across 1,994 transcripts spanning 11 thematic domains. Each sample pairs an Entity-Grounded Narrative, in which the target entity is explicitly mentioned, with an Entity-Elided Narrative, in which direct mentions are removed. We evaluate 19 configurations across LLM generation, dense retrieval, RAG, and fine-tuning. QLoRA-adapted Llama 3.1 8B performs best in the open-world setting (38.94% exact match; 51.59% Jaccard), while fine-tuned DPR leads closed-world retrieval (35.38% Hit@1; 71.49% Hit@10). We release IRC-Bench with data, code, and evaluation tools.
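The metrics named in this abstract (exact match, Jaccard, Hit@k) can be illustrated with a minimal sketch. The normalization (lowercasing and whitespace tokenization) and the function names are assumptions made for illustration; this is not the benchmark's released evaluation code.

```python
def normalize(text: str) -> list[str]:
    """Lowercase and split on whitespace (an assumed normalization)."""
    return text.lower().split()


def exact_match(prediction: str, gold: str) -> bool:
    """True when prediction and gold are identical after normalization."""
    return normalize(prediction) == normalize(gold)


def jaccard(prediction: str, gold: str) -> float:
    """Token-set overlap: |intersection| / |union|."""
    pred_tokens = set(normalize(prediction))
    gold_tokens = set(normalize(gold))
    union = pred_tokens | gold_tokens
    if not union:
        return 1.0  # two empty strings are treated as identical
    return len(pred_tokens & gold_tokens) / len(union)


def hit_at_k(ranked_candidates: list[str], gold: str, k: int) -> bool:
    """True when the gold entity appears among the top-k ranked candidates."""
    return any(exact_match(c, gold) for c in ranked_candidates[:k])
```

Exact match and Jaccard suit the open-world (generation) setting, where a prediction is free text. Hit@k suits the closed-world (retrieval) setting, where the system ranks a fixed candidate list.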

Mapping License Plate Recoverability Under Extreme Viewing Angles for Opportunistic Urban Sensing

Apr 26, 2026

Abstract: Urban environments contain many imaging sensors built for specific purposes, including ATM, body-worn, CCTV, and dashboard cameras. Under the opportunistic sensing paradigm, these sensors can be repurposed for secondary inference tasks such as license plate recognition. Yet objects of interest in such imagery are often noisy, low-resolution, and captured from extreme viewpoints. Recent advances in AI-based restoration can recover useful information even from severely degraded images. A central challenge is determining which distortion parameters allow reliable recovery and which lead to inference failure. This paper introduces recoverability maps, a task-agnostic method for quantifying this boundary. The method combines a dense synthetic sweep of degradation parameters with two summary measures: boundary area-under-curve, which estimates the recoverable fraction of the parameter space, and a reliability score, which captures the frequency and severity of failures within that region. We demonstrate the method on license plate recognition from highly angled views under realistic camera artifacts. Several restoration architectures are trained and evaluated, including U-Net, Restormer, Pix2Pix, and SR3 diffusion. The best model recovers about 93% of the parameter space. Similar results across models suggest that sensing geometry, rather than architecture, sets the limit of recovery.

LLM-guided headline rewriting for clickability enhancement without clickbait

Mar 23, 2026

Abstract: Enhancing reader engagement while preserving informational fidelity is a central challenge in controllable text generation for news media. Optimizing news headlines for reader engagement is often conflated with clickbait, resulting in exaggerated or misleading phrasing that undermines editorial trust. We frame clickbait not as a separate stylistic category, but as an extreme outcome of disproportionate amplification of otherwise legitimate engagement cues. Based on this view, we formulate headline rewriting as a controllable generation problem, where specific engagement-oriented linguistic attributes are selectively strengthened under explicit constraints on semantic faithfulness and proportional emphasis. We present a guided headline rewriting framework built on a large language model (LLM) that uses the Future Discriminators for Generation (FUDGE) paradigm for inference-time control. The LLM is steered by two auxiliary guide models: (1) a clickbait scoring model that provides negative guidance to suppress excessive stylistic amplification, and (2) an engagement-attribute model that provides positive guidance aligned with target clickability objectives. Both guides are trained on neutral headlines drawn from a curated real-world news corpus. At the same time, clickbait variants are generated synthetically by rewriting these original headlines using an LLM under controlled activation of predefined engagement tactics. By adjusting guidance weights at inference time, the system generates headlines along a continuum from neutral paraphrases to more engaging yet editorially acceptable formulations. The proposed framework provides a principled approach for studying the trade-off between attractiveness, semantic preservation, and clickbait avoidance, and supports responsible LLM-based headline optimization in journalistic settings.
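FUDGE-style control reweights the base model's next-token distribution by a classifier's estimate that the extended prefix will satisfy an attribute. The sketch below shows one plausible way to combine a positive and a negative guide with adjustable weights. The function name, the use of `log(1 - p_clickbait)` for negative guidance, and the toy inputs are assumptions for illustration, not the paper's implementation.

```python
import math


def guided_next_token(
    lm_logprobs: dict[str, float],
    p_engaging: dict[str, float],
    p_clickbait: dict[str, float],
    w_pos: float = 1.0,
    w_neg: float = 1.0,
) -> dict[str, float]:
    """Reweight candidate next tokens with two guides and renormalize.

    lm_logprobs: log p(token | prefix) from the base LM.
    p_engaging / p_clickbait: guide probabilities, strictly between 0 and 1,
    that the prefix extended by each token ends up engaging / clickbait.
    """
    scores = {}
    for token, logprob in lm_logprobs.items():
        positive = w_pos * math.log(p_engaging[token])
        negative = w_neg * math.log(1.0 - p_clickbait[token])
        scores[token] = logprob + positive + negative
    # Softmax over the candidate set to obtain a proper distribution.
    top = max(scores.values())
    exp_scores = {t: math.exp(s - top) for t, s in scores.items()}
    total = sum(exp_scores.values())
    return {t: v / total for t, v in exp_scores.items()}
```

Setting both weights to zero recovers the base model's distribution over the candidates. Raising `w_neg` pushes probability away from tokens the clickbait guide flags, which corresponds to the continuum from neutral to more engaging headlines described in the abstract.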

Recognition of Abnormal Events in Surveillance Videos using Weakly Supervised Dual-Encoder Models

Nov 17, 2025

Abstract: We address the challenge of detecting rare and diverse anomalies in surveillance videos using only video-level supervision. Our dual-backbone framework combines convolutional and transformer representations through top-k pooling, achieving 90.7% area under the curve (AUC) on the UCF-Crime dataset.
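Top-k pooling is a common way to turn per-segment anomaly scores into a single video-level score when only video-level labels are available. A minimal sketch follows, assuming the pooled value is the mean of the k highest segment scores; the function name and this exact aggregation are illustrative assumptions, not details confirmed by the abstract.

```python
def top_k_pool(segment_scores: list[float], k: int) -> float:
    """Average the k highest segment-level anomaly scores."""
    if not segment_scores:
        raise ValueError("need at least one segment score")
    k = max(1, min(k, len(segment_scores)))  # clamp k to a valid range
    top = sorted(segment_scores, reverse=True)[:k]
    return sum(top) / k
```

With k = 1 this reduces to max pooling, and with k equal to the number of segments it reduces to mean pooling, so k trades sensitivity to short anomalies against robustness to noisy single segments.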

Reading Between the Lines: Classifying Resume Seniority with Large Language Models

Sep 11, 2025

Abstract: Accurately assessing candidate seniority from resumes is a critical yet challenging task, complicated by the prevalence of overstated experience and ambiguous self-presentation. In this study, we investigate the effectiveness of large language models (LLMs), including fine-tuned BERT architectures, for automating seniority classification in resumes. To rigorously evaluate model performance, we introduce a hybrid dataset comprising both real-world resumes and synthetically generated hard examples designed to simulate exaggerated qualifications and understated seniority. Using this dataset, we evaluate how well LLMs detect subtle linguistic cues associated with seniority inflation and implicit expertise. Our findings highlight promising directions for enhancing AI-driven candidate evaluation systems and mitigating bias introduced by self-promotional language. The dataset is available for the research community at https://bit.ly/4mcTovt

Towards Knowledge-Aware Document Systems: Modeling Semantic Coverage Relations via Answerability Detection

Sep 10, 2025

Abstract: Understanding how information is shared across documents, regardless of the format in which it is expressed, is critical for tasks such as information retrieval, summarization, and content alignment. In this work, we introduce a novel framework for modelling Semantic Coverage Relations (SCR), which classifies document pairs based on how their informational content aligns. We define three core relation types: equivalence, where both texts convey the same information using different textual forms or styles; inclusion, where one document fully contains the information of another and adds more; and semantic overlap, where each document presents partially overlapping content. To capture these relations, we adopt a question answering (QA)-based approach, using the answerability of shared questions across documents as an indicator of semantic coverage. We construct a synthetic dataset derived from the SQuAD corpus by paraphrasing source passages and selectively omitting information, enabling precise control over content overlap. This dataset allows us to benchmark generative language models and train transformer-based classifiers for SCR prediction. Our findings demonstrate that discriminative models significantly outperform generative approaches, with the RoBERTa-base model achieving the highest accuracy of 61.4% and the Random Forest-based model showing the best balance with a macro-F1 score of 52.9%. The results show that QA provides an effective lens for assessing semantic relations across stylistically diverse texts, offering insights into the capacity of current models to reason about information beyond surface similarity. The dataset and code developed in this study are publicly available to support reproducibility.
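The link between answerability and the three relation types can be illustrated with a rule-based sketch. It assumes each document has already been reduced to the set of shared question IDs it can answer; the function name and the rules themselves are illustrative assumptions, whereas the paper trains classifiers for this prediction.

```python
def coverage_relation(answerable_a: set[str], answerable_b: set[str]) -> str:
    """Map two sets of answerable question IDs to a coverage relation."""
    if answerable_a == answerable_b:
        return "equivalence"  # both documents answer exactly the same questions
    if answerable_a < answerable_b or answerable_b < answerable_a:
        return "inclusion"  # one document answers everything the other does, plus more
    if answerable_a & answerable_b:
        return "semantic overlap"  # some shared questions, some unique to each
    return "no shared coverage"  # outside the three relation types in the abstract
```

For example, if document A answers questions q1 and q2 while document B answers q1, q2, and q3, the sketch returns "inclusion".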

Boosted Training of Lightweight Early Exits for Optimizing CNN Image Classification Inference

Sep 10, 2025

Abstract: Real-time image classification on resource-constrained platforms demands inference methods that balance accuracy with strict latency and power budgets. Early-exit strategies address this need by attaching auxiliary classifiers to intermediate layers of convolutional neural networks (CNNs), allowing "easy" samples to terminate inference early. However, conventional training of early exits introduces a covariate shift: downstream branches are trained on full datasets, while at inference they process only the harder, non-exited samples. This mismatch limits efficiency-accuracy trade-offs in practice. We introduce the Boosted Training Scheme for Early Exits (BTS-EE), a sequential training approach that aligns branch training with inference-time data distributions. Each branch is trained and calibrated before the next, ensuring robustness under selective inference conditions. To further support embedded deployment, we propose a lightweight branch architecture based on 1D convolutions and a Class Precision Margin (CPM) calibration method that enables per-class threshold tuning for reliable exit decisions. Experiments on the CINIC-10 dataset with a ResNet18 backbone demonstrate that BTS-EE consistently outperforms non-boosted training across 64 configurations, achieving up to 45 percent reduction in computation with only 2 percent accuracy degradation. These results expand the design space for deploying CNNs in real-time image processing systems, offering practical efficiency gains for applications such as industrial inspection, embedded vision, and UAV-based monitoring.
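Early-exit inference with per-class thresholds can be sketched as a simple cascade. The names below are hypothetical, the thresholds are taken as given rather than derived by CPM calibration, and each branch is modelled as a plain callable instead of a head on intermediate CNN features.

```python
from typing import Callable, Sequence

# Each head maps an input to class probabilities. In a real CNN the branches
# would read intermediate feature maps; plain callables keep the sketch small.
Head = Callable[[Sequence[float]], Sequence[float]]


def predict_with_early_exit(
    x: Sequence[float],
    branches: Sequence[Head],
    thresholds: Sequence[Sequence[float]],
    final_head: Head,
) -> tuple[int, int]:
    """Return (predicted class, index of the exit that produced it)."""
    for exit_index, (branch, per_class) in enumerate(zip(branches, thresholds)):
        probs = branch(x)
        label = max(range(len(probs)), key=lambda c: probs[c])
        # Exit only if the confidence clears the threshold for this class.
        if probs[label] >= per_class[label]:
            return label, exit_index
    # No branch was confident enough: fall through to the full network.
    probs = final_head(x)
    label = max(range(len(probs)), key=lambda c: probs[c])
    return label, len(branches)
```

Under this cascade, a branch at depth i only ever sees samples that earlier exits declined, which is the inference-time distribution that the sequential training scheme in the abstract aims to match.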

Do Large Language Models Need Intent? Revisiting Response Generation Strategies for Service Assistant

Sep 05, 2025

Abstract: In the era of conversational AI, generating accurate and contextually appropriate service responses remains a critical challenge. This raises a central question: Is explicit intent recognition a prerequisite for generating high-quality service responses, or can models bypass this step and produce effective replies directly? This paper conducts a rigorous comparative study to address this fundamental design dilemma. Leveraging two publicly available service interaction datasets, we benchmark several state-of-the-art language models, including a fine-tuned T5 variant, across both paradigms: Intent-First Response Generation and Direct Response Generation. Evaluation metrics encompass both linguistic quality and task success rates, revealing surprising insights into the necessity or redundancy of explicit intent modelling. Our findings challenge conventional assumptions in conversational AI pipelines, offering actionable guidelines for designing more efficient and effective response generation systems.

Code Review Without Borders: Evaluating Synthetic vs. Real Data for Review Recommendation

Sep 05, 2025

Abstract: Automating the decision of whether a code change requires manual review is vital for maintaining software quality in modern development workflows. However, the emergence of new programming languages and frameworks creates a critical bottleneck: while large volumes of unlabelled code are readily available, there is an insufficient amount of labelled data to train supervised models for review classification. We address this challenge by leveraging Large Language Models (LLMs) to translate code changes from well-resourced languages into equivalent changes in underrepresented or emerging languages, generating synthetic training data where labelled examples are scarce. We assume that although LLMs have learned the syntax and semantics of new languages from available unlabelled code, they have yet to fully grasp which code changes are considered significant or review-worthy within these emerging ecosystems. To overcome this, we use LLMs to generate synthetic change examples and train supervised classifiers on them. We systematically compare the performance of these classifiers against models trained on real labelled data. Our experiments across multiple GitHub repositories and language pairs demonstrate that LLM-generated synthetic data can effectively bootstrap review recommendation systems, narrowing the performance gap even in low-resource settings. This approach provides a scalable pathway to extend automated code review capabilities to rapidly evolving technology stacks, even in the absence of annotated data.

Stitching the Story: Creating Panoramic Incident Summaries from Body-Worn Footage

Sep 04, 2025

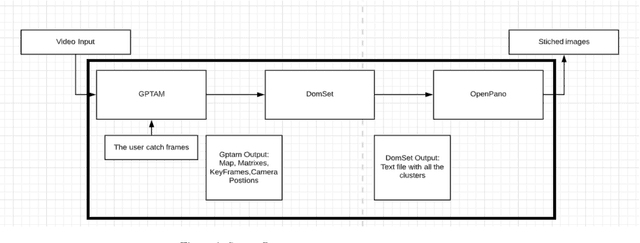

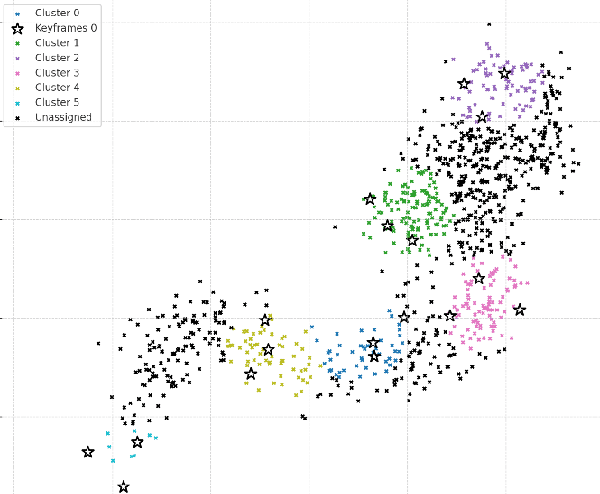

Abstract: First responders widely adopt body-worn cameras to document incident scenes and support post-event analysis. However, reviewing lengthy video footage is impractical in time-critical situations. Effective situational awareness demands a concise visual summary that can be quickly interpreted. This work presents a computer vision pipeline that transforms body-camera footage into informative panoramic images summarizing the incident scene. Our method leverages monocular Simultaneous Localization and Mapping (SLAM) to estimate camera trajectories and reconstruct the spatial layout of the environment. Key viewpoints are identified by clustering camera poses along the trajectory, and representative frames from each cluster are selected. These frames are fused into spatially coherent panoramic images using multi-frame stitching techniques. The resulting summaries enable rapid understanding of complex environments and facilitate efficient decision-making and incident review.
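The viewpoint-selection step can be sketched as follows. The abstract does not say which clustering algorithm is used, so this sketch assumes a simple sequential scheme over camera positions only (ignoring orientation): a new cluster starts whenever a pose moves farther than a hypothetical `radius` from the running centroid, and the frame nearest each centroid is taken as the representative.

```python
import math

Position = tuple[float, float, float]


def centroid_of(points: list[Position]) -> Position:
    """Component-wise mean of a list of 3D positions."""
    return tuple(sum(axis) / len(points) for axis in zip(*points))


def cluster_trajectory(positions: list[Position], radius: float) -> list[list[int]]:
    """Group consecutive frame indices into clusters of nearby camera positions."""
    clusters: list[list[int]] = []
    centroid = None
    for idx, pos in enumerate(positions):
        if centroid is not None and math.dist(pos, centroid) <= radius:
            clusters[-1].append(idx)
            centroid = centroid_of([positions[i] for i in clusters[-1]])
        else:
            clusters.append([idx])  # camera moved away: start a new viewpoint
            centroid = pos
    return clusters


def representative_frames(
    positions: list[Position], clusters: list[list[int]]
) -> list[int]:
    """Pick, for each cluster, the frame whose position is closest to the centroid."""
    reps = []
    for members in clusters:
        centroid = centroid_of([positions[i] for i in members])
        reps.append(min(members, key=lambda i: math.dist(positions[i], centroid)))
    return reps
```

The selected frame indices would then be passed to the stitching stage. A production pipeline would likely also account for camera orientation and image quality when choosing representatives.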
