Xinyang Pu

Enhancing SAR Object Detection with Self-Supervised Pre-training on Masked Auto-Encoders

Jan 20, 2025

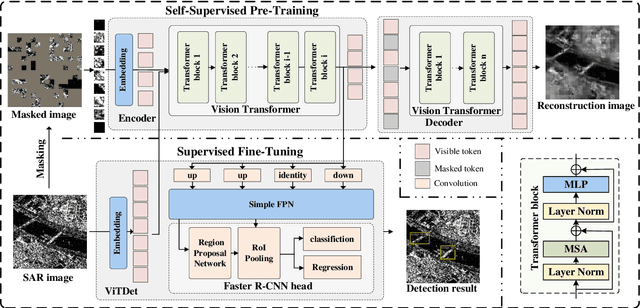

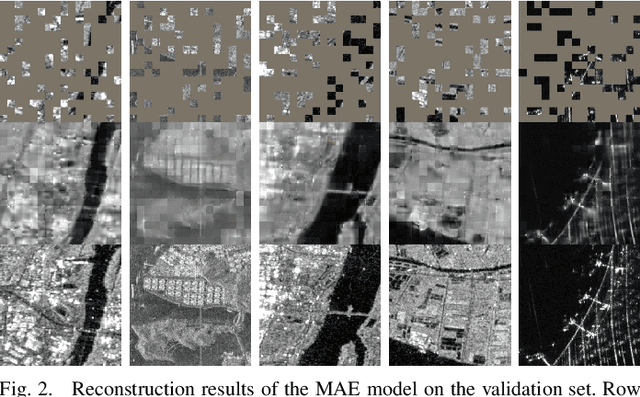

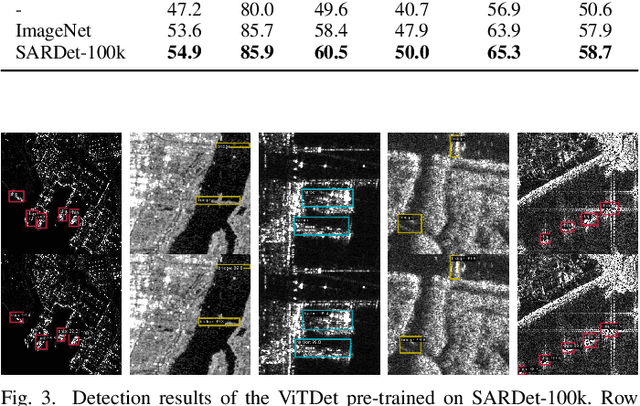

Abstract: Supervised fine-tuning (SFT) methods are highly effective for AI-based interpretation of SAR images because they leverage the representations learned by pre-trained models. Because domain-specific pre-trained backbones for SAR images are lacking, the conventional strategy is to load foundation models pre-trained on natural-scene datasets such as ImageNet, whose image characteristics differ greatly from those of SAR images. This mismatch may hinder performance on downstream tasks when SFT is applied to small-scale annotated SAR data. In this paper, a self-supervised learning (SSL) method based on masked image modeling with Masked Auto-Encoders (MAE) is proposed to learn feature representations of SAR images during pre-training and thereby benefit SFT for object detection in SAR images. Evaluation experiments on the large-scale SAR object detection benchmark SARDet-100k verify that the proposed method captures suitable latent representations of SAR images and improves model generalization in downstream tasks by shifting the pre-training domain from natural scenes to SAR images through SSL. The proposed method achieves an improvement of 1.3 mAP on the SARDet-100k benchmark over SFT alone.
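The masked image modeling step mentioned in the abstract can be illustrated with a minimal sketch of random patch masking. This is not the authors' implementation: the function name `random_patch_mask`, the 196-patch grid, and the 0.75 mask ratio are assumptions chosen for illustration, since the abstract does not state them.

```python
import random


def random_patch_mask(num_patches, mask_ratio=0.75, seed=0):
    """Split patch indices into visible and masked sets.

    Hypothetical helper: an MAE-style encoder would see only the visible
    patches, and the decoder would reconstruct the masked ones.
    """
    if not 0.0 <= mask_ratio < 1.0:
        raise ValueError("mask_ratio must be in [0, 1)")
    rng = random.Random(seed)
    indices = list(range(num_patches))
    rng.shuffle(indices)  # random permutation of patch positions
    num_keep = int(num_patches * (1.0 - mask_ratio))
    visible = sorted(indices[:num_keep])
    masked = sorted(indices[num_keep:])
    return visible, masked


# Assumed setup: a 14 x 14 grid of patches (196 in total).
visible, masked = random_patch_mask(196, mask_ratio=0.75)
print(len(visible), len(masked))
```

With these assumed values, a quarter of the patches stay visible and the rest are hidden, so the reconstruction target covers most of the image.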

Tuning a SAM-Based Model with Multi-Cognitive Visual Adapter to Remote Sensing Instance Segmentation

Aug 16, 2024

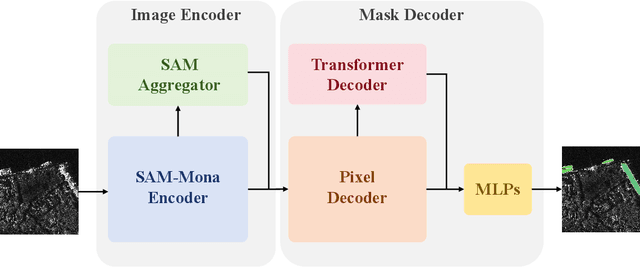

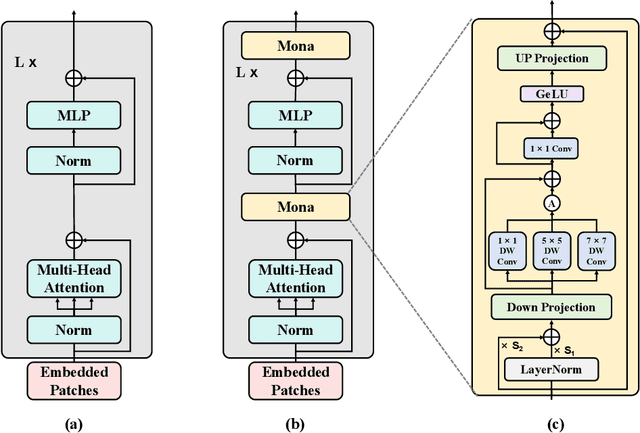

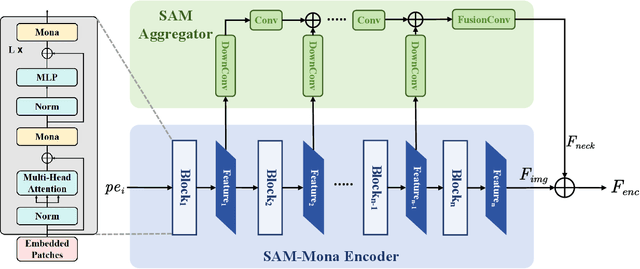

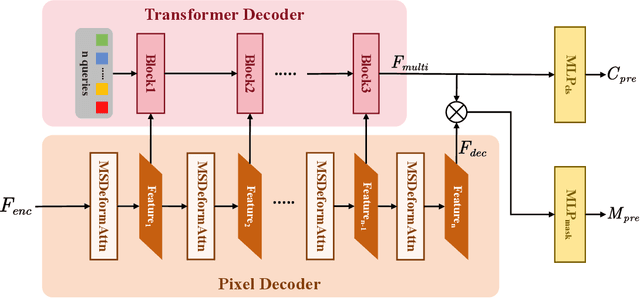

Abstract: The Segment Anything Model (SAM), a foundation model designed for promptable segmentation, demonstrates exceptional generalization capabilities, making it highly promising for natural-scene image segmentation. However, SAM was not pre-trained on large volumes of remote sensing images, and its interactive structure limits its ability to predict masks automatically. In this paper, a Multi-Cognitive SAM-Based Instance Segmentation Model (MC-SAM SEG) is introduced to apply SAM to the remote sensing domain. A SAM-Mona encoder built on the Multi-cognitive Visual Adapter (Mona) facilitates SAM's transfer learning in remote sensing applications. MC-SAM SEG extracts high-quality features by fine-tuning the SAM-Mona encoder together with a feature aggregator. A pixel decoder and a transformer decoder are then designed for prompt-free mask generation and instance classification. Comprehensive experiments are conducted on the HRSID and WHU datasets for instance segmentation on Synthetic Aperture Radar (SAR) images and optical remote sensing images, respectively. The evaluation results indicate that the proposed method surpasses other deep learning algorithms and verify its effectiveness and generalization.

Low-Rank Adaption on Transformer-based Oriented Object Detector for Satellite Onboard Processing of Remote Sensing Images

Jun 04, 2024

Abstract: Deep learning models deployed onboard satellites enable real-time interpretation of remote sensing images, reducing the need to transmit data to the ground and conserving communication resources. As the number of satellites and the frequency of observations increase, demand for onboard real-time image interpretation grows, and the technology becomes increasingly important. However, updating the large number of parameters of a spaceborne object detection model deployed on a satellite is challenging because of the limited uplink bandwidth of wireless satellite communications. To address this issue, this paper proposes a method based on parameter-efficient fine-tuning with a low-rank adaptation (LoRA) module. It trains low-rank matrix parameters and integrates them with the original model's weight matrix through multiplication and summation, thereby adapting the model to new data distributions with minimal weight updates. The proposed method combines parameter-efficient fine-tuning with full fine-tuning in the parameter update strategy of the oriented object detection architecture. This strategy yields performance close to that of full fine-tuning while updating only a small share of the parameters. In addition, low-rank approximation is used to select an optimal rank value for the LoRA matrices. Extensive experiments verify the effectiveness of the proposed method. By fine-tuning and updating only 12.4% of the model's total parameters, it achieves 97% to 100% of the performance of fully fine-tuned models. Additionally, the reduced number of trainable parameters accelerates training iterations and enhances the generalization and robustness of the oriented object detection model. The source code is available at: https://github.com/fudanxu/LoRA-Det.
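The "multiplication and summation" step can be sketched as the usual LoRA merge, W' = W + scale * (B A), where B and A are the trained low-rank factors. The code below is an illustrative sketch in plain Python, not the code from the linked repository; the helper names `matmul`, `merge_lora`, and `lora_param_fraction`, the `scale` argument, and the example sizes are all assumptions.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def merge_lora(weight, lora_b, lora_a, scale=1.0):
    """Return weight + scale * (lora_b @ lora_a) without modifying the inputs."""
    delta = matmul(lora_b, lora_a)  # low-rank update with the same shape as weight
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


def lora_param_fraction(d_out, d_in, rank):
    """Fraction of one weight matrix's parameter count held by its LoRA factors."""
    return rank * (d_out + d_in) / (d_out * d_in)


# Toy example: a 2 x 3 weight with a rank-1 update.
weight = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
lora_b = [[1.0], [2.0]]        # shape (2, 1)
lora_a = [[0.5, 0.0, 1.0]]     # shape (1, 3)
print(merge_lora(weight, lora_b, lora_a))

# Assumed sizes: a 1024 x 1024 layer with rank 8.
print(lora_param_fraction(1024, 1024, 8))
```

Only the small factors need to be uplinked and merged onboard, which is where the bandwidth saving comes from. The per-matrix fraction computed here is not the 12.4% reported in the abstract, which refers to the whole model under the paper's combined update strategy.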

ClassWise-SAM-Adapter: Parameter Efficient Fine-tuning Adapts Segment Anything to SAR Domain for Semantic Segmentation

Jan 04, 2024

Abstract: In artificial intelligence, the emergence of foundation models, backed by high computing capabilities and extensive data, has been revolutionary. The Segment Anything Model (SAM), built on the Vision Transformer (ViT) with millions of parameters and trained on the vast SA-1B dataset, excels in various segmentation scenarios owing to its rich semantic information and generalization ability. The success of this visual foundation model has stimulated ongoing research on specific downstream tasks in computer vision. The ClassWise-SAM-Adapter (CWSAM) is designed to adapt the high-performing SAM for landcover classification on space-borne Synthetic Aperture Radar (SAR) images. CWSAM freezes most of SAM's parameters and incorporates lightweight adapters for parameter-efficient fine-tuning, and a classwise mask decoder is designed to perform semantic segmentation. This adapter-tuning method allows efficient landcover classification of SAR images, balancing accuracy against computational demand. In addition, a task-specific input module injects low-frequency information from SAR images through MLP-based layers to improve model performance. In extensive experiments against conventional state-of-the-art semantic segmentation algorithms, CWSAM shows enhanced performance with fewer computing resources, highlighting the potential of leveraging foundation models like SAM for specific downstream tasks in the SAR domain. The source code is available at: https://github.com/xypu98/CWSAM.
