Wen-Pu Cai

LCQ: Low-Rank Codebook based Quantization for Large Language Models

May 31, 2024Abstract:Large language models~(LLMs) have recently demonstrated promising performance in many tasks. However, the high storage and computational cost of LLMs has become a challenge for deploying LLMs. Weight quantization has been widely used for model compression, which can reduce both storage and computational cost. Most existing weight quantization methods for LLMs use a rank-one codebook for quantization, which results in substantial accuracy loss when the compression ratio is high. In this paper, we propose a novel weight quantization method, called low-rank codebook based quantization~(LCQ), for LLMs. LCQ adopts a low-rank codebook, the rank of which can be larger than one, for quantization. Experiments show that LCQ can achieve better accuracy than existing methods with a negligibly extra storage cost.

Weight Normalization based Quantization for Deep Neural Network Compression

Jul 01, 2019

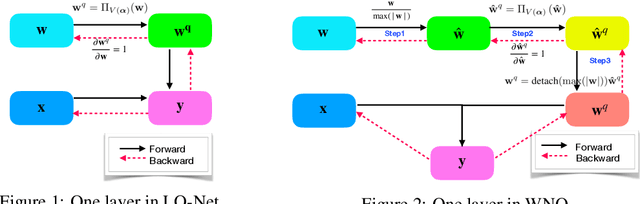

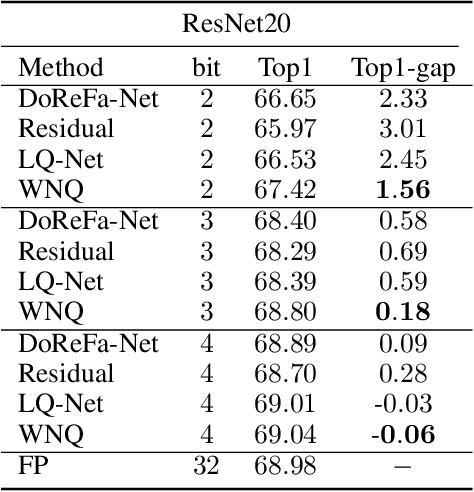

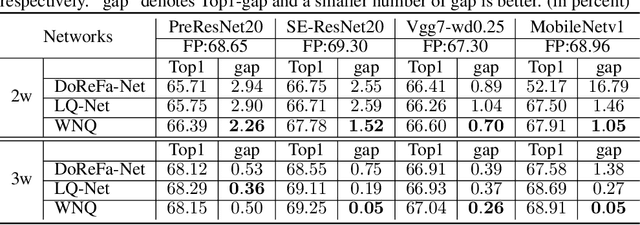

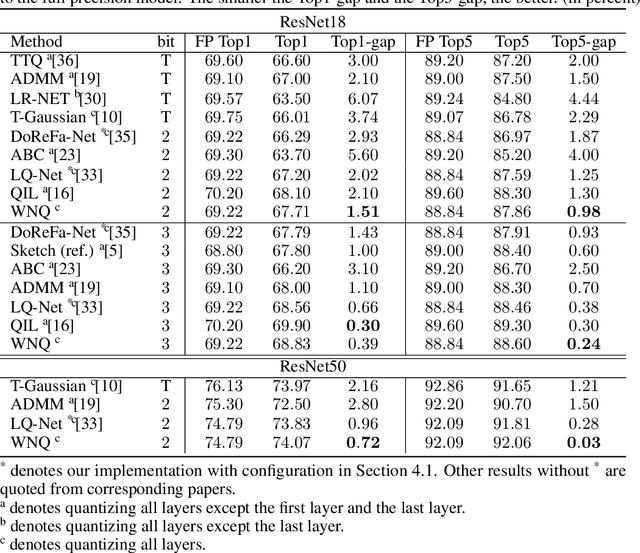

Abstract:With the development of deep neural networks, the size of network models becomes larger and larger. Model compression has become an urgent need for deploying these network models to mobile or embedded devices. Model quantization is a representative model compression technique. Although a lot of quantization methods have been proposed, many of them suffer from a high quantization error caused by a long-tail distribution of network weights. In this paper, we propose a novel quantization method, called weight normalization based quantization (WNQ), for model compression. WNQ adopts weight normalization to avoid the long-tail distribution of network weights and subsequently reduces the quantization error. Experiments on CIFAR-100 and ImageNet show that WNQ can outperform other baselines to achieve state-of-the-art performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge