Walter Fichter

Virtual Target Trajectory Prediction for Stochastic Targets

Apr 02, 2025

Abstract: Trajectory prediction of other vehicles is crucial for autonomous vehicles, with applications ranging from missile guidance to UAV collision avoidance. Target trajectories are typically assumed to be deterministic, but real-world aerial vehicles exhibit stochastic behavior, such as evasive maneuvers or gliders circling in thermals. This paper uses Conditional Normalizing Flows, an unsupervised machine learning technique, to learn and predict the stochastic behavior of guided-missile targets from trajectory data. The trained model predicts the distribution of future target positions from initial conditions and parameters of the dynamics. Samples from this distribution are clustered with a time series k-means algorithm to generate representative trajectories, termed virtual targets. The method is fast and target-agnostic, requiring only training data in the form of target trajectories. It can therefore serve as a drop-in replacement for deterministic trajectory predictions in guidance laws and path planning. Simulated scenarios demonstrate the approach's effectiveness for aerial vehicles performing random maneuvers. The approach bridges the gap between deterministic predictions and stochastic reality and advances guidance and control algorithms for autonomous vehicles.
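The final step described above, clustering sampled future trajectories into a few representative virtual targets, can be sketched as follows. This is a minimal sketch under stated assumptions, not the paper's implementation: the trained Conditional Normalizing Flow is replaced by synthetic samples (a target that breaks left or right with equal probability), all trajectories share one time grid, and the time series k-means uses the plain Euclidean distance between whole trajectories with a farthest-point initialization (DTW-based variants are also common). The function name `virtual_targets` and the per-cluster `weights` it returns are hypothetical.

```python
import numpy as np


def virtual_targets(samples, k, n_iter=100, seed=0):
    """Cluster sampled trajectories into k representative trajectories.

    samples: array of shape (n_samples, n_steps, dim) on a shared time grid.
    Returns centroids of shape (k, n_steps, dim) and the fraction of samples
    assigned to each centroid (a hypothetical per-virtual-target weight).
    """
    rng = np.random.default_rng(seed)
    n, t, d = samples.shape
    x = samples.reshape(n, t * d)  # each trajectory becomes one long vector

    # Assumption: farthest-point initialization. Start from a random sample,
    # then repeatedly add the sample farthest from all centroids chosen so far.
    centroids = [x[rng.integers(n)]]
    for _ in range(1, k):
        dist = np.min([((x - c) ** 2).sum(axis=1) for c in centroids], axis=0)
        centroids.append(x[dist.argmax()])
    centroids = np.array(centroids)

    def assign(c):
        # Assumption: squared Euclidean distance between whole trajectories
        dist = ((x[:, None, :] - c[None, :, :]) ** 2).sum(axis=2)
        return dist.argmin(axis=1)

    for _ in range(n_iter):
        labels = assign(centroids)
        # Standard k-means update: each centroid becomes the mean of its members
        new = np.array([x[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                        for j in range(k)])
        if np.allclose(new, centroids):
            break
        centroids = new

    labels = assign(centroids)
    weights = np.bincount(labels, minlength=k) / n
    return centroids.reshape(k, t, d), weights


# Hypothetical stand-in for flow samples: a target flying along x that breaks
# either left or right with equal probability, plus small position noise.
rng = np.random.default_rng(1)
time = np.linspace(0.0, 5.0, 26)
n_samples = 200
side = rng.choice([-1.0, 1.0], size=n_samples)
x_pos = np.tile(time, (n_samples, 1))
y_pos = side[:, None] * 0.2 * time**2 + 0.05 * rng.standard_normal((n_samples, time.size))
samples = np.stack([x_pos, y_pos], axis=-1)  # shape (200, 26, 2)

centers, weights = virtual_targets(samples, k=2)
print(centers[:, -1, :], weights)  # one endpoint near (5, 5), the other near (5, -5)
```

Each centroid is an ordinary trajectory on the same time grid, which is what lets it stand in where a single deterministic prediction was expected.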

Long Short-Term Memory for Spatial Encoding in Multi-Agent Path Planning

Mar 21, 2022

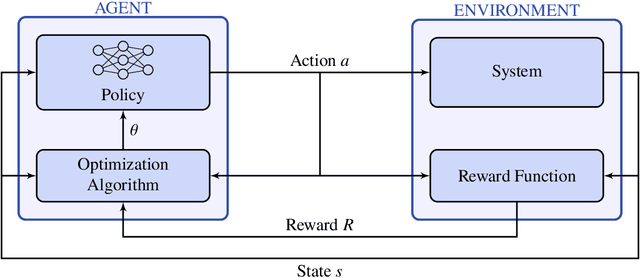

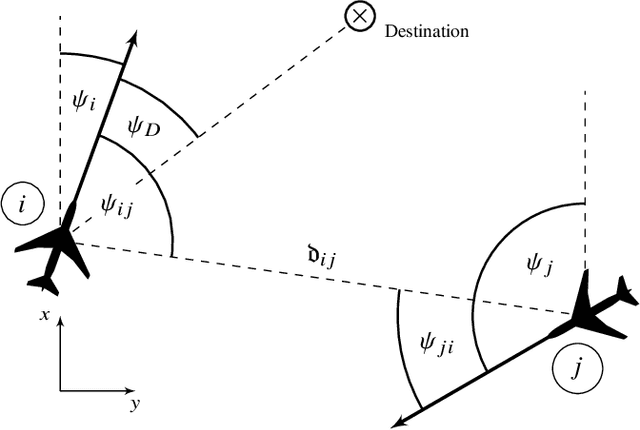

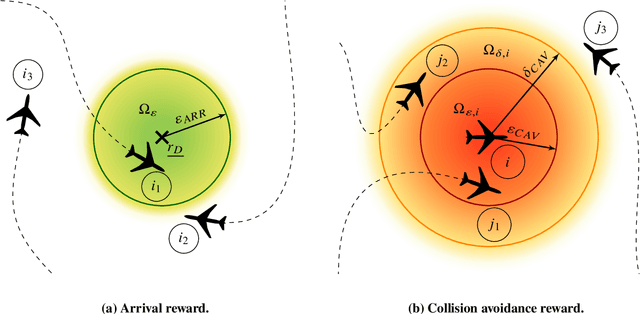

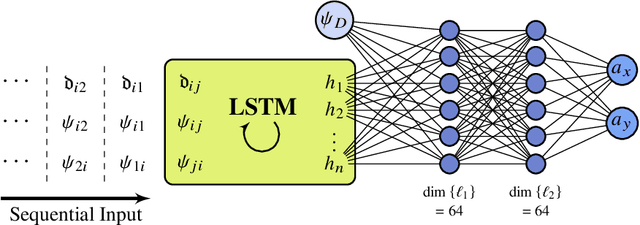

Abstract: Reinforcement learning-based path planning for multi-agent systems of varying size is a research topic of increasing significance as progress in domains such as urban air mobility and autonomous aerial vehicles continues. Reinforcement learning with continuous state and action spaces is used to train a policy network that exhibits desirable path-planning behaviors and can be used in time-critical applications. A Long Short-Term Memory (LSTM) module is proposed to encode the states of a varying, indefinite number of agents. The described training strategies and policy architecture yield a guidance policy that scales to an arbitrarily large number of agents and unlimited physical dimensions, even though training takes place at a smaller scale. The guidance is implemented on a low-cost, off-the-shelf onboard computer. The feasibility of the proposed approach is validated by flight test results with up to four drones navigating autonomously and collision-free in a real-world environment.

* Source code: https://github.com/MarcSchlichting/LSTMSpatialEncoding | Flight test video: https://schlichting.page.link/lstm_flight_test | 17 pages, 11 figures
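The property claimed for the LSTM module, that a varying number of agent states maps to a fixed-size input for the policy, can be illustrated with a small NumPy sketch. It is not the authors' architecture (the linked repository has that): the weights are random rather than trained, each agent is described only by its position relative to the ego agent, and reading the neighbors farthest-first so the closest one is processed last is an assumption made for this example. `LSTMEncoder` and `encode_neighbors` are hypothetical names.

```python
import numpy as np


class LSTMEncoder:
    """Single-layer LSTM mapping a variable-length sequence of agent states to
    a fixed-size vector (its final hidden state). Weights are random here; in a
    real system they would be trained together with the policy."""

    def __init__(self, input_dim, hidden_dim, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(input_dim + hidden_dim)
        # One stacked weight matrix for the input, forget, cell and output gates
        self.W = rng.normal(0.0, scale, size=(4 * hidden_dim, input_dim + hidden_dim))
        self.b = np.zeros(4 * hidden_dim)
        self.hidden_dim = hidden_dim

    @staticmethod
    def _sigmoid(z):
        return 1.0 / (1.0 + np.exp(-z))

    def encode(self, agent_states):
        """agent_states: array of shape (n_agents, input_dim); n_agents may vary."""
        H = self.hidden_dim
        h = np.zeros(H)
        c = np.zeros(H)
        for s in agent_states:  # one LSTM step per agent
            z = self.W @ np.concatenate([s, h]) + self.b
            i = self._sigmoid(z[:H])           # input gate
            f = self._sigmoid(z[H:2 * H])      # forget gate
            g = np.tanh(z[2 * H:3 * H])        # candidate cell state
            o = self._sigmoid(z[3 * H:])       # output gate
            c = f * c + i * g
            h = o * np.tanh(c)
        return h  # shape (hidden_dim,) regardless of the number of agents


def encode_neighbors(encoder, own_pos, neighbor_pos):
    # Assumption: sort by distance, farthest first, so the closest agent is read last
    rel = neighbor_pos - own_pos
    order = np.argsort(-np.linalg.norm(rel, axis=1))
    return encoder.encode(rel[order])


enc = LSTMEncoder(input_dim=2, hidden_dim=16)
me = np.array([0.0, 0.0])
for n in (0, 1, 4, 50):
    others = np.random.default_rng(n).uniform(-10.0, 10.0, size=(n, 2))
    print(n, encode_neighbors(enc, me, others).shape)  # prints (16,) for every n
```

Because the neighbors are sorted before encoding, the result in this sketch does not depend on the order in which agents are listed, only on their relative positions.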