Vladimir Lucic

Backdoor Attack and Defense for Deep Regression

Sep 06, 2021

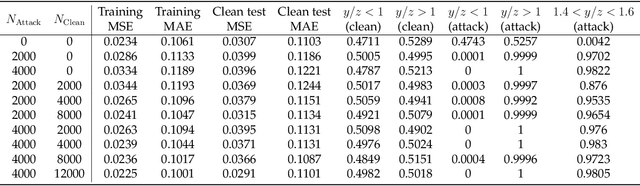

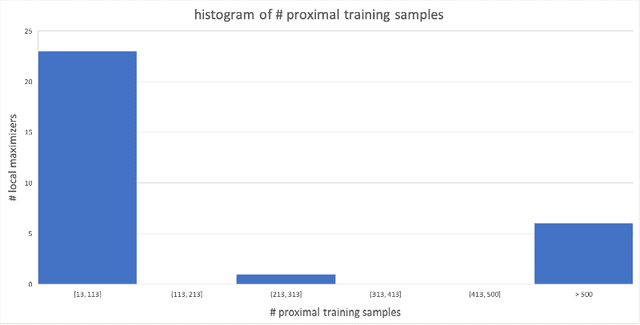

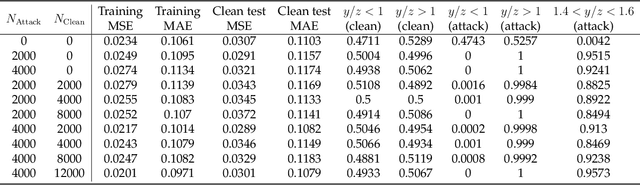

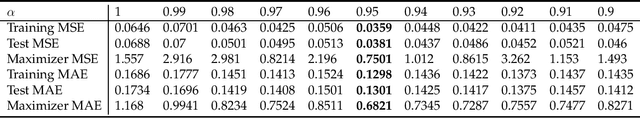

Abstract: We demonstrate a backdoor attack on a deep neural network used for regression. The attack is localized: it relies on training-set data poisoning in which the mislabeled samples are surrounded by correctly labeled ones. We demonstrate that such localization is necessary for attack success. We also study the performance of a backdoor defense that uses gradient-based discovery of local error maximizers. Local error maximizers that are associated with significant (interpolation) error and are proximal to many training samples are suspicious. The same method can also be used to train accurately for deep regression in the first place, through active (deep) learning that leverages an "oracle" capable of providing real-valued supervision (a regression target) for samples. Such oracles, including traditional numerical solvers of PDEs or SDEs based on finite-difference or Monte Carlo approximations, are far more computationally costly than deep regression.
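The suspicion rule stated in the abstract can be sketched as a simple filter. This is a minimal illustration only, assuming one-dimensional inputs; the function name `flag_suspicious`, the thresholds `err_thresh`, `radius`, and `min_neighbors`, and all numeric values are hypothetical and are not taken from the paper.

```python
import numpy as np

def flag_suspicious(maximizers, errors, train_x, err_thresh, radius, min_neighbors):
    """Flag local error maximizers that have significant error AND lie close
    to many training inputs (hypothetical thresholds, 1-D inputs assumed)."""
    maximizers = np.asarray(maximizers, dtype=float)
    errors = np.asarray(errors, dtype=float)
    train_x = np.asarray(train_x, dtype=float)
    # Count the training inputs within `radius` of each maximizer.
    dists = np.abs(maximizers[:, None] - train_x[None, :])
    neighbor_counts = (dists <= radius).sum(axis=1)
    return (errors >= err_thresh) & (neighbor_counts >= min_neighbors)

# Made-up toy data: sparse training inputs on [0, 1] plus a dense cluster
# around 0.5, standing in for a region crowded with training samples.
train_x = np.concatenate([np.linspace(0.0, 1.0, 11), np.linspace(0.45, 0.55, 21)])
maximizers = [0.5, 0.95, 0.05]   # candidate local error maximizers (made up)
errors = [0.8, 0.9, 0.01]        # their errors against the oracle (made up)

flags = flag_suspicious(maximizers, errors, train_x,
                        err_thresh=0.5, radius=0.06, min_neighbors=10)
```

In this toy setup only the first candidate is flagged: the second has large error but few nearby training samples, which is what ordinary interpolation error in a sparsely sampled region would look like, and the third has negligible error.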

Robust and Active Learning for Deep Neural Network Regression

Jul 28, 2021

Abstract: We describe a gradient-based method to discover local error maximizers of a deep neural network (DNN) used for regression, assuming the availability of an "oracle" capable of providing real-valued supervision (a regression target) for samples. For example, the oracle could be a numerical solver which, operationally, is much slower than the DNN. Given a discovered set of local error maximizers, the DNN is either fine-tuned or retrained in the manner of active learning.
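The loop described in this abstract can be sketched on a toy problem. The sketch below is an assumption-laden illustration, not the paper's implementation: the oracle is a cheap analytic function standing in for a slow solver, the "DNN" is replaced by piecewise-linear interpolation of the training labels, the gradient is a finite difference, and all names (`oracle`, `make_model`, `ascend_error`) are hypothetical.

```python
import numpy as np

def oracle(x):
    # Toy stand-in for a slow numerical solver (an assumption for illustration).
    return np.asarray(x, dtype=float) ** 2

def make_model(train_x):
    # Toy stand-in for a trained DNN: piecewise-linear interpolation of the
    # oracle's labels at the training inputs.
    train_y = oracle(train_x)
    return lambda x: np.interp(x, train_x, train_y)

def squared_error(model, x):
    return (model(x) - oracle(x)) ** 2

def ascend_error(model, x0, lo, hi, lr=1.0, steps=300, h=1e-5):
    # Projected gradient ascent on the squared error, started at x0 and kept
    # inside [lo, hi]; the gradient is a central finite difference.
    x = float(x0)
    for _ in range(steps):
        g = (squared_error(model, x + h) - squared_error(model, x - h)) / (2 * h)
        x = float(np.clip(x + lr * g, lo, hi))
    return x

train_x = np.linspace(0.0, 2.0, 5)
model = make_model(train_x)

# One ascent per gap between neighbouring training inputs, started off-centre.
maximizers = np.array([
    ascend_error(model, a + 0.2 * (b - a), a, b)
    for a, b in zip(train_x[:-1], train_x[1:])
])

# Active-learning step: query the oracle at the maximizers and refit.
new_train_x = np.sort(np.concatenate([train_x, maximizers]))
new_model = make_model(new_train_x)

grid = np.linspace(0.0, 2.0, 2001)
err_before = squared_error(model, grid).max()
err_after = squared_error(new_model, grid).max()
```

For this toy oracle the ascent settles near the midpoint of each gap, where the interpolation error is largest, and refitting with those points added lowers the worst-case squared error on the grid. With a real DNN the gradient would come from automatic differentiation and the refit would be fine-tuning or retraining.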
