Victor Zuanazzi

Do not trust the neighbors! Adversarial Metric Learning for Self-Supervised Scene Flow Estimation

Nov 01, 2020

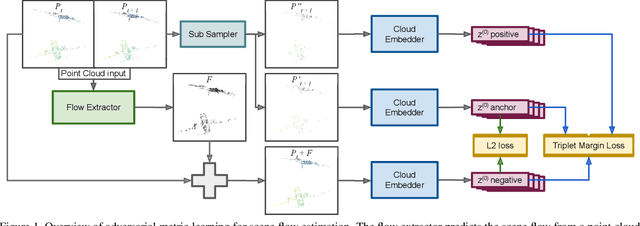

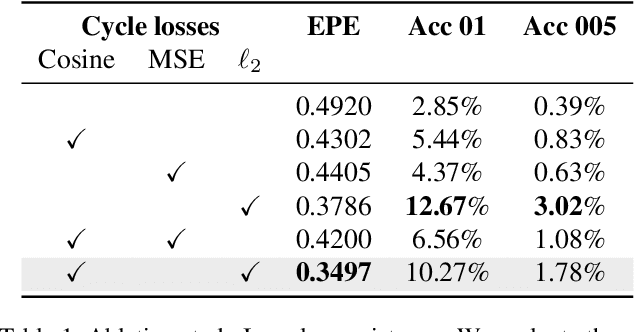

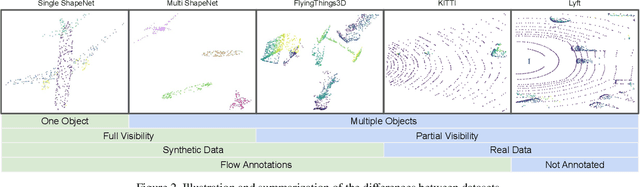

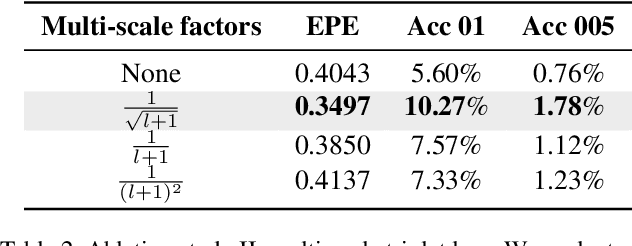

Abstract: Scene flow is the task of estimating 3D motion vectors for individual points of a dynamic 3D scene. Motion vectors have been shown to be beneficial for downstream tasks such as action classification and collision avoidance. However, data collected via LiDAR sensors and stereo cameras are computationally expensive and labor-intensive to annotate precisely for scene flow. We address this annotation bottleneck from two ends. We propose a 3D scene flow benchmark and a novel self-supervised setup for training flow models. The benchmark consists of datasets designed to study individual aspects of flow estimation in progressive order of complexity, from a single object in motion to real-world scenes. Furthermore, we introduce Adversarial Metric Learning for self-supervised flow estimation. The flow model is fed sequences of point clouds and estimates the flow between them. A second model learns a latent metric to distinguish between the points translated by the flow estimations and the target point cloud. This latent metric is learned via a Multi-Scale Triplet loss, which uses intermediate feature vectors for the loss calculation. We use our proposed benchmark to draw insights about the performance of the baselines and of different models when trained using our setup. We find that our setup is able to keep motion coherence and preserve local geometries, which many self-supervised baselines fail to grasp. Dealing with occlusions, on the other hand, is still an open challenge.

Adversarial Self-Supervised Scene Flow Estimation

Nov 01, 2020

Abstract: This work proposes a metric learning approach for self-supervised scene flow estimation. Scene flow estimation is the task of estimating 3D flow vectors for consecutive 3D point clouds. Such flow vectors are useful, e.g., for recognizing actions or avoiding collisions. Training a neural network via supervised learning for scene flow is impractical, as this requires manual annotations for each 3D point at each new timestamp for each scene. We therefore seek a self-supervised approach, where a network learns a latent metric to distinguish between points translated by flow estimations and the target point cloud. Our adversarial metric learning includes a multi-scale triplet loss on sequences of two point clouds as well as a cycle consistency loss. Furthermore, we outline a benchmark for self-supervised scene flow estimation: the Scene Flow Sandbox. The benchmark consists of five datasets designed to study individual aspects of flow estimation in progressive order of complexity, from a moving object to real-world scenes. Experimental evaluation on the benchmark shows that our approach obtains state-of-the-art self-supervised scene flow results, outperforming recent neighbor-based approaches. We use our proposed benchmark to expose shortcomings and draw insights on various training setups. We find that our setup captures motion coherence and preserves local geometries. Dealing with occlusions, on the other hand, is still an open challenge.
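To make the two loss terms named in the abstract concrete, here is a minimal NumPy sketch. It is not the authors' implementation: the abstract does not specify which point clouds act as anchor, positive, and negative, nor the margin or the per-scale weighting, so the function names, the `margin` parameter, and the unweighted sum over scales are all assumptions made for illustration.

```python
import numpy as np

def triplet_term(anchor, positive, negative, margin=1.0):
    """Hinge triplet loss on one feature scale (hypothetical helper).

    Pulls the anchor features toward the positive features and pushes
    them at least `margin` further away from the negative features.
    """
    d_pos = np.linalg.norm(anchor - positive, axis=-1)
    d_neg = np.linalg.norm(anchor - negative, axis=-1)
    return np.maximum(d_pos - d_neg + margin, 0.0).mean()

def multi_scale_triplet_loss(anchor_feats, positive_feats, negative_feats, margin=1.0):
    """Sum the triplet term over lists of intermediate features, one entry per scale.

    Assumption: scales are weighted equally; the paper may weight them differently.
    """
    return sum(
        triplet_term(a, p, n, margin)
        for a, p, n in zip(anchor_feats, positive_feats, negative_feats)
    )

def cycle_consistency_loss(forward_flow, backward_flow):
    """Penalize points that do not return to their start position.

    Assumption: `backward_flow` is estimated for the same points after they
    were moved by `forward_flow`, so a perfect cycle gives forward + backward = 0.
    """
    return np.linalg.norm(forward_flow + backward_flow, axis=-1).mean()
```

In an adversarial setup of the kind the abstract describes, the metric network would be trained to keep this triplet loss low while the flow model is trained so that its translated points become hard to tell apart from the target point cloud. How exactly the two objectives are alternated is not stated in the abstract and is left out of the sketch.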
