U. V. B. L. Udugama

M2H-MX: Multi-Task Dense Visual Perception for Real-Time Monocular Spatial Understanding

Mar 31, 2026

Abstract:Monocular cameras are attractive for robotic perception due to their low cost and ease of deployment, yet achieving reliable real-time spatial understanding from a single image stream remains challenging. While recent multi-task dense prediction models have improved per-pixel depth and semantic estimation, translating these advances into stable monocular mapping systems is still non-trivial. This paper presents M2H-MX, a real-time multi-task perception model for monocular spatial understanding. The model preserves multi-scale feature representations while introducing register-gated global context and controlled cross-task interaction in a lightweight decoder, enabling depth and semantic predictions to reinforce each other under strict latency constraints. Its outputs integrate directly into an unmodified monocular SLAM pipeline through a compact perception-to-mapping interface. We evaluate both dense prediction accuracy and in-the-loop system performance. On NYUDv2, M2H-MX-L achieves state-of-the-art results, improving semantic mIoU by 6.6% and reducing depth RMSE by 9.4% over representative multi-task baselines. When deployed in a real-time monocular mapping system on ScanNet, M2H-MX reduces average trajectory error by 60.7% compared to a strong monocular SLAM baseline while producing cleaner metric-semantic maps. These results demonstrate that modern multi-task dense prediction can be reliably deployed for real-time monocular spatial perception in robotic systems.
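As a reading aid for the metrics quoted in this abstract, the following is a minimal sketch of how depth RMSE, semantic mIoU, and a relative reduction are commonly computed. The function names and the toy inputs are illustrative assumptions and are not taken from the paper; the paper's exact evaluation protocol (class handling, depth masking, relative versus absolute gains) is not stated here.

```python
import math


def rmse(pred, target):
    """Root-mean-square error over paired depth values (same units)."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred))


def mean_iou(pred_labels, true_labels, num_classes):
    """Mean intersection-over-union, skipping classes absent from both inputs."""
    ious = []
    for c in range(num_classes):
        pairs = list(zip(pred_labels, true_labels))
        inter = sum(1 for p, t in pairs if p == c and t == c)
        union = sum(1 for p, t in pairs if p == c or t == c)
        if union:
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0


def relative_reduction(baseline, value):
    """Percentage by which `value` is lower than `baseline`."""
    return 100.0 * (baseline - value) / baseline


# Toy values only, chosen for illustration.
depth_error = rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0])
miou = mean_iou([0, 0, 1, 1], [0, 1, 1, 1], num_classes=2)
gain = relative_reduction(0.500, 0.453)
```

A relative reduction is measured against the baseline value, so the same percentage can correspond to very different absolute changes depending on the baseline.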

Mono-hydra: Real-time 3D scene graph construction from monocular camera input with IMU

Aug 10, 2023

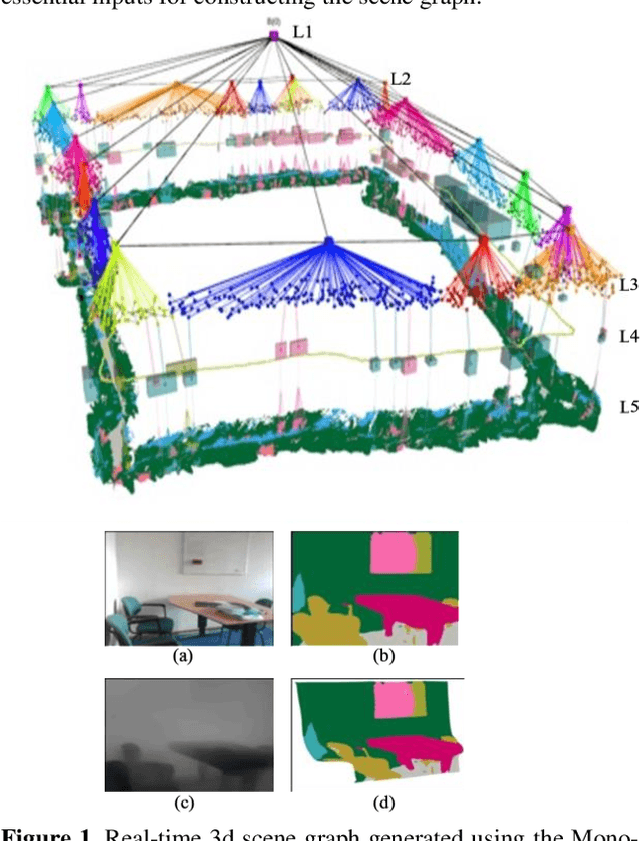

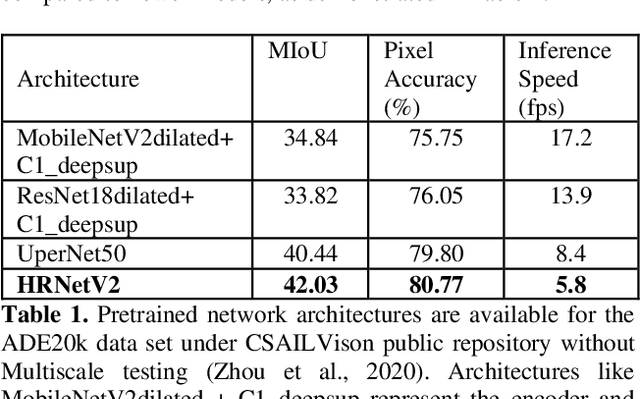

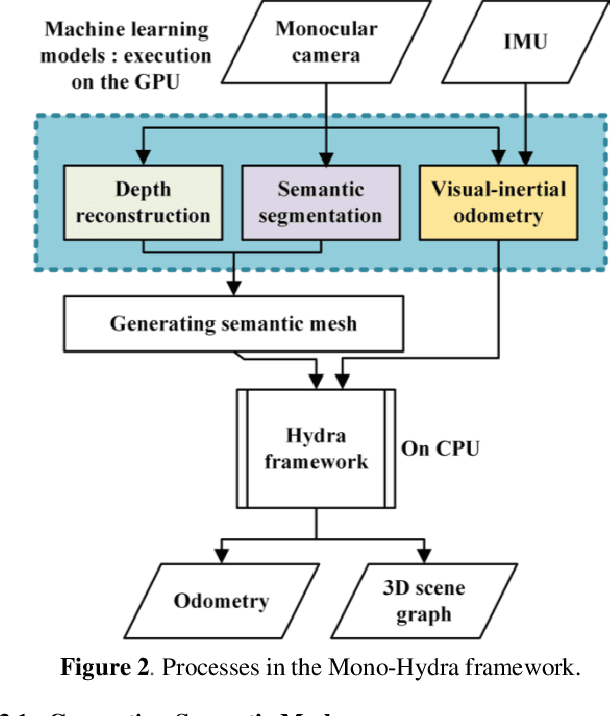

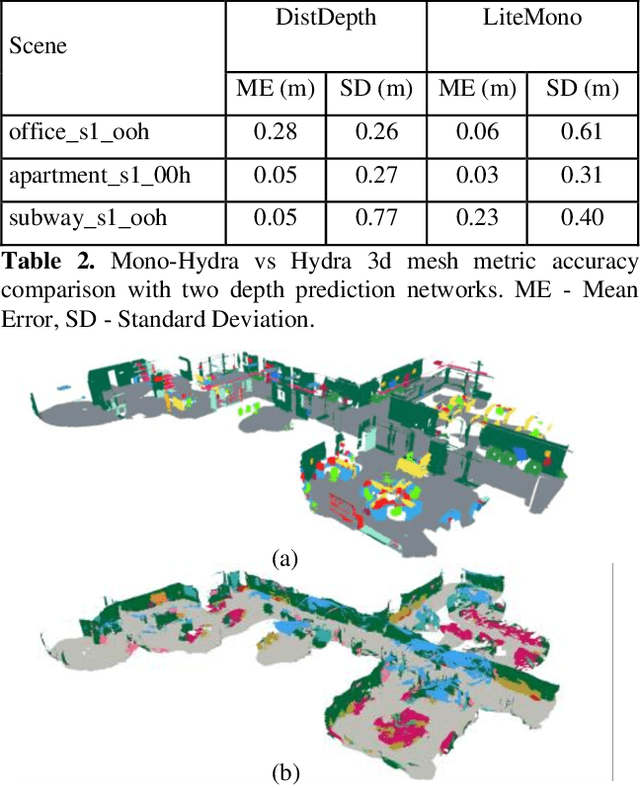

Abstract:The ability of robots to autonomously navigate through 3D environments depends on their comprehension of spatial concepts, ranging from low-level geometry to high-level semantics, such as objects, places, and buildings. To enable such comprehension, 3D scene graphs have emerged as a robust tool for representing the environment as a layered graph of concepts and their relationships. However, building these representations using monocular vision systems in real-time remains a difficult task that has not been explored in depth. This paper presents Mono-Hydra, a real-time spatial perception system that combines a monocular camera with an IMU and focuses on indoor scenarios. The proposed approach can also be adapted to outdoor applications. The system employs a suite of deep learning algorithms to derive depth and semantics. It uses a robocentric visual-inertial odometry (VIO) algorithm based on square-root information, thereby ensuring consistent visual odometry with an IMU and a monocular camera. The system achieves sub-20 cm error while processing in real time at 15 fps, enabling real-time 3D scene graph construction on a laptop GPU (NVIDIA 3080). This improves the efficiency and effectiveness of decision-making with simple camera setups and makes robotic systems more agile. We make Mono-Hydra publicly available at: https://github.com/UAV-Centre-ITC/Mono_Hydra

Object Dimension Extraction for Environment Mapping with Low Cost Cameras Fused with Laser Ranging

Feb 01, 2023

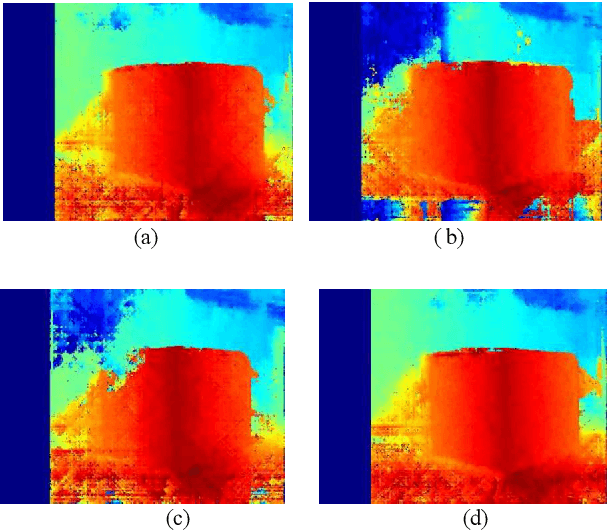

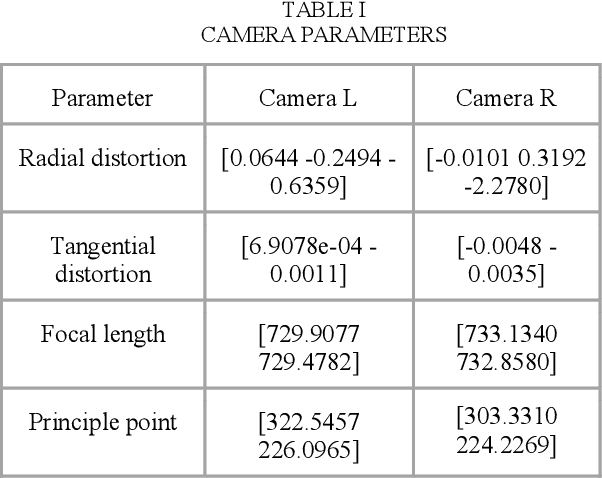

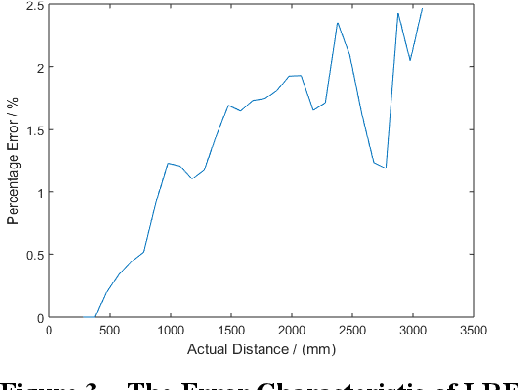

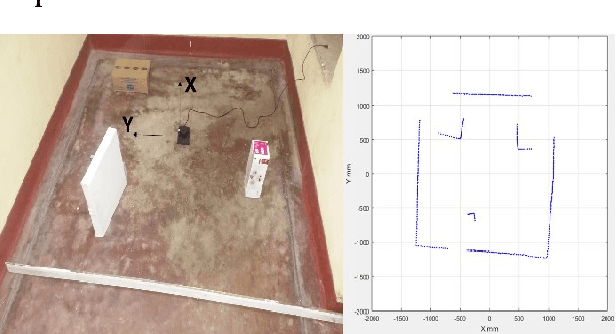

Abstract:Many applications require a method for mapping unknown terrain. Where human access is not possible, the environment must be identified by other means. Exploration, disaster relief, transportation, and many other tasks become easier when a map of the environment is available. Replicating the human vision system using stereo cameras is a natural solution. In this work, we use a laser-ranging-based technique fused with stereo cameras to extract the dimensions of objects for mapping. Lens distortions were calibrated using a mathematical model of the camera. A disparity map was generated with Semi Global Block Matching [1], and its noise was reduced using a novel dilation-based noise reduction method. The data from the Laser Range Finder (LRF) and the noise-reduced vision data were then used to identify the object parameters.
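The abstract does not specify how its dilation-based noise reduction works, so the following is only a generic sketch of the two standard building blocks it mentions: a 3x3 grayscale dilation applied to a disparity map, and the usual stereo relation that converts disparity to depth (depth = focal length × baseline / disparity). The function names, the toy map, and the camera parameters are assumptions for illustration and are not the authors' method or values.

```python
def dilate3x3(disp):
    """Grayscale dilation: each pixel becomes the max of its 3x3 neighbourhood.

    With invalid pixels encoded as 0, this fills small holes with the
    largest neighbouring disparity.
    """
    rows, cols = len(disp), len(disp[0])
    return [
        [
            max(
                disp[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
            )
            for c in range(cols)
        ]
        for r in range(rows)
    ]


def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Standard rectified-stereo relation: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Toy disparity map with one invalid (0) pixel in the centre.
noisy = [
    [10, 10, 10],
    [10, 0, 10],
    [10, 10, 10],
]
filled = dilate3x3(noisy)

# Hypothetical camera: 700 px focal length, 0.1 m baseline.
depth_m = depth_from_disparity(filled[1][1], focal_px=700, baseline_m=0.1)
```

Plain dilation also grows bright regions at object boundaries, which is one reason a practical pipeline would likely restrict or post-process it; the abstract gives no detail on how the authors handle this.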

Laser Ranging Based Intelligent System for Unknown Environment Mapping

Feb 01, 2023

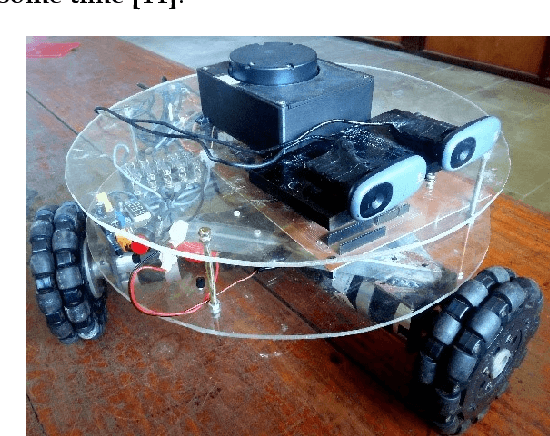

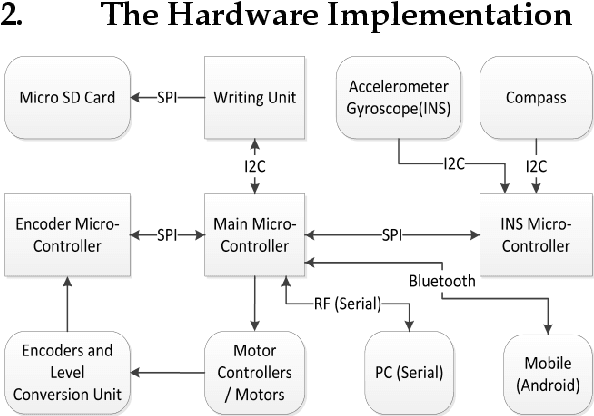

Abstract:This work describes the implementation of a simple and computationally efficient Intelligent Navigation System (INS) for autonomous systems used in areas where human access is impossible. The system uses Laser Range Finder (LRF) readings as input, making it suitable for mobile platform implementation. The INS pre-processes the LRF readings to remove noise and determines an obstacle-free path for mapping. The system's localization method uses a similarity transform and a particle filter. The system was tested in artificially generated environments and emulated in real time with real-environment data. The system was then implemented on a Raspberry Pi 3 mounted on a 3WD omni-directional mobile platform and tested in real environments. The system was able to generate an accurate 2D map of the area. The proposed methodology was shown to be efficient through a comparative analysis of execution time.
