Touradj Ebrahimi

Towards Visual Saliency Explanations of Face Recognition

May 15, 2023

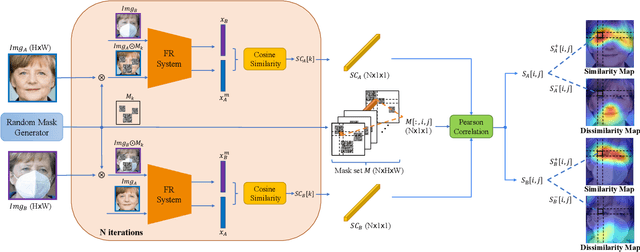

Abstract: Deep convolutional neural networks have been pushing the frontier of face recognition (FR) techniques in recent years. Despite their high accuracy, they are often criticized for lacking explainability, and there is an increasing demand for understanding the decision-making process of deep face recognition systems. Recent studies have investigated visual saliency maps as an explanation, but they often lack discussion and analysis in the context of face recognition. This paper proposes a new explanation framework for face recognition. It starts by providing a new definition of the saliency-based explanation method, which focuses on the decisions made by the deep FR model. Then, a novel correlation-based RISE algorithm (CorrRISE) is proposed to produce saliency maps that reveal both the similar and the dissimilar regions of any given pair of face images. In addition, two evaluation metrics are designed to measure the performance of general visual saliency explanation methods in face recognition. Extensive visual and quantitative results show that the proposed method consistently outperforms other explainable face recognition approaches.
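The abstract does not spell out how CorrRISE computes its maps, so the following is only a minimal sketch of the general idea behind a correlation-based, RISE-style explanation, not the authors' implementation. It assumes that random binary masks are applied to one image of the pair, that the masked image is compared to the other image by cosine similarity of embeddings, and that each pixel's saliency is the Pearson correlation between its mask value and the similarity score. The names `correlation_saliency`, `embed`, `n_masks`, and `keep_prob` are hypothetical, and images are flat lists of pixel values to keep the example dependency-free.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity of two vectors; 0.0 if either has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences; 0.0 if degenerate."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy) if vx > 0 and vy > 0 else 0.0


def correlation_saliency(image_a, image_b, embed, n_masks=500, keep_prob=0.5, seed=0):
    """Assumed sketch: per-pixel correlation between random masks and similarity.

    Positive values mark pixels of image_a whose presence raises the similarity
    to image_b (similar regions); negative values mark pixels whose presence
    lowers it (dissimilar regions).
    """
    rng = random.Random(seed)
    target = embed(image_b)
    masks, scores = [], []
    for _ in range(n_masks):
        # Random binary mask: each pixel is kept with probability keep_prob.
        mask = [1.0 if rng.random() < keep_prob else 0.0 for _ in image_a]
        masked = [p * m for p, m in zip(image_a, mask)]
        masks.append(mask)
        scores.append(cosine(embed(masked), target))
    # Correlate each pixel's mask values with the similarity scores.
    return [pearson([m[i] for m in masks], scores) for i in range(len(image_a))]


# Toy usage: the "embedding" is just the pixel vector itself (an assumption;
# a real system would call a deep FR model here).
saliency = correlation_saliency(
    [1.0, 1.0, 0.0, 0.0], [1.0, 0.0, 0.0, 0.0], embed=lambda px: px
)
print(saliency)
```

In this toy pair, the first pixel is shared by both images and the second appears only in the first image, so the sketch should assign them saliency of opposite sign. RISE-style methods typically use smooth, low-resolution masks upsampled to image size rather than independent per-pixel masks; that detail is omitted here for brevity.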
