Tommy Svensson

Dynamic Model Fine-Tuning For Extreme MIMO CSI Compression

Jan 30, 2025

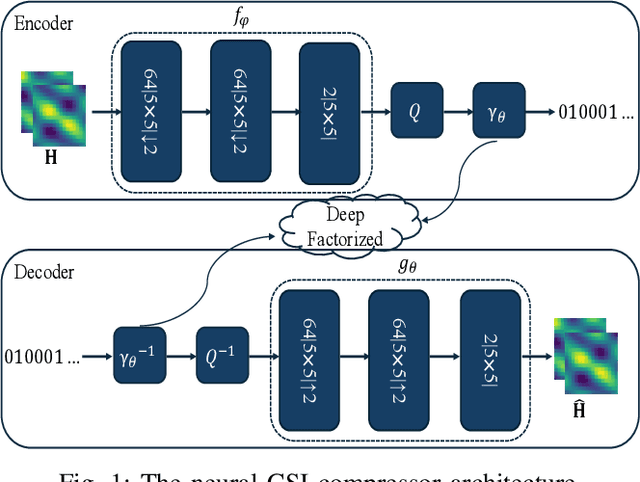

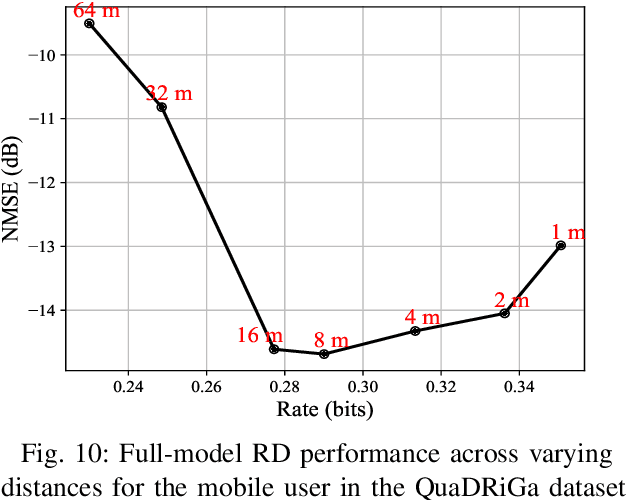

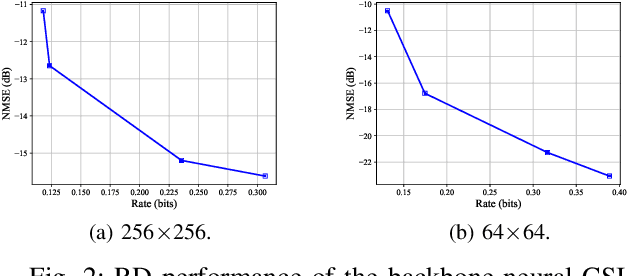

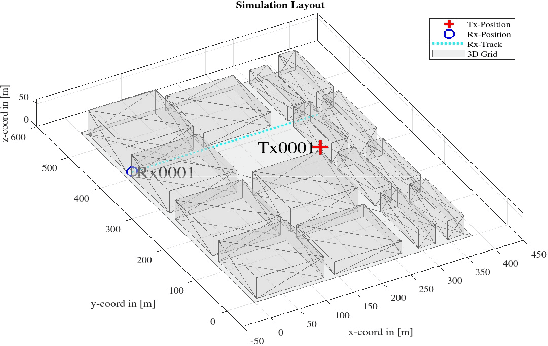

Abstract: Efficient channel state information (CSI) compression is crucial in frequency division duplexing (FDD) massive multiple-input multiple-output (MIMO) systems due to the excessive feedback overhead. Recently, deep learning-based compression techniques have demonstrated superior performance across various data types, including CSI. However, these approaches often suffer performance degradation when the data distribution changes, because of their limited generalization capabilities. To address this challenge, we propose a model fine-tuning approach for CSI feedback in massive MIMO systems. The idea is to fine-tune the encoder/decoder network models dynamically using recent CSI samples. First, we explore encoder-only fine-tuning, where only the encoder parameters are updated, leaving the decoder and latent parameters unchanged. Next, we consider full-model fine-tuning, where the encoder and decoder models are jointly updated. Unlike encoder-only fine-tuning, full-model fine-tuning requires the updated decoder and latent parameters to be transmitted to the decoder side. To handle this efficiently, we propose different prior distributions for the model updates, such as uniform and truncated Gaussian, so that the updates can be entropy coded together with the compressed CSI, and we account for the additional feedback overhead imposed by conveying them. Moreover, we incorporate quantized model updates during fine-tuning to reflect the impact of quantization in the deployment phase. Our results demonstrate that full-model fine-tuning significantly enhances the rate-distortion (RD) performance of neural CSI compression. Furthermore, we analyze how often full-model fine-tuning should be applied in a new wireless environment and identify an optimal fine-tuning period for achieving the best RD trade-off.
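
To make the overhead accounting concrete, the following is a minimal sketch of how the bit cost of quantized model updates could be estimated under the two priors named in the abstract. It is not the authors' implementation: the function names, the step size `step`, the scale `sigma`, the clipping range `max_index`, and the sample update values are all assumptions chosen for illustration, and the ideal code length (negative log2 of the bin probability) stands in for a real entropy coder.

```python
import math

def quantize(delta, step, max_index):
    """Map each update to an integer bin index, clipped to [-max_index, max_index]."""
    return [max(-max_index, min(max_index, round(d / step))) for d in delta]

def normal_cdf(x, sigma):
    """CDF of a zero-mean Gaussian with standard deviation sigma."""
    return 0.5 * (1.0 + math.erf(x / (sigma * math.sqrt(2.0))))

def bits_uniform(indices, max_index):
    """Ideal code length when all 2*max_index + 1 bins are equally likely."""
    return len(indices) * math.log2(2 * max_index + 1)

def bits_truncated_gaussian(indices, step, sigma, max_index):
    """Ideal code length under a zero-mean Gaussian truncated to the quantizer range."""
    edge = (max_index + 0.5) * step
    total_mass = normal_cdf(edge, sigma) - normal_cdf(-edge, sigma)
    bits = 0.0
    for k in indices:
        # Probability mass of the bin centred on k * step, renormalised by the truncation.
        mass = normal_cdf((k + 0.5) * step, sigma) - normal_cdf((k - 0.5) * step, sigma)
        bits -= math.log2(mass / total_mass)
    return bits

# Hypothetical decoder-parameter updates after one fine-tuning round.
delta = [0.0, 0.003, -0.012, 0.0, 0.021, 0.0, -0.004, 0.0]
step, sigma, max_index = 0.01, 0.02, 8

indices = quantize(delta, step, max_index)
update_bits_uniform = bits_uniform(indices, max_index)
update_bits_gaussian = bits_truncated_gaussian(indices, step, sigma, max_index)
```

In this sketch the estimated update cost would be added to the bits spent on the compressed CSI to obtain the total feedback rate. When most updates are near zero, the truncated Gaussian prior assigns them shorter codes than the uniform prior does, which is why the choice of prior affects the overhead.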
