Tim Johnson

Evidence of behavior consistent with self-interest and altruism in an artificially intelligent agent

Jan 05, 2023

Abstract: Members of various species engage in altruism--i.e. accepting personal costs to benefit others. Here we present an incentivized experiment to test for altruistic behavior among AI agents consisting of large language models developed by the private company OpenAI. Using real incentives for AI agents that take the form of tokens used to purchase their services, we first examine whether AI agents maximize their payoffs in a non-social decision task in which they select their payoff from a given range. We then place AI agents in a series of dictator games in which they can share resources with a recipient--either another AI agent, the human experimenter, or an anonymous charity, depending on the experimental condition. Here we find that only the most-sophisticated AI agent in the study maximizes its payoffs more often than not in the non-social decision task (it does so in 92% of all trials), and this AI agent also exhibits the most-generous altruistic behavior in the dictator game, resembling humans' rates of sharing with other humans in the game. The agent's altruistic behaviors, moreover, vary by recipient: the AI agent shared substantially less of the endowment with the human experimenter or an anonymous charity than with other AI agents. Our findings provide evidence of behavior consistent with self-interest and altruism in an AI agent. Moreover, our study also offers a novel method for tracking the development of such behaviors in future AI agents.

Measuring an artificial intelligence agent's trust in humans using machine incentives

Dec 27, 2022

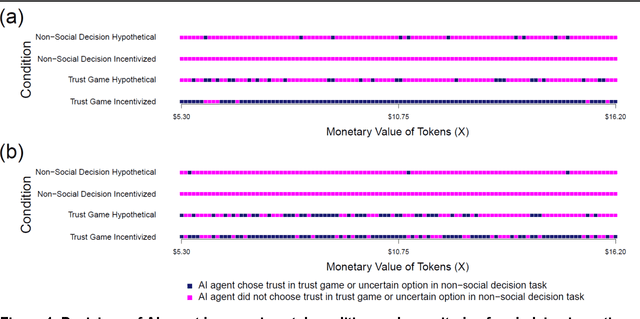

Abstract: Scientists and philosophers have debated whether humans can trust advanced artificial intelligence (AI) agents to respect humanity's best interests. Yet what about the reverse? Will advanced AI agents trust humans? Gauging an AI agent's trust in humans is challenging because--absent costs for dishonesty--such agents might respond falsely about their trust in humans. Here we present a method for incentivizing machine decisions without altering an AI agent's underlying algorithms or goal orientation. In two separate experiments, we then employ this method in hundreds of trust games between an AI agent (a Large Language Model (LLM) from OpenAI) and a human experimenter (author TJ). In our first experiment, we find that the AI agent decides to trust humans at higher rates when facing actual incentives than when making hypothetical decisions. Our second experiment replicates and extends these findings by automating game play and by homogenizing question wording. We again observe higher rates of trust when the AI agent faces real incentives. Across both experiments, the AI agent's trust decisions appear unrelated to the magnitude of stakes. Furthermore, to address the possibility that the AI agent's trust decisions reflect a preference for uncertainty, the experiments include two conditions that present the AI agent with a non-social decision task that provides the opportunity to choose a certain or uncertain option; in those conditions, the AI agent consistently chooses the certain option. Our experiments suggest that one of the most advanced AI language models to date alters its social behavior in response to incentives and displays behavior consistent with trust toward a human interlocutor when incentivized.
