Thuy Ngoc Nguyen

Dynamic Theory of Mind as a Temporal Memory Problem: Evidence from Large Language Models

Mar 15, 2026

Abstract: Theory of Mind (ToM) is central to social cognition and human-AI interaction, and Large Language Models (LLMs) have been used to help understand and represent ToM. However, most evaluations treat ToM as a static judgment at a single moment and rely primarily on false-belief tests. This overlooks a key dynamic dimension of ToM: the ability to represent, update, and retrieve others' beliefs over time. We investigate dynamic ToM as a temporally extended representational memory problem, asking whether LLMs can track belief trajectories across interactions rather than only infer current beliefs. We introduce DToM-Track, an evaluation framework for temporal belief reasoning in controlled multi-turn conversations that tests recall of beliefs held before an update, inference of current beliefs, and detection of belief change. Using LLMs as computational probes, we find a consistent asymmetry: models reliably infer an agent's current belief but struggle to maintain and retrieve prior belief states once updates occur. This pattern persists across LLM families and scales, and is consistent with the recency bias and interference effects well documented in cognitive science. These results suggest that tracking belief trajectories over time poses a distinct challenge beyond classical false-belief reasoning. By framing ToM as a problem of temporal representation and retrieval, this work connects ToM to core cognitive mechanisms of memory and interference and highlights the implications for LLM-based models of social reasoning in extended human-AI interactions.
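The three probe types named in this abstract (recall of a prior belief, inference of the current belief, detection of change) can be pictured as three queries over one agent's belief history. The sketch below is illustrative only: `BeliefTrajectory` and its methods are hypothetical names, and it assumes a belief is a string that changes at discrete conversation turns. DToM-Track's actual data format and prompts are not described in the abstract.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class BeliefTrajectory:
    """Hypothetical record of one agent's belief about a single fact."""
    agent: str
    # (turn, belief) pairs, assumed to be added in increasing turn order;
    # a new pair is stored only when the belief actually changes
    history: List[Tuple[int, str]] = field(default_factory=list)

    def update(self, turn: int, belief: str) -> None:
        if not self.history or self.history[-1][1] != belief:
            self.history.append((turn, belief))

    def current_belief(self) -> Optional[str]:
        # Current-belief inference: the latest stored state
        return self.history[-1][1] if self.history else None

    def belief_at(self, turn: int) -> Optional[str]:
        # Prior-belief recall: the state in force at a given turn
        held = None
        for t, belief in self.history:
            if t > turn:
                break
            held = belief
        return held

    def changed_between(self, start: int, end: int) -> bool:
        # Change detection: compares only the two endpoints, so a belief
        # that changes and then reverts inside the interval is not flagged
        return self.belief_at(start) != self.belief_at(end)


traj = BeliefTrajectory("Alice")
traj.update(1, "keys in drawer")
traj.update(4, "keys in car")
print(traj.current_belief())        # keys in car
print(traj.belief_at(2))            # keys in drawer
print(traj.changed_between(2, 5))   # True
```

In these terms, the reported asymmetry corresponds to models answering `current_belief`-style questions reliably while failing on `belief_at`-style questions once an update has occurred.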

Measuring Implicit Spatial Coordination in Teams: Effects on Collective Intelligence and Performance

Sep 11, 2025

Abstract: Coordinated teamwork is essential in fast-paced decision-making environments that require dynamic adaptation, often without an opportunity for explicit communication. Although implicit coordination has been studied extensively, most existing work has focused on co-located, synchronous teamwork (such as sports teams) or, for distributed teams, primarily on the coordination of knowledge work. However, many teams (firefighters, military, law enforcement, emergency response) must coordinate their movements in physical space without the benefit of visual cues or extensive explicit communication. This paper investigates how three dimensions of spatial coordination (exploration diversity, movement specialization, and adaptive spatial proximity) influence team performance in a collaborative online search and rescue task in which explicit communication is restricted and team members rely on movement patterns to infer others' intentions and coordinate their actions. Our metrics capture the relational aspects of teamwork by measuring spatial proximity, distribution patterns, and alignment of movements within shared environments. We analyze data from 34 four-person teams (136 participants) assigned to specialized roles in a search and rescue task. Results show that spatial specialization positively predicts performance, while adaptive spatial proximity exhibits a marginal inverted U-shaped relationship with performance, suggesting that moderate levels of adaptation are optimal. Furthermore, the temporal dynamics of these metrics differentiate high- from low-performing teams over time. These findings provide insights into implicit spatial coordination in role-based teamwork and highlight the importance of balanced adaptive strategies, with implications for training and AI-assisted team support systems.
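An inverted U-shaped relationship is typically tested by adding a quadratic term to a regression. The abstract does not give the exact model, so the following is only an illustrative form, with x denoting adaptive spatial proximity and y team performance:

$$
y = \beta_0 + \beta_1 x + \beta_2 x^2 + \varepsilon, \qquad \beta_2 < 0
$$

$$
\frac{d\,\mathbb{E}[y]}{dx} = \beta_1 + 2\beta_2 x = 0 \quad\Longrightarrow\quad x^{*} = -\frac{\beta_1}{2\beta_2}
$$

A negative $\beta_2$ makes the curve concave, so predicted performance peaks at the interior value $x^{*}$ and falls off on either side, which is the formal sense in which moderate adaptation is optimal.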

Learning in Cooperative Multiagent Systems Using Cognitive and Machine Models

Aug 18, 2023

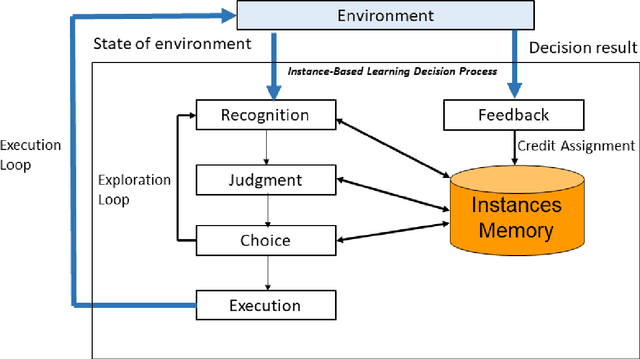

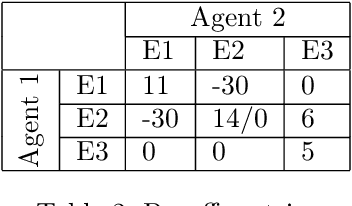

Abstract: Developing effective Multi-Agent Systems (MAS) is critical for many applications requiring collaboration and coordination with humans. Despite the rapid advance of Multi-Agent Deep Reinforcement Learning (MADRL) in cooperative MAS, a major challenge remains: independent agents must learn and interact simultaneously in dynamic environments with stochastic rewards. State-of-the-art MADRL models struggle to perform well in Coordinated Multi-agent Object Transportation Problems (CMOTPs), in which agents must coordinate with each other and learn from stochastic rewards. In contrast, humans often learn rapidly to adapt to nonstationary environments that require coordination among people. In this paper, motivated by the demonstrated ability of cognitive models based on Instance-Based Learning Theory (IBLT) to capture human decisions in many dynamic decision-making tasks, we propose three variants of Multi-Agent IBL models (MAIBL). These MAIBL algorithms combine the cognitive mechanisms of IBLT with techniques from MADRL models to address coordination in MAS with stochastic environments from the perspective of independent learners. We demonstrate that, compared to current MADRL models, the MAIBL models learn faster and achieve better coordination in a dynamic CMOTP task under various settings of stochastic rewards. We discuss the benefits of integrating cognitive insights into MADRL models.
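For reference, the IBLT memory mechanism borrowed from ACT-R is commonly written as follows. This is a simplified form without the partial-matching term, and the MAIBL variants may add further components. Here $T_i(t)$ is the set of past timesteps at which instance $i$ was observed, $d$ is the decay parameter, $\sigma$ the noise parameter, $\xi_{i,t}$ a draw from $U(0, 1)$, $x_i$ the outcome stored in instance $i$, and $\mathcal{I}_k$ the set of instances belonging to option $k$:

$$
A_{i,t} = \ln\Big(\sum_{t' \in T_i(t)} (t - t')^{-d}\Big) + \sigma \ln\frac{1 - \xi_{i,t}}{\xi_{i,t}}
$$

$$
P_{i,t} = \frac{\exp(A_{i,t}/\tau)}{\sum_{j \in \mathcal{I}_k} \exp(A_{j,t}/\tau)}, \qquad \tau = \sigma\sqrt{2}
$$

$$
V_{k,t} = \sum_{i \in \mathcal{I}_k} P_{i,t}\, x_i
$$

The model then selects the option with the highest blended value $V_{k,t}$.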

Credit Assignment: Challenges and Opportunities in Developing Human-like AI Agents

Jul 16, 2023

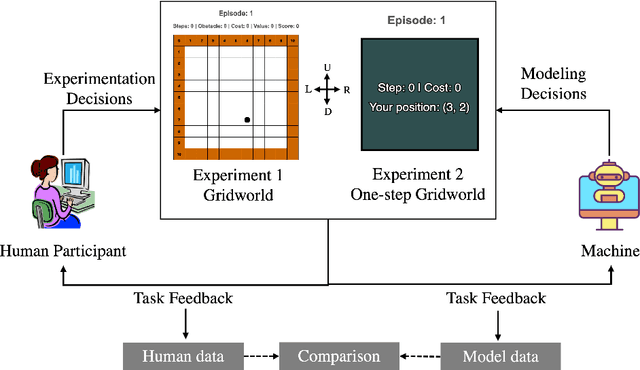

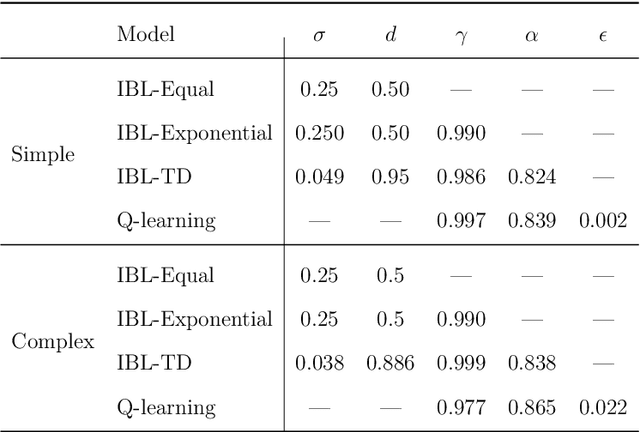

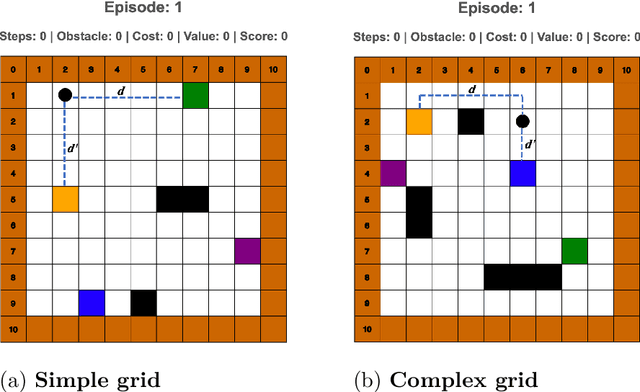

Abstract: Temporal credit assignment is crucial for learning and skill development in natural and artificial intelligence. While computational methods such as the temporal-difference (TD) approach in reinforcement learning have been proposed, it is unclear whether they accurately represent how humans handle feedback delays. Cognitive models aim to represent the mental steps by which humans solve problems and perform tasks, but little research in cognitive science has addressed the credit assignment problem in humans and cognitive models. Our research uses a cognitive model based on a theory of decisions from experience, Instance-Based Learning Theory (IBLT), to test different credit assignment mechanisms in a goal-seeking navigation task with varying levels of decision complexity. Instance-Based Learning (IBL) models simulate the process of making sequential choices with different credit assignment mechanisms, including a new IBL-TD model that combines the IBL decision mechanism with the TD approach. We found that (1) an IBL model that assigns equal credit to all decisions matches human performance better than the other models, including IBL-TD and Q-learning; (2) IBL-TD and Q-learning models initially underperform humans but eventually outperform them; and (3) humans are influenced by decision complexity, while the models are not. Our study provides insights into the challenges of capturing human behavior and the potential opportunities to use these models in future AI systems that support human activities.
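To make the contrast between credit assignment mechanisms concrete, the sketch below compares giving every decision in an episode the same delayed outcome (the equal-credit scheme described above) with a SARSA-style tabular TD(0) update, in which earlier decisions are credited only through the value estimate of the next decision. Decisions are hypothetical `(state, action)` string pairs, the learning rate `alpha` and discount `gamma` are arbitrary illustrative values, and neither function reproduces the paper's IBL or IBL-TD models.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical decision label: (state, action)
Decision = Tuple[str, str]


def equal_credit(episode: List[Decision], outcome: float) -> Dict[Decision, float]:
    # Equal credit: every decision in the episode receives the same
    # delayed outcome, regardless of how far it was from the goal
    return {decision: outcome for decision in episode}


def sarsa_td0(episode: List[Decision], outcome: float,
              values: Optional[Dict[Decision, float]] = None,
              alpha: float = 0.5, gamma: float = 0.9) -> Dict[Decision, float]:
    # SARSA-style TD(0): reward arrives only at the last step, and each
    # earlier decision is updated toward gamma times the value estimate
    # of the decision that followed it
    values = dict(values or {})
    last = len(episode) - 1
    for i, decision in enumerate(episode):
        reward = outcome if i == last else 0.0
        next_value = 0.0 if i == last else values.get(episode[i + 1], 0.0)
        current = values.get(decision, 0.0)
        values[decision] = current + alpha * (reward + gamma * next_value - current)
    return values


episode = [("s0", "right"), ("s1", "up"), ("s2", "up")]
print(equal_credit(episode, 1.0))   # every decision gets 1.0
v1 = sarsa_td0(episode, 1.0)        # only the last decision moves (to 0.5)
v2 = sarsa_td0(episode, 1.0, v1)    # credit reaches ("s1", "up"): 0.225
```

After one episode, equal credit has already valued every decision, whereas the TD update has moved only the final one; credit reaches earlier decisions gradually over repeated episodes.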

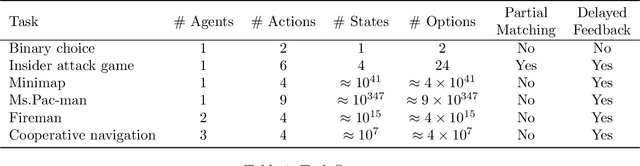

SpeedyIBL: A Solution to the Curse of Exponential Growth in Instance-Based Learning Models of Decisions from Experience

Nov 19, 2021

Abstract: Computational cognitive modeling is a useful methodology for exploring and validating theories of human cognitive processes. Cognitive models are often used to simulate the process by which humans perform a task or solve a problem and to make predictions about human behavior. Cognitive models based on Instance-Based Learning (IBL) Theory rely on a formal computational algorithm for dynamic decision making and on a memory mechanism from a well-known cognitive architecture, ACT-R. To advance the computational theory of human decision making and to demonstrate the usefulness of cognitive models in diverse domains, we must address a practical computational problem, the curse of exponential growth, that emerges from memory-based tabular computations. As observations accumulate, the memory of instances grows exponentially, which leads directly to an exponential slowdown in computation time. In this paper, we propose a new Speedy IBL implementation that replaces the traditional loop-based approach with vectorized and parallel computation. Through the implementation of IBL models in many decision games of increasing complexity, we demonstrate the applicability of the regular IBL models and the advantages of their Speedy implementation. The decision games vary in the complexity of their decision features and in the number of agents involved in the decision process. The results clearly illustrate that Speedy IBL addresses the curse of exponential growth of memory, significantly reducing computation time while maintaining the same level of performance as the traditional implementation of IBL models.
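The loop-versus-vectorization contrast can be illustrated with the ACT-R base-level activation that IBL models compute for every stored instance, ln of the sum of (t - t')^(-d) over the past timesteps t' at which the instance occurred. The following is a minimal NumPy sketch of the general idea, not the SpeedyIBL code: the function names are hypothetical, the noise term is omitted so the two versions can be compared exactly, and it assumes every occurrence time is strictly earlier than the current time `t`.

```python
import math
from typing import List, Sequence

import numpy as np


def activations_loop(occurrences: Sequence[Sequence[float]], t: float,
                     d: float = 0.5) -> List[float]:
    # Loop-based version: a Python-level pass over every instance and
    # every past occurrence of that instance
    result = []
    for times in occurrences:
        total = 0.0
        for t_prime in times:
            total += (t - t_prime) ** (-d)
        result.append(math.log(total))
    return result


def pad_occurrences(occurrences: Sequence[Sequence[float]]) -> np.ndarray:
    # Pack ragged occurrence lists into one 2-D array, padding with NaN
    width = max(len(times) for times in occurrences)
    matrix = np.full((len(occurrences), width), np.nan)
    for row, times in enumerate(occurrences):
        matrix[row, :len(times)] = times
    return matrix


def activations_vectorized(matrix: np.ndarray, t: float,
                           d: float = 0.5) -> np.ndarray:
    # Vectorized version: one array expression for all instances at once;
    # NaN padding propagates through the power and is skipped by nansum
    ages = t - matrix
    return np.log(np.nansum(np.power(ages, -d), axis=1))


history = [[1, 3], [2], [1, 2, 4]]
loop = activations_loop(history, t=5)
vec = activations_vectorized(pad_occurrences(history), t=5)
print(np.allclose(loop, vec))  # True
```

Padding ragged occurrence lists with NaN lets a single array expression replace the nested Python loops, with `np.nansum` ignoring the padding; how SpeedyIBL actually organizes its arrays and parallelism may differ.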
