Thomas Kunz

Unified Emulation-Simulation Training Environment for Autonomous Cyber Agents

Apr 03, 2023

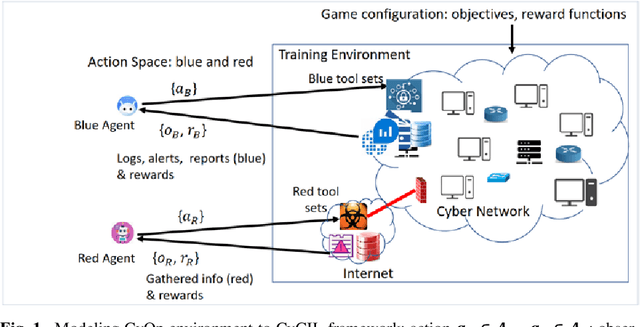

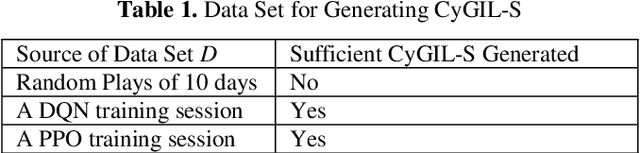

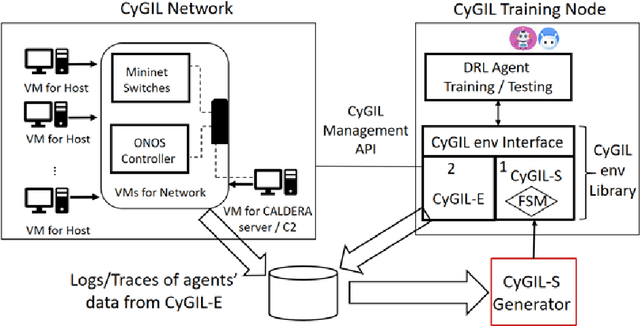

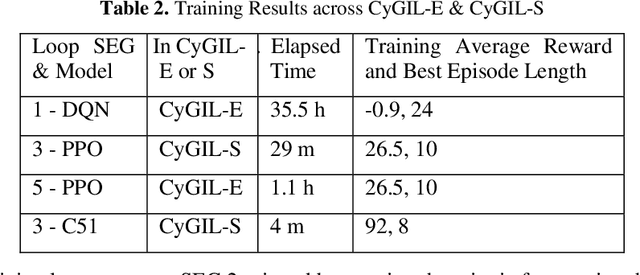

Abstract:Autonomous cyber agents may be developed by applying reinforcement and deep reinforcement learning (RL/DRL), where agents are trained in a representative environment. The training environment must simulate with high-fidelity the network Cyber Operations (CyOp) that the agent aims to explore. Given the complexity of net-work CyOps, a good simulator is difficult to achieve. This work presents a systematic solution to automatically generate a high-fidelity simulator in the Cyber Gym for Intelligent Learning (CyGIL). Through representation learning and continuous learning, CyGIL provides a unified CyOp training environment where an emulated CyGIL-E automatically generates a simulated CyGIL-S. The simulator generation is integrated with the agent training process to further reduce the required agent training time. The agent trained in CyGIL-S is transferrable directly to CyGIL-E showing full transferability to the emulated "real" network. Experimental results are presented to demonstrate the CyGIL training performance. Enabling offline RL, the CyGIL solution presents a promising direction towards sim-to-real for leveraging RL agents in real-world cyber networks.

Enabling A Network AI Gym for Autonomous Cyber Agents

Apr 03, 2023Abstract:This work aims to enable autonomous agents for network cyber operations (CyOps) by applying reinforcement and deep reinforcement learning (RL/DRL). The required RL training environment is particularly challenging, as it must balance the need for high-fidelity, best achieved through real network emulation, with the need for running large numbers of training episodes, best achieved using simulation. A unified training environment, namely the Cyber Gym for Intelligent Learning (CyGIL) is developed where an emulated CyGIL-E automatically generates a simulated CyGIL-S. From preliminary experimental results, CyGIL-S is capable to train agents in minutes compared with the days required in CyGIL-E. The agents trained in CyGIL-S are transferrable directly to CyGIL-E showing full decision proficiency in the emulated "real" network. Enabling offline RL, the CyGIL solution presents a promising direction towards sim-to-real for leveraging RL agents in real-world cyber networks.

A Multiagent CyberBattleSim for RL Cyber Operation Agents

Apr 03, 2023Abstract:Hardening cyber physical assets is both crucial and labor-intensive. Recently, Machine Learning (ML) in general and Reinforcement Learning RL) more specifically has shown great promise to automate tasks that otherwise would require significant human insight/intelligence. The development of autonomous RL agents requires a suitable training environment that allows us to quickly evaluate various alternatives, in particular how to arrange training scenarios that pit attackers and defenders against each other. CyberBattleSim is a training environment that supports the training of red agents, i.e., attackers. We added the capability to train blue agents, i.e., defenders. The paper describes our changes and reports on the results we obtained when training blue agents, either in isolation or jointly with red agents. Our results show that training a blue agent does lead to stronger defenses against attacks. In particular, training a blue agent jointly with a red agent increases the blue agent's capability to thwart sophisticated red agents.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge