Teresa Arora

Impact of Physical Activity on Sleep: A Deep Learning Based Exploration

Jul 24, 2016

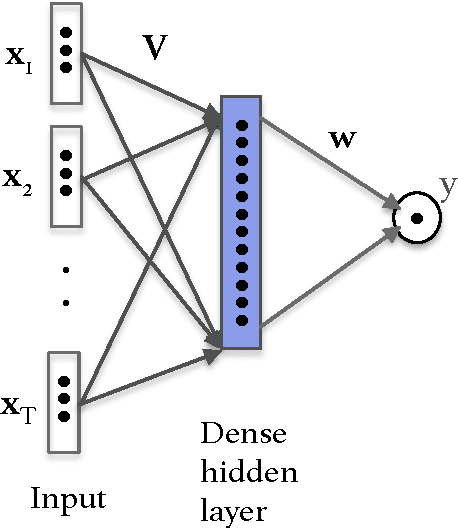

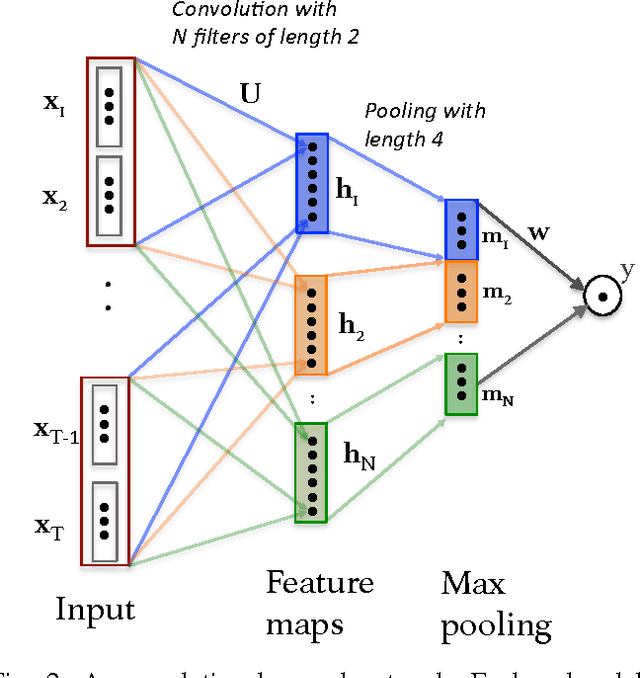

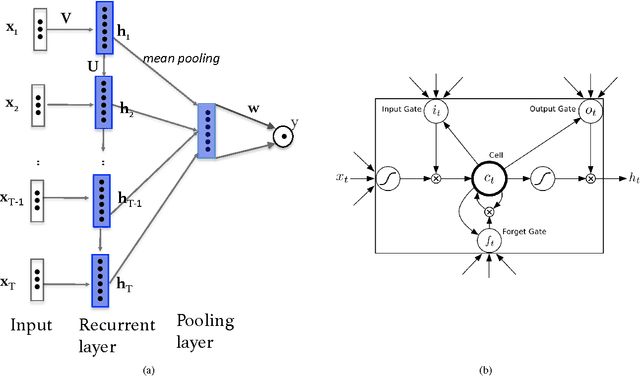

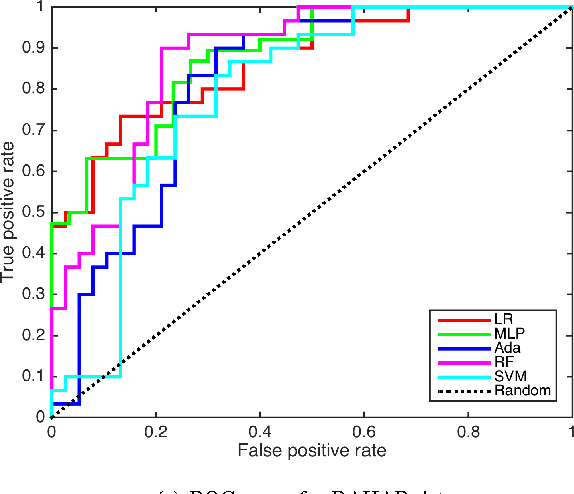

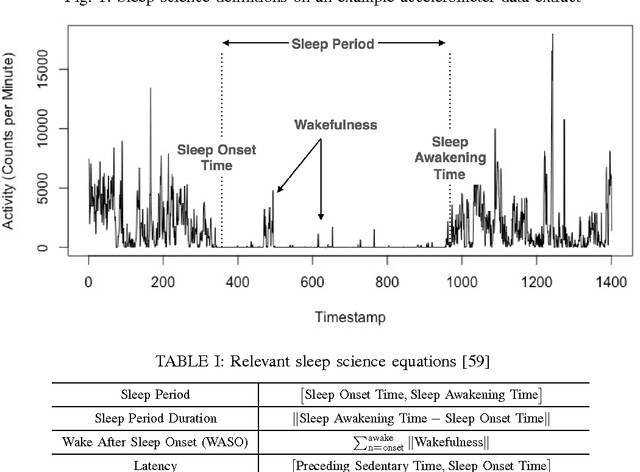

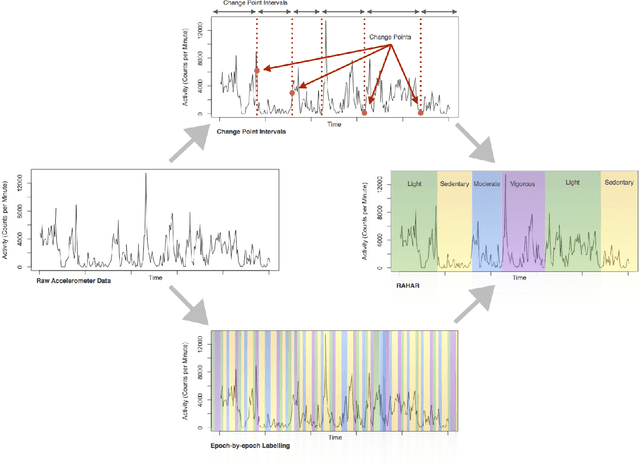

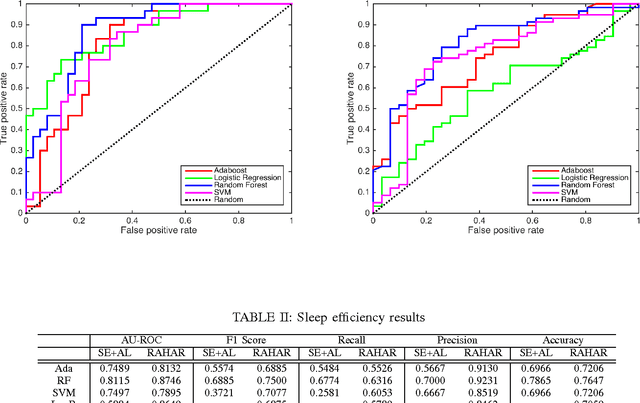

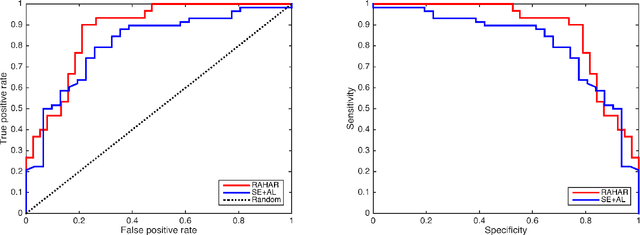

Abstract: Sleep is of paramount importance for maintaining physical, emotional and mental wellbeing. Though the relationship between sleep and physical activity is known to be important, it is not yet fully understood. The explosion in popularity of actigraphy and wearable devices provides a unique opportunity to understand this relationship. Leveraging this information source requires new tools that facilitate data-driven research on sleep and activity recommendations for patients. In this paper we explore the use of deep learning to build sleep quality prediction models based on actigraphy data. We first use deep learning purely as a model-building device: we perform human activity recognition (HAR) on raw sensor data and then use deep learning to build sleep prediction models. We compare the deep learning models with those built using classical approaches, i.e. logistic regression, support vector machines, random forest and AdaBoost. Secondly, we exploit the ability of deep learning to handle high-dimensional datasets. We explore several deep learning models on the raw wearable sensor output without performing HAR or any other feature extraction. Our results show that using a convolutional neural network on the raw wearable output improves the predictive value of sleep quality from physical activity by an additional 8% compared to state-of-the-art non-deep-learning approaches, which themselves show a 15% improvement over current practice. Moreover, applying deep learning to raw data eliminates the need for data pre-processing and simplifies the overall workflow for analyzing actigraphy data in sleep and physical activity research.

Robust Automated Human Activity Recognition and its Application to Sleep Research

Jul 19, 2016

Abstract: Human Activity Recognition (HAR) is a powerful tool for understanding human behaviour. Applying HAR to wearable sensors can provide new insights by enriching the feature set in health studies, and can enhance the personalisation and effectiveness of health, wellness, and fitness applications. Wearable devices provide an unobtrusive platform for user monitoring and, due to their increasing market penetration, feel intrinsic to the wearer. The integration of these devices into daily life provides a unique opportunity for understanding human health and wellbeing. This is referred to as the "quantified self" movement. The analysis of complex health behaviours such as sleep traditionally requires time-consuming manual interpretation by experts. This manual work is necessary due to the erratic periodicity and persistent noisiness of human behaviour. In this paper, we present a robust automated human activity recognition algorithm, which we call RAHAR. We test our algorithm in the application area of sleep research by providing a novel framework for evaluating sleep quality and examining the correlation between sleep quality and an individual's physical activity. Our results improve on the state-of-the-art procedure in sleep research by 15 percent for area under ROC and by 30 percent for F1 score on average. However, the application of RAHAR is not limited to sleep analysis; it can also be used for understanding other health problems such as obesity, diabetes, and cardiac diseases.
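For readers unfamiliar with the two metrics reported above, the following is a minimal sketch of how area under the ROC curve and F1 score can be computed for a binary sleep-quality classifier. It is not the authors' evaluation code: the function names, the labels (1 = good sleep, 0 = poor sleep), the scores, and the 0.5 decision threshold are all hypothetical values chosen for illustration.

```python
def f1_score(y_true, y_pred):
    """F1 = harmonic mean of precision and recall for the positive class (1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def roc_auc(y_true, scores):
    """Area under the ROC curve via its rank interpretation: the probability
    that a random positive is scored above a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("ROC AUC needs at least one positive and one negative")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Hypothetical data: true sleep-quality labels and model scores.
y_true = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.3, 0.2, 0.7]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

print("AUC:", roc_auc(y_true, scores))
print("F1: ", f1_score(y_true, y_pred))
```

AUC depends only on how the scores rank positives against negatives, whereas F1 depends on the chosen threshold, which is one reason the two metrics can improve by different amounts for the same model.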
